Abstract

In the near future, robots and people will work hand in hand. Thanks to technical advances, robots will be able to follow social rules, interact and communicate with people, and move freely in their environment. The number of these so-called social robots is expected to increase significantly, especially in production spaces, where they will form hybrid human-robot teams. This increasing integration of robots into production environments raises the question of how to design an ideal robot for hybrid collaboration. While most research focuses on the technical aspects of human-machine interaction, there is still a strong need for research on the psychological and social aspects that influence cooperation within hybrid teams.

Extending existing research, the present project investigates, in a Wizard-of-Oz experiment conducted in virtual reality, how the appearance and accuracy of a robot influence the outcomes of a collaborative task (i.e., performance, perceived team functionality, and trust).

One major finding was that the accuracy of the robot influenced the level of trust: faulty robots were perceived as less trustworthy. Furthermore, the appearance of the robot influenced the perceived team functionality and the collaborative performance: industrial robots led to higher task reflexivity and performance.

The results will help improve future robot designs that take the psychological needs of human partners into account.



Keywords

- Industry 4.0

- Social robotics

- Hybrid human-robot-teams

- Human-robotic interactions

- Anthropomorphism

- Reactions

- Cooperation

1 Introduction

To date, robots are still largely separated from human workers in production spaces. There are only a few examples where robots and humans work together, such as Amazon’s new fulfillment center in New Jersey [1]. Through technological development and adapted standards, especially in the field of safety [2], the number of shared workspaces and close human-robot collaborations will increase. In the long term, more robots can reduce production costs, compensate for the physical limitations of human workers, and absorb production peaks and staffing bottlenecks. Whereas most research on human-robot collaboration focuses on technical aspects, there is still a strong need to investigate the psychological and social prerequisites for effective collaboration, as pointed out by Charles et al. [3].

Human-robot interaction can vary in many ways, e.g., in the type of task, the ratio of humans to robots, or the understanding of roles [4, 5]. Instead of merely coexisting, humans and robots might be able to cooperate in the future, that is, share a common goal and interact in a coordinated way [6]. It might even be possible to speak of hybrid human-robot teams. Although the term team is usually reserved for human teams, recent research has repeatedly applied the concept to robots. Classically, a team is defined as two or more individuals who have specific roles, perform interdependent tasks, are adaptable, and share a common goal [7]. The closer and more equitable the interaction becomes, the more important human factors become [8]. Charalambous and colleagues [9] proposed a first framework of the human factors that influence the effective implementation and execution of hybrid human-robot collaboration. Among these factors, trust in the robot and the robot’s reliability play a crucial role.

People often take for granted that machines and robots work perfectly and consequently remain unaware of actual dangers. This automation-induced complacency is fostered in particular by high automation reliability [10]. In a meta-analysis, Hancock and colleagues [11] examined critical factors for trust development in hybrid teams and found that the robot’s performance has the strongest association with trust. However, although the robot’s performance influenced the level of trust, Salem and colleagues [12] found that manipulating the robot’s behavior did not influence people’s decision to work with it. This leads to two hypotheses:

- H1: The accuracy of a robot’s behavior influences the level of trust. A faulty robot will be perceived as less trustworthy.

- H2: The accuracy of a robot’s behavior does not influence the perception of team functionality.

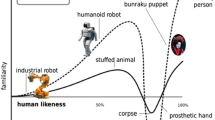

Experiments have shown that a robot’s appearance systematically influences people’s affective responses to it. This effect, building on the notion of the uncanny described by Jentsch [13] and Freud [14], is called the “Uncanny Valley” [15], after the shape of the curve used to illustrate the conjecture. Accordingly, humanoid robots are liked more than industrial robots, until they become so anthropomorphic that the response turns into revulsion. A humanlike appearance is also associated with human attributes; for example, people tend to delegate responsibility more readily to humanlike than to machinelike robots [16]. This is also supported by Hancock and colleagues [11], who identified robot characteristics such as anthropomorphism as a factor influencing trust. The robot’s appearance raises expectations about its role and behavior in a given situation; hybrid collaboration can therefore be improved by matching the robot’s appearance to the task. A robot that confirms these expectations increases people’s sense of the robot’s compatibility with its job, and hence their compliance with the robot [17]. In a production environment, an industrial robot will match the situation better than a humanoid robot, which leads to the following hypotheses:

- H3: The robot’s appearance influences the level of trust. A humanoid robot will be perceived as more trustworthy.

- H4: The robot’s appearance influences the perception of team functionality. An industrial robot will create a higher perceived team functionality.

- H5: The robot’s appearance influences the performance of the collaborative task. An industrial robot will lead to a higher performance.

To test these hypotheses, we conducted a virtual reality experiment with four experimental groups, which is described in the next section. This is followed by a description of the sample and a report of the results. The last section discusses the results and limitations and gives an outlook.

2 Method

To analyze hybrid collaboration in a controlled experiment, a Wizard-of-Oz experiment (Note 1) was designed within a virtual reality setting, using a Virtual Theater (Note 2) and an Oculus Rift Development Kit 2 (DK2) head-mounted display, following the concept described in [18, 19].

In a virtual production hall (see Fig. 1), participants had to complete a task that could only be accomplished through cooperation with the mobile, voice-controlled robot Charles (Note 3). To become accustomed to the virtual environment, participants were given exploration time in which they could walk freely through the production hall and obtain information about the different machines (e.g., lathes, bending machine) by pressing the corresponding buttons. The robot stood in the production hall and offered help with operating the machines through a textual interface, which was also read aloud. Participants were told that they could give the robot instructions by saying “Go to machine…” (“Gehe zu…”) or “Press the button” (“Benutze…”). The robot could only operate machines with a red button and the human could only operate machines with a green button, which participants had to figure out by trial and error. The machines were identified by numbers displayed above them. The actual task instructions appeared when participants operated a specific machine: the task was to operate an electric chain hoist by alternately pressing a green and a red button. To ensure the power supply, either the human or the robot had to stand on a platform. Within five minutes of activating the instructions, they had to pull in as much of the rope as possible. On average, participants spent 15 min in the Virtual Theater.

In a 2 × 2 design, the robot’s characteristics were manipulated: the robot appeared either as an industrial or as a humanoid robot (see Fig. 2) and responded to the human operator either reliably or faultily. Participants were randomly assigned to the conditions. In both behavior conditions, the robot’s behavior was standardized by a predefined activity specification (see Fig. 3).
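The random assignment to the four cells of the 2 × 2 design can be illustrated with a short Python sketch. It assumes block randomization (each consecutive block of four participants receives every condition exactly once), which the text does not specify; the condition labels and the function name `assign_conditions` are hypothetical.

```python
import itertools
import random

# Hypothetical labels for the two manipulated factors of the 2 x 2 design.
APPEARANCES = ("industrial", "humanoid")
BEHAVIORS = ("reliable", "faulty")
CONDITIONS = list(itertools.product(APPEARANCES, BEHAVIORS))


def assign_conditions(participant_ids, seed=None):
    """Block-randomize participants: every block of four consecutive
    participants contains each condition once (an assumed scheme that
    keeps cell sizes balanced)."""
    rng = random.Random(seed)
    assignment = {}
    block = []
    for pid in participant_ids:
        if not block:
            block = CONDITIONS[:]  # refill with one copy of each condition
            rng.shuffle(block)
        assignment[pid] = block.pop()
    return assignment


if __name__ == "__main__":
    for pid, (appearance, behavior) in assign_conditions(range(1, 9), seed=42).items():
        print(pid, appearance, behavior)
```

Simple (unrestricted) randomization would also count as random assignment; the block variant is shown only because it guarantees near-equal cell sizes.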

The experiment was framed by an online pre-survey and post-survey using SoSci Survey (Note 4). The pre-survey collected participants’ personal characteristics, and the post-survey captured their individual, subjective assessment of the hybrid teamwork. An overview of all questionnaires is given in Table 1.

For this paper, we analyzed, as a function of the robot’s characteristics, the level of trust in the robot using an adaptation of Schaefer’s questionnaire [25] and team functionality using the Questionnaire for Teamwork (Fragebogen zur Arbeit im Team, FAT) by Kauffeld and Frieling [23]. Trust was rated by entering percentage values. Example questions are “How much percent of the time did the robot work properly?” or “How much percent of the time did the robot work well in the team?”. Team functionality was assessed on a six-point Likert scale with verbally labeled endpoints. Examples are “Our goals are realistic and achievable. – Our goals are unrealistic and unachievable.” or “We reach all goals with ease. – Sometimes we get the impression that we cannot achieve our goals.”. The reliability of the individual FAT subscales ranged from α = .64 to α = .89 [23].
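Scoring the two instruments can be sketched as follows. The sketch assumes that the adapted trust scale is scored as the mean of its percentage items, and it shows the standard Cronbach’s alpha formula, \( \alpha = \frac{k}{k-1}\left(1 - \frac{\sum_i s_i^2}{s_T^2}\right) \), which underlies reliability values such as those reported for the FAT subscales. The function names are hypothetical, and the data layout (one row per participant, one column per item) is an assumption.

```python
from statistics import mean, variance


def trust_score(item_percentages):
    """Mean of percentage ratings (0-100) across trust items (assumed scoring)."""
    if any(not 0 <= p <= 100 for p in item_percentages):
        raise ValueError("trust items must be percentages between 0 and 100")
    return mean(item_percentages)


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of rows (participants) x columns (items)."""
    k = len(responses[0])
    if k < 2 or len(responses) < 2:
        raise ValueError("need at least two items and two participants")
    item_columns = list(zip(*responses))  # transpose rows into item columns
    sum_item_var = sum(variance(col) for col in item_columns)
    total_var = variance([sum(row) for row in responses])  # variance of sum scores
    return k / (k - 1) * (1 - sum_item_var / total_var)


if __name__ == "__main__":
    print(trust_score([80, 65, 70]))                 # one hypothetical participant
    print(cronbach_alpha([[2, 3], [4, 4], [5, 6]]))  # hypothetical 3 x 2 ratings
```

Sample variances are used throughout; because alpha is a ratio of variances, using population variances consistently would give the same result.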

3 Results

To test the hypotheses described above, factorial analyses of variance (ANOVAs) with the robot’s appearance and behavior as fixed factors were conducted in SPSS 24.
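For readers who want to follow the analysis logic outside SPSS, a two-factor ANOVA can be sketched in plain Python. This sketch assumes equal cell sizes, in which case Type I, II, and III sums of squares coincide; with unequal cells, the Type III sums of squares that SPSS uses by default would be needed instead. The function name and the example scores are hypothetical, not study data.

```python
from statistics import mean


def two_way_anova_balanced(cells):
    """F tests for the main effects A, B and the A x B interaction.

    `cells` maps (level_a, level_b) -> list of scores. Every combination of
    levels must be present and every cell must have the same size n > 1.
    """
    a_levels = sorted({a for a, _ in cells})
    b_levels = sorted({b for _, b in cells})
    n = len(next(iter(cells.values())))
    if any(len(scores) != n for scores in cells.values()):
        raise ValueError("this sketch only handles equal cell sizes")

    grand = mean(x for scores in cells.values() for x in scores)
    mean_a = {a: mean(x for b in b_levels for x in cells[(a, b)]) for a in a_levels}
    mean_b = {b: mean(x for a in a_levels for x in cells[(a, b)]) for b in b_levels}
    cell_mean = {key: mean(scores) for key, scores in cells.items()}

    # Sums of squares for a balanced (orthogonal) design.
    ss_a = n * len(b_levels) * sum((m - grand) ** 2 for m in mean_a.values())
    ss_b = n * len(a_levels) * sum((m - grand) ** 2 for m in mean_b.values())
    ss_ab = n * sum(
        (cell_mean[(a, b)] - mean_a[a] - mean_b[b] + grand) ** 2
        for a in a_levels
        for b in b_levels
    )
    ss_error = sum(
        (x - cell_mean[key]) ** 2 for key, scores in cells.items() for x in scores
    )

    df_a, df_b = len(a_levels) - 1, len(b_levels) - 1
    df_ab = df_a * df_b
    df_error = len(a_levels) * len(b_levels) * (n - 1)
    ms_error = ss_error / df_error
    return {
        "A": (ss_a / df_a / ms_error, df_a, df_error),
        "B": (ss_b / df_b / ms_error, df_b, df_error),
        "AxB": (ss_ab / df_ab / ms_error, df_ab, df_error),
    }


if __name__ == "__main__":
    # Hypothetical scores: factor A = appearance, factor B = behavior.
    demo = {
        ("industrial", "reliable"): [1, 2, 3],
        ("industrial", "faulty"): [2, 3, 4],
        ("humanoid", "reliable"): [3, 4, 5],
        ("humanoid", "faulty"): [4, 5, 6],
    }
    for effect, (f, df1, df2) in two_way_anova_balanced(demo).items():
        print(f"{effect}: F({df1}, {df2}) = {f:.2f}")
```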

Robots that performed reliably were trusted more (\( \bar{x} = 61.70, SD = 14.37 \)) than robots that performed faultily (\( \bar{x} = 55.27, SD = 16.66 \)). This main effect of the robot’s accuracy on the level of trust was significant, F(1, 108) = 4.65, p = .033, ω² = .04. Hence, hypothesis H1 is confirmed. The main effect of appearance and the interaction effect were not significant; thus, hypothesis H3 cannot be confirmed.
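Effect sizes can be recovered from an F statistic and its degrees of freedom. The sketch below implements two common conversions, partial η² and partial ω²; which estimator underlies the values reported here is not stated in the text, and the total sample size N passed to the ω² function must be supplied by the reader (for a 2 × 2 between-subjects design, N = df_error + 4 is assumed).

```python
def partial_eta_squared(f, df_effect, df_error):
    """Partial eta squared, SS_effect / (SS_effect + SS_error), rewritten in F."""
    return f * df_effect / (f * df_effect + df_error)


def partial_omega_squared(f, df_effect, n_total):
    """Partial omega squared,
    (SS_effect - df_effect * MS_error) / (SS_effect + (N - df_effect) * MS_error),
    rewritten in terms of F. Negative estimates (F < 1) are truncated to 0."""
    numerator = df_effect * (f - 1)
    return max(0.0, numerator / (numerator + n_total))


if __name__ == "__main__":
    print(round(partial_eta_squared(4.65, 1, 108), 3))
    print(round(partial_omega_squared(4.65, 1, 112), 3))
```

For instance, F(1, 108) = 4.65 with an assumed N = 112 gives partial η² ≈ .041 and partial ω² ≈ .032 under these formulas, so naming the estimator alongside a value avoids ambiguity.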

The robot’s appearance (Note 5) influenced some FAT dimensions. There was a significant main effect of the robot’s appearance on goal orientation, F(1, 108) = 6.16, p = .014, ω² = .05, and on task management, F(1, 108) = 4.23, p = .039, ω² = .04. The humanoid condition had higher values on both dimensions (\( \bar{x} = 2.85, SD = 1.01 \) vs. \( \bar{x} = 2.39, SD = .97 \) for goal orientation and \( \bar{x} = 3.12, SD = 1.10 \) vs. \( \bar{x} = 2.75, SD = .85 \) for task management); because higher FAT values correspond to the negative pole of the items, this indicates less goal orientation and task management. Together, these two dimensions can be summarized as task reflexivity [31]. The other FAT dimensions, which can be summarized as social reflexivity, did not show significant main effects, so hypothesis H4 is partially confirmed. The teams with both the industrial and the humanoid robot can be classified as fully functioning teams (see Fig. 4). Neither the main effects of behavior nor the interaction effects were significant, which is consistent with hypothesis H2.

Fig. 4. Team functionality of the hybrid teams, following West [31].
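West’s [31] typology crosses task reflexivity with social reflexivity, yielding four team types (commonly labeled fully functioning, cold efficient, cosy, and dysfunctional). A minimal classification sketch follows. It assumes that lower FAT scores indicate more reflexivity, consistent with the interpretation of the means above, and it uses the scale midpoint of 3.5 as a hypothetical cutoff, since the cutoff used for Fig. 4 is not stated.

```python
# Assumptions (hypothetical): FAT scores range from 1 to 6 with the favorable
# pole at 1, so LOWER means indicate MORE reflexivity, and the scale midpoint
# serves as the cutoff between "high" and "low" reflexivity.
SCALE_MIDPOINT = 3.5


def west_team_type(task_reflexivity, social_reflexivity, cutoff=SCALE_MIDPOINT):
    """Map mean task and social reflexivity scores onto West's four team types."""
    high_task = task_reflexivity < cutoff
    high_social = social_reflexivity < cutoff
    if high_task and high_social:
        return "fully functioning"
    if high_task:
        return "cold efficient"
    if high_social:
        return "cosy"
    return "dysfunctional"


if __name__ == "__main__":
    # Hypothetical team means on the 1-6 scale.
    print(west_team_type(2.6, 2.4))
```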

There was a significant main effect of the robot’s appearance on performance, F(1, 104) = 5.06, p = .027, ω² = .05. In collaboration with the humanoid robot, performance (\( \bar{x} = 3.26, SD = 2.25 \)) was lower than with the industrial robot (\( \bar{x} = 4.26, SD = 2.31 \)), which is consistent with hypothesis H5. Neither the main effect of the robot’s behavior nor the interaction between appearance and behavior was significant for performance.

4 Discussion

This study examined the impact of the design of a robotic workmate (appearance and behavior) on trust in the robot, perceived team functionality, and objective team performance. A faulty robot was perceived as less trustworthy, but its accuracy affected neither team functionality nor team performance. The missing effect on performance might be due to the limited time; an effect is assumed if more time is provided for completing the task. An industrial appearance led to higher task reflexivity and better collaborative performance, but appearance had no influence on the level of trust.

The lack of influence of appearance on trust and social reflexivity might result from the still predominant mental model that regards robots, regardless of their appearance, as tools rather than actual teammates [32]. This view may also have been reinforced by the study design, which assigned participants the role of an operator who commands the robot. Although a natural bidirectional language interface was chosen and the robot responded to a human name, which should emphasize the equality of the team members, the robots did not seem to create a strong sense of unity.

Further work will explore how participants’ characteristics (e.g., personality, attitude towards robots) influence the performance of hybrid collaboration and its subjective perception. By analyzing the interactions between human and robot during the experiment (via video analysis), we will also examine whether human-robot teams undergo team development and whether the stages of human team development can be transferred to hybrid teams.

The study provides first implications for how a robotic workmate should be designed, taking into account the interactions with a human partner. Nevertheless, its limitations should be considered. First, due to technical issues and simulation sickness among some participants, comparatively few participants were included in each experimental condition; hence, statistical power was limited. Second, the sample consisted mainly of students, and construction workers, who will be the actual end users, may perceive hybrid collaboration differently. Third, habituation effects might occur, so the described patterns might not persist over time. Fourth, a virtual environment was used to guarantee safe interaction with the robot and to manipulate the robot’s characteristics easily; it remains to be explored whether the findings transfer to real production environments.

Notes

- 1. Participants interact with a system that they believe to be autonomous but that is actually operated by an unseen human.

- 2. The Virtual Theater (by MSE Weibull) is an immersive simulator that combines the natural user interfaces of a head-mounted display and an omnidirectional conveyor belt. A tracking system determines the user’s position and orientation in virtual space. In this study, it was combined with a wireless presenter (Logitech Wireless Presenter R400), which served as an input device.

- 3. The production hall and the robots were designed using Cinema 4D and implemented with Unreal Engine (game engine) and C++.

- 4.

- 5. Measured with the Godspeed questionnaire [29], the humanoid robot was rated as more humanlike (\( \bar{x} = 2.06, SD = .53 \) vs. \( \bar{x} = 2.38, SD = .77 \)), t(114.54) = −2.94, p = .004. Humanoids were also perceived as more animated (\( \bar{x} = 2.54, SD = .57 \) vs. \( \bar{x} = 2.75, SD = .71 \)), t(151) = −2.09, p = .038, more likeable (\( \bar{x} = 3.19, SD = .48 \) vs. \( \bar{x} = 3.78, SD = .53 \)), t(151) = −7.15, p < .001, and more intelligent (\( \bar{x} = 3.09, SD = .67 \) vs. \( \bar{x} = 3.39, SD = .58 \)), t(151) = −2.84, p = .005. No significant difference was found for perceived safety (\( \bar{x} = 2.69, SD = .52 \) vs. \( \bar{x} = 2.54, SD = .58 \)), t(151) = 1.69, p = .093.
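The fractional degrees of freedom in t(114.54) are characteristic of Welch’s t-test, which does not assume equal variances (SPSS reports it in the “equal variances not assumed” row). A minimal sketch of the statistic and its Welch–Satterthwaite degrees of freedom follows; the function name and example data are hypothetical.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(x, y):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom for two
    independent samples with possibly unequal variances."""
    se2_x = variance(x) / len(x)  # squared standard error of mean(x)
    se2_y = variance(y) / len(y)  # squared standard error of mean(y)
    t = (mean(x) - mean(y)) / sqrt(se2_x + se2_y)
    df = (se2_x + se2_y) ** 2 / (
        se2_x ** 2 / (len(x) - 1) + se2_y ** 2 / (len(y) - 1)
    )
    return t, df


if __name__ == "__main__":
    t, df = welch_t([1, 2, 3, 4], [2, 4, 6, 8])  # hypothetical ratings
    print(f"t({df:.2f}) = {t:.2f}")
```

With equal variances and equal group sizes, the degrees of freedom reduce to the familiar n₁ + n₂ − 2.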

References

[1] Knight, W.: Inside Amazon. At a new fulfillment center in New Jersey, humans and robots work together in a highly efficient system. MIT Technol. Rev. (2015)

[2] Lazarte, M.: Robots and humans can work together with new ISO guidance. http://www.iso.org/iso/home/news_index/news_archive/news.htm?refid=Ref2057 (2016). Accessed 13 Jan 2017

[3] Charles, R.L., Charalambous, G., Fletcher, S.: Your new colleague is a robot. Is that ok? In: Proceedings of the International Conference on Ergonomics & Human Factors, pp. 307–311 (2015)

[4] Scholtz, J.: Theory and evaluation of human robot interactions. In: Proceedings of the 36th Hawaii International Conference on System Sciences (2003). doi:10.1109/HICSS.2003.1174284

[5] Yanco, H.A., Drury, J.: Classifying human-robot interaction: an updated taxonomy. In: IEEE International Conference on Systems, Man and Cybernetics (2004). doi:10.1075/z.124.02int

[6] Han, Y.: Software Infrastructure for Configurable Workflow Systems. A Model-Driven Approach Based on Higher Order Object Nets and CORBA, 1st edn. Wissenschaft und Technik, Berlin (1997)

[7] Salas, E., Dickinson, T.L., Converse, S.A., Tannenbaum, S.I.: Toward an understanding of team performance and training. In: Swezey, R.W., Salas, E. (eds.) Teams: Their Training and Performance, pp. 3–29. Ablex Publishing Corporation, Norwood (1992)

[8] Haeussling, R.: Die zwei Naturen sozialer Aktivitaet. Relationalistische Betrachtung aktueller Mensch-Roboter-Kooperationen. In: Rehberg, K.-S. (ed.) Die Natur der Gesellschaft: Verhandlungen des 33. Kongresses der Deutschen Gesellschaft für Soziologie in Kassel 2006, pp. 720–735. Campus Verlag, Frankfurt am Main (2008)

[9] Charalambous, G., Fletcher, S., Webb, P.: Human-automation collaboration in manufacturing: identifying key implementation factors. In: Proceedings of the 11th International Conference on Manufacturing Research (2013). doi:10.1201/b13826-16

[10] Wickens, C.D., Sebok, A., Li, H., Sarter, N., Gacy, A.M.: Using modeling and simulation to predict operator performance and automation-induced complacency with robotic automation: a case study and empirical validation. Hum. Factors (2015). doi:10.1177/0018720814566454

[11] Hancock, P.A., Billings, D.R., Schaefer, K.E., Chen, J.Y.C., de Visser, E.J., Parasuraman, R.: A meta-analysis of factors affecting trust in human-robot interaction. Hum. Factors (2011). doi:10.1177/0018720811417254

[12] Salem, M., Lakatos, G., Amirabdollahian, F., Dautenhahn, K.: Would you trust a (faulty) robot? Effects of error, task type and personality on human-robot cooperation and trust. In: Proceedings of the Tenth Annual ACM/IEEE International Conference on Human-Robot Interaction (2015). doi:10.1145/2696454.2696497

[13] Jentsch, E.: On the psychology of the uncanny (1906). Angelaki: J. Theor. Hum. (1997). doi:10.1080/09697259708571910

[14] Freud, S.: The ‘Uncanny’. The Standard Edition of the Complete Psychological Works of Sigmund Freud, vol. XVII, pp. 217–256 (1919)

[15] Mori, M., MacDorman, K., Kageki, N.: The uncanny valley [from the field]. IEEE Robot. Automat. Mag. (2012). doi:10.1109/MRA.2012.2192811

[16] Hinds, P.J., Roberts, T.L., Jones, H.: Whose job is it anyway? A study of human–robot interaction in a collaborative task. Hum.-Comput. Interact. (2004). doi:10.1207/s15327051hci1901&2_7

[17] Goetz, J., Kiesler, S., Powers, A.: Matching robot appearance and behavior to tasks to improve human-robot cooperation. In: Proceedings of the 12th IEEE International Workshop on Robot and Human Interactive Communication ROMAN (2003). doi:10.1109/ROMAN.2003.1251796

[18] Richert, A., Shehadeh, M.A., Müller, S.L., Schröder, S., Jeschke, S.: Socializing with robots: human-robot interactions within a virtual environment. In: The 2016 IEEE Workshop on Advanced Robotics and Its Social Impacts, IEEE ARSO 2016, 7–10 July 2016, Shanghai, China, pp. 49–54. IEEE, Piscataway (2016). doi:10.1109/ARSO.2016.7736255

[19] Richert, A., Shehadeh, M.A., Müller, S.L., Schröder, S., Jeschke, S.: Robotic workmates: hybrid human-robot-teams in the industry 4.0. In: Proceedings of the 11th International Conference on e-Learning, pp. 127–131 (2016)

[20] Special Eurobarometer 382: Public Attitudes towards Robots. European Commission (2012). http://ec.europa.eu/public_opinion/archives/ebs/ebs_382_en.pdf. Accessed 30 Mar 2016

[21] Hart, S.G.: NASA-Task Load Index (NASA-TLX): 20 years later. In: Proceedings of the Human Factors and Ergonomics Society Annual Meeting (2006). doi:10.1177/154193120605000909

[22] Nomura, T., Kanda, T., Suzuki, T., Kato, K.: Psychology in human-robot communication: an attempt through investigation of negative attitudes and anxiety toward robots. In: Proceedings of the 13th IEEE International Workshop on Robot and Human Interactive Communication (2004). doi:10.1109/ROMAN.2004.1374726

[23] Kauffeld, S., Frieling, E.: Der Fragebogen zur Arbeit im Team (F-A-T). Zeitschrift für Arbeits- und Organisationspsychologie A&O (2001). doi:10.1026//0932-4089.45.1.26

[24] Beier, G.: Kontrollüberzeugungen im Umgang mit Technik. Rep. Psychol. 9, 684–693 (1999)

[25] Schaefer, K.E.: The perception and measurement of human-robot trust. Dissertation, University of Central Florida, Orlando, Florida (2013). http://etd.fcla.edu/CF/CFE0004931/Schaefer_Kristin_E_201308_PhD.pdf. Accessed 17 June 2016

[26] Rammstedt, B., Kemper, C.J., Klein, M.C., Beierlein, C., Kovaleva, A.: Eine kurze Skala zur Messung der fünf Dimensionen der Persönlichkeit. 10 Item Big Five Inventory (BFI-10). methoden, daten, analyse (2013). doi:10.12758/mda.2013.013

[27] Helton, W.S., Näswall, K.: Short stress state questionnaire. Eur. J. Psychol. Assess. (2015). doi:10.1027/1015-5759/a000200

[28] Kennedy, R.S., Lane, N.E., Berbaum, K.S., Lilienthal, M.G.: Simulator sickness questionnaire: an enhanced method for quantifying simulator sickness. Int. J. Aviat. Psychol. (1993). doi:10.1207/s15327108ijap0303_3

[29] Bartneck, C., Kulić, D., Croft, E., Zoghbi, S.: Measurement instruments for the anthropomorphism, animacy, likeability, perceived intelligence, and perceived safety of robots. Int. J. Soc. Robot. (2009). doi:10.1007/s12369-008-0001-3

[30] Wirth, W., Schramm, H., Böcking, S., Gysbers, A., Hartmann, T., Klimmt, C., Vorderer, P.: Entwicklung und Validierung eines Fragebogens zur Entstehung von räumlichem Präsenzerleben. In: Die Brücke zwischen Theorie und Empirie: Operationalisierung, Messung und Validierung in der Kommunikationswissenschaft, pp. 70–95. von Halem, Köln (2008)

[31] West, M.A.: Effective Teamwork. Personal and Professional Development. British Psychological Society, Leicester (1994)

[32] Phillips, E., Ososky, S., Grove, J., Jentsch, F.: From tools to teammates: toward the development of appropriate mental models for intelligent robots. In: Proceedings of the Human Factors and Ergonomics Society Annual Meeting (2011). doi:10.1177/1071181311551310

Acknowledgments

The authors would like to thank the German Federal Ministry of Education and Research (BMBF) for the kind support within the joint project ARIZ (Arbeit in der Industrie der Zukunft) and acknowledge the RWTH Start-Up for the kind support within the project SowiRo (Socializing with Robots).


Copyright information

© 2017 Springer International Publishing AG

About this paper

Cite this paper

Müller, S.L., Schröder, S., Jeschke, S., Richert, A. (2017). Design of a Robotic Workmate. In: Duffy, V. (eds) Digital Human Modeling. Applications in Health, Safety, Ergonomics, and Risk Management: Ergonomics and Design. DHM 2017. Lecture Notes in Computer Science(), vol 10286. Springer, Cham. https://doi.org/10.1007/978-3-319-58463-8_37


DOI: https://doi.org/10.1007/978-3-319-58463-8_37


Publisher Name: Springer, Cham

Print ISBN: 978-3-319-58462-1

Online ISBN: 978-3-319-58463-8

eBook Packages: Computer Science, Computer Science (R0)