Abstract

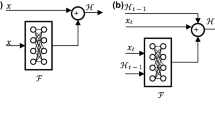

Gradient descent learning algorithms for recurrent neural networks (RNNs) perform poorly on long-term dependency problems. In this paper, we propose a novel architecture called the Segmented-Memory Recurrent Neural Network (SMRNN). The SMRNN is trained using an extended real-time recurrent learning algorithm, which is gradient-based. We tested the SMRNN on the standard information latching problem. Our results indicate that gradient descent learning is more effective in the SMRNN than in standard RNNs.

Copyright information

© 2004 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Chen, J., Chaudhari, N.S. (2004). Learning Long-Term Dependencies in Segmented Memory Recurrent Neural Networks. In: Yin, FL., Wang, J., Guo, C. (eds) Advances in Neural Networks – ISNN 2004. ISNN 2004. Lecture Notes in Computer Science, vol 3173. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-28647-9_61

DOI: https://doi.org/10.1007/978-3-540-28647-9_61

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-22841-7

Online ISBN: 978-3-540-28647-9

eBook Packages: Springer Book Archive