Abstract

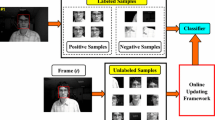

Many learning tasks in computer vision can be described by multiple views or multiple features. These views can be exploited to learn from unlabeled data, an approach known as “multi-view learning”. In these methods, the classifiers usually label a subset of the unlabeled data for each other iteratively and ignore the rest. In this work, we propose a new multi-view boosting algorithm that, unlike other approaches, explicitly encodes the uncertainties over the unlabeled samples in terms of given priors. Instead of ignoring the unlabeled samples during the training phase of each view, we use the different views to provide an aggregated prior, which is then used as a regularization term inside a semi-supervised boosting method. Since we target multi-class applications, we first introduce a multi-class boosting algorithm based on maximizing the multi-class classification margin. Then, we propose our multi-class semi-supervised boosting algorithm, which is able to use priors as a regularization component over the unlabeled data. Since the priors may contain a significant amount of noise, we introduce a new loss function for the unlabeled regularization that is robust to noisy priors. Experimentally, we show that the multi-class boosting algorithm achieves state-of-the-art results on machine learning benchmarks. We also show that the newly proposed loss function is more robust than other alternatives. Finally, we demonstrate the advantages of our multi-view boosting approach for object category recognition and visual object tracking tasks, compared to other multi-view learning methods.
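The idea of an aggregated prior acting as a regularizer on unlabeled data can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the function names `aggregate_prior` and `prior_regularizer` are hypothetical, the prior is taken to be a plain average of the other views' class posteriors, and cross-entropy stands in for the regularization loss. The abstract does not specify the aggregation rule or the robust loss the paper proposes, so this is not the authors' method.

```python
import math


def aggregate_prior(view_posteriors, exclude):
    """Average the class posteriors of every view except `exclude`.

    Assumption: a simple mean; the paper's aggregation rule may differ.
    """
    others = [p for v, p in enumerate(view_posteriors) if v != exclude]
    n_classes = len(others[0])
    return [sum(p[k] for p in others) / len(others) for k in range(n_classes)]


def softmax(scores):
    """Turn raw per-class scores into a probability distribution."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]


def prior_regularizer(prior, scores, eps=1e-12):
    """Cross-entropy between the prior and the model's softmax posterior.

    Assumption: a stand-in loss, NOT the robust loss proposed in the paper.
    """
    posterior = softmax(scores)
    return -sum(q * math.log(p + eps) for q, p in zip(prior, posterior))


# One unlabeled sample, three views, two classes (made-up numbers).
posteriors = [[0.8, 0.2], [0.6, 0.4], [0.2, 0.8]]
prior_for_view0 = aggregate_prior(posteriors, exclude=0)

# The penalty is smaller when view 0's scores agree with the prior.
loss_agree = prior_regularizer(prior_for_view0, [0.0, 2.0])
loss_disagree = prior_regularizer(prior_for_view0, [2.0, 0.0])
```

In this sketch the prior for view 0 leans toward the second class, so scores that favor that class incur the smaller penalty. A noisy prior would pull the classifier in the wrong direction under cross-entropy, which is the motivation the abstract gives for a more robust loss.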

This work has been supported by the Austrian FFG projects MobiTrick (825840) and Outlier (820923) under the FIT-IT program.

Copyright information

© 2010 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Saffari, A., Leistner, C., Godec, M., Bischof, H. (2010). Robust Multi-View Boosting with Priors. In: Daniilidis, K., Maragos, P., Paragios, N. (eds) Computer Vision – ECCV 2010. ECCV 2010. Lecture Notes in Computer Science, vol 6313. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-15558-1_56

DOI: https://doi.org/10.1007/978-3-642-15558-1_56

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-15557-4

Online ISBN: 978-3-642-15558-1

eBook Packages: Computer Science, Computer Science (R0)