Abstract

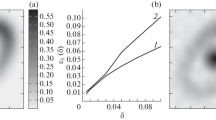

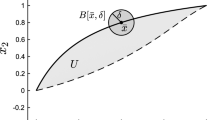

It is shown by example that the reduced Hessian method for constrained optimization, which is known to give 2-step Q-superlinear convergence, may not converge Q-superlinearly.
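
For orientation, the two convergence notions contrasted in the abstract can be written out as follows. This is a sketch using the standard textbook definitions, not text quoted from the paper; the symbols $x_k$ (iterates), $x^*$ (limit point) and $e_k$ (a toy error sequence) are notation assumed here.

```latex
% Q-superlinear convergence of x_k to x^*:
\lim_{k\to\infty} \frac{\|x_{k+1}-x^*\|}{\|x_k-x^*\|} = 0

% 2-step Q-superlinear convergence of x_k to x^*:
\lim_{k\to\infty} \frac{\|x_{k+1}-x^*\|}{\|x_{k-1}-x^*\|} = 0

% Toy scalar error sequence (illustrative only, not the paper's example),
% with 0 < e_0 < 1:
%   e_{2j+1} = e_{2j}, \qquad e_{2j+2} = e_{2j}^2 .
% The two-step ratio vanishes in the limit:
\frac{e_{k+1}}{e_{k-1}} = e_{2\lfloor (k-1)/2 \rfloor} \to 0 ,
% while the one-step ratio equals 1 on every other step:
\frac{e_{2j+1}}{e_{2j}} = 1 .
```

The first property implies the second, since the two-step ratio is the product of two consecutive one-step ratios. The toy sequence shows that the converse can fail, which is the kind of behaviour the abstract reports for the reduced Hessian method.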

Cite this article

Yuan, Y. An only 2-step Q-superlinear convergence example for some algorithms that use reduced hessian approximations. Mathematical Programming 32, 224–231 (1985). https://doi.org/10.1007/BF01586092
