Abstract

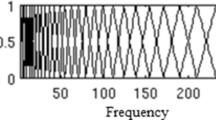

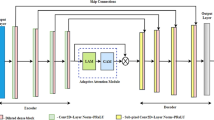

Much effort has been devoted to co-channel (two-talker) speech separation. However, comprehensive analysis of the amplitude modulation spectrum (AMS) for this problem has received little attention. In this paper, we propose an approach that exploits the AMS and performs the separation within the framework of computational auditory scene analysis (CASA). Specifically, the method uses the periodicity encoded in the AMS to perform channel selection. The main features of the approach are: (1) the reassignment method is used to improve the spectral resolution of an AMS computed over short time windows; (2) a template-based pitch detector is used to determine the dominant fundamental frequency (F0) in each individual channel; (3) segmentation and grouping, the two stages of CASA-based approaches, are employed to make channel selection more robust. Systematic evaluation and comparison show that the proposed approach outperforms the previous system.
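The first feature listed in the abstract, using reassignment to sharpen the AMS over short windows, can be illustrated with a minimal NumPy sketch. It assumes the envelope of one filter channel has already been extracted and applies the standard Auger-Flandrin frequency-reassignment formula to a single frame. The function name `reassigned_peak_frequency`, the Hann window, the 16-kHz envelope rate and the 32-ms frame are illustrative assumptions, not the paper's settings.

```python
import numpy as np


def reassigned_peak_frequency(frame, fs):
    """Locate the strongest spectral peak of `frame` and refine it by frequency reassignment.

    Returns (bin_frequency_hz, reassigned_frequency_hz), using
        f_hat = f_k - Im(X_dh[k] * conj(X_h[k])) / (2 * pi * |X_h[k]|**2),
    where X_h is the DFT taken with a Hann window h and X_dh the DFT taken
    with the window's time derivative dh/dt.
    """
    n_len = len(frame)
    n = np.arange(n_len)
    h = np.hanning(n_len)  # 0.5 - 0.5*cos(2*pi*n/(n_len - 1))
    # Analytic derivative of the Hann window with respect to time in seconds.
    dh = fs * np.pi / (n_len - 1) * np.sin(2 * np.pi * n / (n_len - 1))
    x = frame - np.mean(frame)  # remove the envelope's DC so bin 0 does not dominate
    X_h = np.fft.rfft(x * h)
    X_dh = np.fft.rfft(x * dh)
    k = int(np.argmax(np.abs(X_h)))  # coarse peak on the DFT bin grid
    f_bin = k * fs / n_len
    correction = np.imag(X_dh[k] * np.conj(X_h[k])) / (2 * np.pi * np.abs(X_h[k]) ** 2)
    return f_bin, f_bin - correction


fs = 16000                      # assumed sampling rate of the envelope
t = np.arange(512) / fs         # one 32-ms frame: DFT bins are 31.25 Hz apart
envelope = 1.0 + 0.8 * np.cos(2 * np.pi * 147.0 * t)  # synthetic envelope modulated at 147 Hz
f_bin, f_hat = reassigned_peak_frequency(envelope, fs)
print(f"FFT bin: {f_bin:.2f} Hz, reassigned: {f_hat:.2f} Hz")
```

With a 32-ms frame the plain DFT can only report the nearest bin (156.25 Hz here), whereas the reassigned estimate should land within about a hertz of the true 147-Hz modulation rate, which is the kind of resolution gain the abstract refers to.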

Notes

A time-frequency (T-F) unit corresponds to one filter channel at a specific time frame.
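To make the indexing concrete, the following minimal NumPy sketch cuts a multichannel filter-bank output into T-F units u(c, m). The 20-ms frame length, the 10-ms frame shift and the function name `split_into_tf_units` are assumptions for illustration; this note does not state the paper's framing parameters.

```python
import numpy as np


def split_into_tf_units(responses, fs, frame_ms=20.0, shift_ms=10.0):
    """Cut filter-bank output of shape (channels, samples) into T-F units.

    Returns an array of shape (channels, frames, frame_len), so that
    units[c, m] holds the samples of unit u(c, m): channel c at frame m.
    """
    _, n_samples = responses.shape
    frame_len = int(round(fs * frame_ms / 1000.0))
    shift = int(round(fs * shift_ms / 1000.0))
    if n_samples < frame_len:
        raise ValueError("signal is shorter than one frame")
    n_frames = 1 + (n_samples - frame_len) // shift
    frames = [responses[:, m * shift : m * shift + frame_len] for m in range(n_frames)]
    return np.stack(frames, axis=1)


responses = np.random.randn(4, 16000)  # stand-in for a 4-channel, 1-s filter-bank output at 16 kHz
units = split_into_tf_units(responses, fs=16000)
print(units.shape)  # (4, 99, 320)
```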

The equivalent rectangular bandwidth (ERB) of a filter is defined as the bandwidth of an ideal rectangular filter that has a passband response equal to the maximum response of the specified filter and transmits the same total power of white noise as the specified filter.
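The definition above is conceptual. A widely used closed form for human auditory filters is the Glasberg and Moore (1990) approximation ERB(f) = 24.7(4.37f/1000 + 1) Hz; the note does not say which formula the paper uses, so treat it as an assumption here. The sketch below also applies the definition numerically (white-noise power divided by peak power gain) to a 4th-order gammatone filter with bandwidth parameter b = 1.019 ERB, the usual gammatone setting, to show that the two agree. The 80 to 5000 Hz, 128-channel spacing in the final line is a hypothetical example configuration.

```python
import numpy as np


def erb_hz(f):
    """Glasberg-Moore ERB (Hz) of the auditory filter centred at f (Hz)."""
    return 24.7 * (4.37 * np.asarray(f, dtype=float) / 1000.0 + 1.0)


def erb_rate(f):
    """Number of ERBs below frequency f (Hz) on the Glasberg-Moore ERB-rate scale."""
    return 21.4 * np.log10(4.37 * np.asarray(f, dtype=float) / 1000.0 + 1.0)


def erb_spaced_centres(f_low, f_high, n_channels):
    """Centre frequencies equally spaced on the ERB-rate scale (inverse of erb_rate)."""
    e = np.linspace(erb_rate(f_low), erb_rate(f_high), n_channels)
    return (10.0 ** (e / 21.4) - 1.0) * 1000.0 / 4.37


def numerical_erb(impulse_response, fs, n_fft=2**16):
    """Apply the definition directly: ERB = integral of |H(f)|^2 over f >= 0, divided by max |H(f)|^2."""
    power = np.abs(np.fft.rfft(impulse_response, n_fft)) ** 2
    return power.sum() * (fs / n_fft) / power.max()


# 4th-order gammatone centred at 1 kHz with bandwidth parameter b = 1.019 * ERB(fc).
fs, fc = 16000, 1000.0
t = np.arange(int(0.2 * fs)) / fs
b = 1.019 * erb_hz(fc)
gammatone = t**3 * np.exp(-2 * np.pi * b * t) * np.cos(2 * np.pi * fc * t)
print(erb_hz(fc), numerical_erb(gammatone, fs))  # formula vs. definition, both in Hz

centres = erb_spaced_centres(80.0, 5000.0, 128)  # hypothetical 128-channel filter bank
```

The formula gives about 132.6 Hz at 1 kHz, and the numerically measured ERB of the gammatone should agree with it to within a fraction of a percent.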

See the next footnote for the definition of the dominant F0 in a unit.

The dominant F0 in T-F unit $u(c,m)$ is defined as the fundamental frequency corresponding to the maximum of $R_{c,m}(f)$ within the plausible pitch range of human speech, i.e., [100, 400] Hz in this paper.
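The selection rule is a constrained argmax, sketched below in NumPy. This note does not define $R_{c,m}(f)$ itself, so the score used here is a hypothetical stand-in: a harmonic sum over the unit's AMS with weights 0.84^(n-1), in the spirit of subharmonic summation [Hermes]. It is not the paper's template-based detector, and the names `harmonic_template_score` and `dominant_f0` are illustrative.

```python
import numpy as np


def harmonic_template_score(mod_freqs, ams, f0, n_harmonics=5, weight=0.84):
    """Hypothetical R(f0): weighted sum of AMS magnitudes sampled at harmonics of f0."""
    harmonics = f0 * np.arange(1, n_harmonics + 1)
    weights = weight ** np.arange(n_harmonics)  # 1, 0.84, 0.84**2, ...
    # Harmonics beyond the AMS frequency grid contribute nothing (right=0.0).
    return float(np.sum(weights * np.interp(harmonics, mod_freqs, ams, right=0.0)))


def dominant_f0(candidates, scores, f_min=100.0, f_max=400.0):
    """Return the candidate F0 that maximises the score within [f_min, f_max] Hz."""
    candidates = np.asarray(candidates, dtype=float)
    scores = np.asarray(scores, dtype=float)
    in_range = (candidates >= f_min) & (candidates <= f_max)
    if not np.any(in_range):
        raise ValueError("no candidate inside the plausible pitch range")
    idx = np.flatnonzero(in_range)[np.argmax(scores[in_range])]
    return candidates[idx]


# Synthetic AMS of one T-F unit with modulation peaks at 200, 400 and 600 Hz.
mod_freqs = np.arange(0.0, 1001.0, 1.0)
ams = sum(a * np.exp(-0.5 * ((mod_freqs - f) / 8.0) ** 2)
          for f, a in [(200.0, 1.0), (400.0, 0.6), (600.0, 0.3)])
candidates = np.arange(50.0, 501.0, 1.0)
R = np.array([harmonic_template_score(mod_freqs, ams, f) for f in candidates])
print(dominant_f0(candidates, R))
```

The decaying weights are what keep the subharmonic at 100 Hz from outscoring the true 200-Hz F0, so the sketch should report 200 Hz; restricting the argmax to [100, 400] Hz additionally discards candidates outside the speech pitch range.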

A correlogram is the set of autocorrelation functions of the responses of all filters in an auditory filter bank [11].
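A correlogram for one frame can be sketched in a few lines of NumPy. The normalization (each lag's product sum divided by the energies of the two overlapping segments, so values lie in [-1, 1]) and the function name `correlogram` are assumptions for illustration. The lag range up to fs/100 samples matches the lowest pitch (100 Hz) in the range used in this paper.

```python
import numpy as np


def correlogram(responses, max_lag):
    """Normalized autocorrelation A[c, tau] of each channel of a (channels, samples) frame."""
    n_channels, n_samples = responses.shape
    acf = np.zeros((n_channels, max_lag + 1))
    for c in range(n_channels):
        r = responses[c]
        for tau in range(max_lag + 1):
            x0, x1 = r[: n_samples - tau], r[tau:]  # overlapping parts at lag tau
            denom = np.sqrt(np.dot(x0, x0) * np.dot(x1, x1))
            acf[c, tau] = np.dot(x0, x1) / denom if denom > 0 else 0.0
    return acf


fs = 16000
n = np.arange(640)  # one 40-ms frame
frame = np.vstack([np.cos(2 * np.pi * 200.0 * n / fs),   # channel dominated by a 200-Hz component
                   np.cos(2 * np.pi * 250.0 * n / fs)])  # channel dominated by a 250-Hz component
acf = correlogram(frame, max_lag=fs // 100)  # lags up to 10 ms, i.e. pitches down to 100 Hz
print(acf[0, 80], acf[1, 64])  # one pitch period: 16000/200 = 80 and 16000/250 = 64 samples
```

Each channel peaks at the lag equal to its period, which is why summing a correlogram across channels is a common way to expose a shared pitch.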

References

F. Auger, P. Flandrin, Improving the readability of time-frequency and time-scale representations by the reassignment method. IEEE Trans. Signal Process. 43(5), 1068–1089 (1995)

A. Bregman, Auditory Scene Analysis (MIT Press, Cambridge, 1990)

G. Brown, M. Cooke, Computational auditory scene analysis. Comput. Speech Lang. 8, 297–336 (1994)

P. Boersma, D. Weenink, Praat: doing phonetics by computer (Version 4.3.14) (2005). Accessed 21 August 2013. See http://www.fon.hum.uva.nl/praat

T. Dau, D. Püschel, A. Kohlrausch, A quantitative model of the “effective” signal processing in the auditory system. I. Model structure. J. Acoust. Soc. Am. 99(6), 3615–3622 (1996)

D. Ellis, Prediction-driven computational auditory scene analysis. Ph.D. Dissertation, Mass. Inst. of Technol., Cambridge, MA (1996)

C. Fevotte, S. Godsill, A Bayesian approach for blind separation of sparse sources. IEEE Trans. Audio Speech Lang. Process. 14(6), 2174–2188 (2006)

P. Flandrin, F. Auger, E. Chassande-Mottin, Time-frequency reassignment: from principles to algorithms, in Applications in Time-Frequency Signal Processing, ed. by A. Papandreou-Suppappola (CRC Press, Boca Raton, 2003), pp. 179–203

J. Garofolo, L. Lamel, W. Fisher, J. Fiscus, D. Pallett, N. Dahlgren, DARPA TIMIT acoustic-phonetic continuous speech corpus. Technical Report NISTIR 4930, National Inst. of Standards and Technol., Gaithersburg, MD (1993)

D. Hermes, Measurement of pitch by subharmonic summation. J. Acoust. Soc. Am. 83(1), 257–264 (1988)

G. Hu, D. Wang, Monaural speech segregation based on pitch tracking and amplitude modulation. IEEE Trans. Neural Netw. 15(5), 1135–1150 (2004)

G. Hu, D. Wang, A tandem algorithm for pitch estimation and voiced speech segregation. IEEE Trans. Audio Speech Lang. Process. 18(8), 2067–2079 (2010)

G. Hu, D. Wang, Auditory segmentation based on onset and offset analysis. IEEE Trans. Audio Speech Lang. Process. 15(2), 396–405 (2007)

J. Hershey, S. Rennie, P. Olsen, T. Kristjansson, Super-human multi-talker speech recognition: a graphical modeling approach. Comput. Speech Lang. 24, 45–66 (2010)

J. Holdsworth, I. Nimmo-Smith, R. Patterson, P. Rice, Implementing a gammatone filter bank. MRC Appl. Psychology Unit Rep. (1988)

K. Han, D. Wang, A classification based approach to speech segregation. J. Acoust. Soc. Am. 132(5), 3475–3483 (2012)

K. Hu, D. Wang, An unsupervised approach to cochannel speech separation. IEEE Trans. Audio Speech Lang. Process. 21(1), 122–131 (2013)

G. Jang, T. Lee, A probabilistic approach to single channel blind source separation, in Advances in Neural Inf. Process. Syst. (2003), pp. 1173–1180

Z. Jin, D. Wang, HMM-based multipitch tracking for noisy and reverberant speech. IEEE Trans. Audio Speech Lang. Process. 19(5), 1091–1102 (2011)

A. Klapuri, Signal processing methods for the automatic transcription of music. Ph.D. Dissertation, Tampere University of Technol., Finland (2004)

A. Klapuri, Multipitch analysis of polyphonic music and speech signals using an auditory model. IEEE Trans. Audio Speech Lang. Process. 16(2), 255–266 (2008)

B. Kollmeier, R. Koch, Speech enhancement based on physiological and psychoacoustical models of modulation perception and binaural interaction. J. Acoust. Soc. Am. 95(3), 1593–1602 (1994)

G. Kim, Y. Lu, Y. Hu, P. Loizou, An algorithm that improves speech intelligibility in noise for normal-hearing listeners. J. Acoust. Soc. Am. 126(3), 1486–1494 (2009)

M. Lewicki, T. Sejnowski, Learning nonlinear overcomplete representations for efficient coding, in Advances in Neural Inf. Process. Syst., ed. by M. Jordan, M. Kearns, S. Solla (MIT Press, Cambridge, 1998)

N. Li, P. Loizou, Factors influencing intelligibility of ideal binary-masked speech: implications for noise reduction. J. Acoust. Soc. Am. 123(3), 1673–1682 (2008)

P. Li, Y. Guan, B. Xu, W. Liu, Monaural speech separation based on computational auditory scene analysis and objective quality assessment of speech. IEEE Trans. Audio Speech Lang. Process. 14(6), 2014–2023 (2006)

Y. Li, D. Wang, On the optimality of ideal binary time frequency masks. Speech Commun. 51, 230–239 (2009)

A. Mahmoodzadeh, H. Abutalebi, H. Soltanian-Zadeh, H. Sheikhzadeh, Single channel speech separation in modulation frequency domain based on a novel pitch range estimation method. EURASIP J. Adv. Signal Process. (2012). doi:10.1186/1687-6180-2012-67

B. Moore, An Introduction to the Psychology of Hearing (Academic Press, San Diego, 2003)

P. Mowlaee, M. Christensen, S. Jensen, New results on single-channel speech separation using sinusoidal modeling. IEEE Trans. Audio Speech Lang. Process. 19(5), 1265–1277 (2011)

A. Noll, Cepstrum pitch determination. J. Acoust. Soc. Am. 41(2), 293–309 (1967)

F. Plante, G. Meyer, W. Ainsworth, Improvement of speech spectrogram accuracy by the method of reassignment. IEEE Trans. Speech Audio Process. 6(3), 282–286 (1998)

R. Patterson, I. Nimmo-Smith, J. Holdsworth, P. Rice, An efficient auditory filterbank based on the gammatone function. MRC Appl. Psychology Unit Rep. pp. 1–33 (1988)

A. Reddy, B. Raj, Soft mask methods for single-channel speaker separation. IEEE Trans. Audio Speech Lang. Process. 15(6), 1766–1776 (2007)

M. Radfar, R. Dansereau, Single-channel speech separation using soft mask filtering. IEEE Trans. Audio Speech Lang. Process. 15(8), 2299–2310 (2007)

S. Roweis, Factorial models and refiltering for speech separation and denoising, in Proc. ISCA European Conference on Speech Communication and Technology (Eurospeech) (2003), pp. 1009–1012

A. Shapiro, C. Wang, A versatile pitch tracking algorithm: from human speech to killer whale vocalizations. J. Acoust. Soc. Am. 126(1), 451–459 (2009)

H. Strube, H. Wilmers, Noise reduction for speech signals by operation on the modulation frequency spectrum. J. Acoust. Soc. Am. 105(2), 1092 (1999)

M. Stark, M. Wohlmayr, F. Pernkopf, Source-filter-based single-channel speech separation using pitch information. IEEE Trans. Audio Speech Lang. Process. 19(2), 242–255 (2011)

M. Schmidt, R. Olsson, Single-channel speech separation using sparse non-negative matrix factorization, in Proc. of the International Conference on Spoken Lang. Process. (ICSLP) (2006), pp. 2614–2617

S. Schimmel, K. Fitz, L. Atlas, Frequency reassignment for coherent modulation filtering, in Proceedings of the International Conference on Acoust., Speech, and Signal Process. (ICASSP) (2006), pp. 261–264

J. Tchorz, B. Kollmeier, SNR estimation based on amplitude modulation analysis with applications to noise suppression. IEEE Trans. Speech Audio Process. 11(3), 184–192 (2003)

C. Wang, S. Seneff, Robust pitch tracking for prosodic modeling in telephone speech, in Proceedings of the International Conference on Acoust., Speech, and Signal Process. (ICASSP), Istanbul, Turkey (2000), pp. 1143–1146

D. Wang, G. Brown (eds.), Computational Auditory Scene Analysis: Principles, Algorithms, and Applications (Wiley/IEEE Press, New York, 2006)

D. Wang, U. Kjems, M. Pedersen, J. Boldt, Speech perception of noise with binary gains. J. Acoust. Soc. Am. 124(4), 2303–2307 (2008)

D. Wang, On ideal binary masks as the computational goal of auditory scene analysis, in Speech Separation by Humans and Machines, ed. by P. Divenyi (Kluwer Academic, Boston, 2005), pp. 181–197

M. Weintraub, A theory and computational model of auditory monaural sound separation. Ph.D. Dissertation, Stanford University, CA, USA (1985)

R. Weiss, D. Ellis, Speech separation using speaker-adapted eigenvoice speech models. Comput. Speech Lang. 24, 16–29 (2010)

D. Yang, G. Meyer, W. Ainsworth, Vowel separation using the reassigned amplitude-modulation spectrum, in Proc. of the International Conference on Spoken Lang. Process. (ICSLP) (1998), p. 0511

Acknowledgements

This work is supported in part by the National Natural Science Foundation of China (60772039 and 61202265). The authors would also like to thank the anonymous reviewers for their helpful suggestions and criticisms.

Cite this article

Hu, Q., Liang, MG. Co-channel Speech Separation Based on Amplitude Modulation Spectrum Analysis. Circuits Syst Signal Process 33, 565–588 (2014). https://doi.org/10.1007/s00034-013-9656-6

DOI: https://doi.org/10.1007/s00034-013-9656-6