Abstract

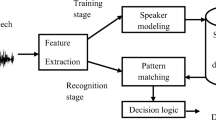

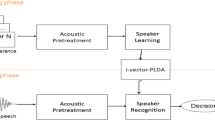

In speaker recognition, a robust recognition method is essential. This paper proposes a speaker verification method based on a time-delay neural network (TDNN) and long short-term memory with a recurrent projection layer (LSTMP) to address the speaker modeling problem. We apply a fusion of TDNN and LSTMP to the i-vector speaker recognition system that is based on the Gaussian mixture model-universal background model (GMM-UBM). Because the fused model can capture long-term dependencies, the universal background model built with it contains more speaker information, which makes it possible to extract more speaker-dependent feature parameters from the speech signal. We evaluated the method on four corpora, two in Chinese and two in English, using the equal error rate (EER), the minimum detection cost function (minDCF), and the detection error tradeoff (DET) curve as performance criteria. The experimental results show that the TDNN–LSTMP/i-vector speaker recognition method outperforms the baseline system on both the Chinese and English corpora and is more robust.
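
As a reading aid for the evaluation criteria named above, the following is a minimal sketch of how the equal error rate and the minimum detection cost function can be computed from verification scores. It is not the authors' evaluation code. The function names, the rule that a trial is accepted when its score is at or above the threshold, the cost parameters (`p_target=0.01`, `c_miss=10`, `c_fa=1`), and the normalization by the cost of the best trivial system are all assumptions made for illustration; the abstract does not state the settings actually used.

```python
import numpy as np


def error_rates(target_scores, nontarget_scores):
    """Miss and false-alarm rates at every candidate threshold.

    Assumption: a trial is accepted when score >= threshold.
    """
    target_scores = np.asarray(target_scores, dtype=float)
    nontarget_scores = np.asarray(nontarget_scores, dtype=float)
    # Candidate thresholds: every observed score, plus +inf (reject everything).
    thresholds = np.unique(np.concatenate([target_scores, nontarget_scores]))
    thresholds = np.append(thresholds, np.inf)
    p_miss = np.array([(target_scores < t).mean() for t in thresholds])
    p_fa = np.array([(nontarget_scores >= t).mean() for t in thresholds])
    return thresholds, p_miss, p_fa


def equal_error_rate(target_scores, nontarget_scores):
    """Error rate at the threshold where miss and false-alarm rates are closest."""
    _, p_miss, p_fa = error_rates(target_scores, nontarget_scores)
    idx = np.argmin(np.abs(p_miss - p_fa))
    return (p_miss[idx] + p_fa[idx]) / 2.0


def min_dcf(target_scores, nontarget_scores, p_target=0.01, c_miss=10.0, c_fa=1.0):
    """Minimum normalized detection cost over all thresholds.

    The default cost parameters are illustrative assumptions.
    """
    _, p_miss, p_fa = error_rates(target_scores, nontarget_scores)
    dcf = c_miss * p_target * p_miss + c_fa * (1.0 - p_target) * p_fa
    # Normalize by the cost of always rejecting or always accepting.
    norm = min(c_miss * p_target, c_fa * (1.0 - p_target))
    return dcf.min() / norm


# Hypothetical toy scores, not data from the paper.
targets = [0.9, 0.8, 0.3]
nontargets = [0.7, 0.2, 0.1]
eer = equal_error_rate(targets, nontargets)
cost = min_dcf(targets, nontargets)
```

A detection error tradeoff curve plots the same `p_miss` and `p_fa` arrays against each other, usually on normal-deviate axes, so the equal error rate is the point where that curve crosses the diagonal.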

Acknowledgements

The research reported here was supported by the China National Nature Science Funds (No. 31772064).

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

About this article

Cite this article

Liu, H., Zhao, L. A Speaker Verification Method Based on TDNN–LSTMP. Circuits Syst Signal Process 38, 4840–4854 (2019). https://doi.org/10.1007/s00034-019-01092-3

DOI: https://doi.org/10.1007/s00034-019-01092-3