Abstract

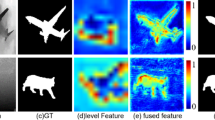

Salient object detection in RGB-D images has attracted increasing attention in recent years. It benefits from the widespread use of depth sensors and supports the comprehensive understanding of RGB-D images. Existing models focus on two-stream networks that transfer from the color stream to the depth stream, but a depth stream with single-channel information cannot learn the same features as a color stream with three-channel information, even when the HHA representation is adopted. In our work, a four-channel RGB-D input is used, and progressive parallel spatial and channel attention mechanisms are applied to improve the feature representation. Spatial and channel attention focus on the positions and channels of the image that show a higher response to salient objects. Both attentive features are optimized by the corresponding attentive feature from the higher layer and are fed in parallel into a recurrent convolutional layer to generate side-output saliency maps, guided by the saliency map from the higher layer. Finally, the multi-level saliency maps are fused from a multi-scale perspective. Experiments on benchmark datasets demonstrate that the parallel attention mechanism and the progressive optimization operation play an important role in improving the accuracy of salient object detection, and that our model outperforms state-of-the-art models on the evaluation metrics.
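As a rough illustration of what applying channel and spatial attention in parallel to the same feature map can look like, the following NumPy sketch may help. It is not the authors' network: the weight matrix `w`, the weight vector `v`, the sigmoid gating, and the random four-channel tensor standing in for a feature map are all assumptions made for the example, and the progressive guidance from higher layers and the recurrent convolutional layer are omitted.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def channel_attention(feat, w):
    """Reweight channels of feat (C, H, W) using a hypothetical (C, C) matrix w."""
    pooled = feat.mean(axis=(1, 2))        # global average pooling -> (C,)
    weights = sigmoid(w @ pooled)          # one gate in (0, 1) per channel
    return feat * weights[:, None, None]


def spatial_attention(feat, v):
    """Reweight positions of feat (C, H, W) using a hypothetical (C,) vector v."""
    score = np.tensordot(v, feat, axes=(0, 0))  # 1x1-conv-like projection -> (H, W)
    mask = sigmoid(score)                       # one gate in (0, 1) per position
    return feat * mask[None, :, :]


def parallel_attention(feat, w, v):
    """Apply both attention branches to the same input, side by side."""
    return channel_attention(feat, w), spatial_attention(feat, v)


rng = np.random.default_rng(0)
rgbd = rng.random((4, 8, 8))       # stand-in for a four-channel RGB-D tensor
w = rng.standard_normal((4, 4))    # assumed channel-attention weights
v = rng.standard_normal(4)         # assumed spatial-attention weights
ca, sa = parallel_attention(rgbd, w, v)
print(ca.shape, sa.shape)
```

Both branches keep the input shape, so their outputs could be passed on together to a later stage; in a trained model `w` and `v` would be learned rather than sampled at random.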

Acknowledgements

We thank Dr. Hao Chen from City University of Hong Kong for providing the saliency maps produced by their models. We also thank Prof. Ming-ming Cheng and Dr. Deng-ping Fan from Nankai University for providing the code of the evaluation metrics. We thank all anonymous reviewers for their valuable comments. This research is supported by the National Natural Science Foundation of China (61602004), the Natural Science Foundation of Anhui Province (1908085MF182), and the Key Program of Natural Science Project of Educational Commission of Anhui Province (KJ2019A0034).

Cite this article

Liu, Z., Duan, Q., Shi, S. et al. Multi-level progressive parallel attention guided salient object detection for RGB-D images. Vis Comput 37, 529–540 (2021). https://doi.org/10.1007/s00371-020-01821-9

DOI: https://doi.org/10.1007/s00371-020-01821-9