Abstract

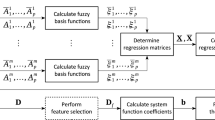

Among the several extensions of fuzzy sets, hesitant fuzzy sets (HFSs) are interesting and practical. This paper proposes an application of HFSs to multiple classifier systems (MCSs), which have proven to be an effective and robust strategy for classification problems. These systems combine different classifiers and generally consist of three steps: generation, selection (optional) and integration. This paper focuses on the selection step and proposes a novel dynamic ensemble selection method. In particular, the proposed method employs several selection criteria to determine the range of competence of the classifiers, and then uses a hesitant fuzzy multiple criteria decision making (HMCDM) method to select the appropriate classifiers. Experimental results show that the proposed framework improves classification accuracy compared with current state-of-the-art dynamic ensemble selection techniques. The Quade nonparametric statistical test confirms the capability of the proposed method.
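The abstract does not specify the selection criteria or the aggregation used by the HMCDM step, so the following is only a minimal sketch of the general idea, under stated assumptions. Each classifier is given a hesitant fuzzy element (one membership value in [0, 1] per criterion), the element is scored by the mean of its values (a common score function for hesitant fuzzy elements, assumed here rather than taken from the paper), and the top-scoring classifiers are kept. The names `hfe_score`, `select_classifiers`, `clf_a` to `clf_c`, and all numbers are hypothetical.

```python
from statistics import mean


def hfe_score(hfe):
    """Score a hesitant fuzzy element as the mean of its membership values.

    Assumption: the mean-based score is used for illustration only; the
    paper may use a different score or aggregation operator.
    """
    return mean(hfe)


def select_classifiers(competence, k):
    """Return the names of the k classifiers with the highest HFE scores.

    `competence` maps a classifier name to its hesitant fuzzy element:
    one membership value in [0, 1] per selection criterion.
    """
    ranked = sorted(
        competence,
        key=lambda name: hfe_score(competence[name]),
        reverse=True,
    )
    return ranked[:k]


# Hypothetical competence values for a single query sample.
# Each list holds the outputs of three (unspecified) selection criteria.
competence = {
    "clf_a": [0.9, 0.7, 0.8],
    "clf_b": [0.4, 0.6, 0.5],
    "clf_c": [0.8, 0.8, 0.5],
}

selected = select_classifiers(competence, k=2)
print(selected)
```

In a dynamic ensemble selection setting, this ranking would be recomputed for every query sample, and only the selected classifiers would take part in the integration step.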

Notes

DESlib is an easy-to-use ensemble learning library in Python that is available on GitHub; authors: Cruz, Rafael M. O., Hafemann, Luiz G., Sabourin, Robert, and Cavalcanti, George D. C.

Ethics declarations

Conflict of interest

The authors declare no conflict of interest.

Ethical standard

This article does not contain any studies with human participants or animals performed by any of the authors.

Informed consent

There is no individual participant included in the study.

Additional information

Communicated by V. Loia.

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

About this article

Cite this article

Elmi, J., Eftekhari, M. Dynamic ensemble selection based on hesitant fuzzy multiple criteria decision making. Soft Comput 24, 12241–12253 (2020). https://doi.org/10.1007/s00500-020-04668-3

DOI: https://doi.org/10.1007/s00500-020-04668-3