Abstract

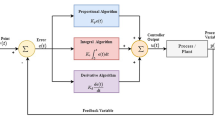

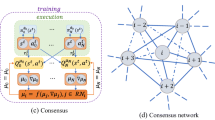

This paper develops an optimal control scheme for unknown complex-valued nonlinear systems. Policy iteration is used to solve the Hamilton–Jacobi–Bellman equation. Off-policy learning allows the iterative performance index and the iterative control to be obtained when the system dynamics are completely unknown. Critic and action networks approximate the iterative performance index and the iterative control, respectively, thereby carrying out policy evaluation and policy improvement. Asymptotic stability of the closed-loop system and convergence of the iterative performance index function are proven. Using the Lyapunov technique, the weight errors are shown to be uniformly ultimately bounded. A simulation study demonstrates the effectiveness of the proposed optimal control method.
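To make the policy evaluation and policy improvement steps concrete, the following is a minimal sketch on a toy problem. It is not the method of the paper. It assumes a scalar, linear, complex-valued system dx/dt = a·x + b·u with known coefficients, a quadratic cost q|x|² + r|u|², and a value function of the form p|x|², so both steps have closed forms and no neural networks or off-policy data are needed. The function names and the numerical values are hypothetical, chosen only for illustration.

```python
import math


def policy_iteration(a, b, q, r, k0=0j, iters=20):
    """Model-based policy iteration for dx/dt = a*x + b*u with u = -k*x.

    Assumed toy setting (not from the paper): a, b are complex scalars,
    the cost is the integral of q*|x|^2 + r*|u|^2, and V(x) = p*|x|^2.
    """
    k = k0
    p = None
    for _ in range(iters):
        # Real part of the closed-loop coefficient; must be negative.
        re_closed = (a - b * k).real
        if re_closed >= 0:
            raise ValueError("current policy is not stabilizing")
        # Policy evaluation: solve 2*Re(a - b*k)*p + q + r*|k|^2 = 0 for p.
        p = (q + r * abs(k) ** 2) / (-2.0 * re_closed)
        # Policy improvement: minimize the Hamiltonian over u.
        k = p * b.conjugate() / r
    return p, k


def riccati_solution(a, b, q, r):
    """Positive root of 2*Re(a)*p + q - |b|^2 * p^2 / r = 0."""
    b2 = abs(b) ** 2
    return r * (a.real + math.sqrt(a.real ** 2 + b2 * q / r)) / b2


if __name__ == "__main__":
    a, b, q, r = -1 + 2j, 1 + 1j, 1.0, 1.0  # hypothetical values
    p, k = policy_iteration(a, b, q, r)
    print("p from policy iteration:", p)
    print("p from Riccati equation:", riccati_solution(a, b, q, r))
    print("feedback gain k:", k)
```

The initial gain `k0 = 0` is admissible here only because the chosen `a` has a negative real part. In this toy setting the iterates can be checked against the closed-form Riccati solution, which is the kind of convergence the abstract claims for the general nonlinear, unknown-dynamics case.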

Acknowledgments

This work was supported in part by the National Natural Science Foundation of China under Grants 61304079 and 61374105, in part by the Fundamental Research Funds for the Central Universities under Grant FRF-TP-15-056A3, and in part by the Open Research Project from SKLMCCS under Grant 20150104.

Cite this article

Song, R., Wei, Q. & Xiao, W. Off-policy neuro-optimal control for unknown complex-valued nonlinear systems based on policy iteration. Neural Comput & Applic 28, 1435–1441 (2017). https://doi.org/10.1007/s00521-015-2144-0

DOI: https://doi.org/10.1007/s00521-015-2144-0