Abstract

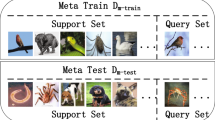

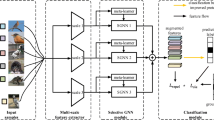

Few-shot learning has recently received considerable attention from researchers. Unlike conventional deep learning, which requires abundant training data, few-shot learning needs only a few labeled samples, and it has therefore been used extensively in scenarios where large numbers of samples cannot be obtained. However, effectively extracting features from a limited number of samples is the most important problem in few-shot learning. To address this problem, a multi-local feature relation network (MLFRNet) is proposed to improve the accuracy of few-shot image classification. First, we obtain local sub-images of each image by random cropping, and these sub-images are used to extract local features. Second, we propose support-query local feature attention by exploring the local feature relationships between the support and query sets. Using this attention, the importance of the local features of each class prototype can be calculated to classify query data. Moreover, we explore the local feature relationships within the support set and propose support-support local feature similarity. Using this similarity, we can adaptively determine the margin loss of the local features, which further improves network accuracy. Experiments on two benchmark datasets show that the proposed MLFRNet achieves state-of-the-art performance. In particular, on the miniImageNet dataset, the proposed method achieves 66.79% (1-shot) and 83.16% (5-shot) accuracy.
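To make the first two steps of the abstract concrete, the following is a minimal NumPy sketch of random local cropping followed by attention-weighted class prototypes. It is not the authors' implementation: the function names, the mean-colour `embed` stand-in for a learned feature extractor, and the cosine-similarity softmax used as the attention score are all assumptions chosen to keep the example small and runnable. The adaptive margin loss is not covered.

```python
import numpy as np


def random_local_crops(image, num_crops, crop_size, rng):
    """Return `num_crops` random square sub-images of an (H, W, C) image."""
    h, w = image.shape[:2]
    crops = []
    for _ in range(num_crops):
        top = rng.integers(0, h - crop_size + 1)
        left = rng.integers(0, w - crop_size + 1)
        crops.append(image[top:top + crop_size, left:left + crop_size])
    return np.stack(crops)


def embed(crops):
    """Hypothetical embedding: mean colour of each crop.

    Assumption: a real system would use a learned network here.
    """
    n, c = crops.shape[0], crops.shape[-1]
    return crops.reshape(n, -1, c).mean(axis=1)


def softmax(x):
    e = np.exp(x - x.max())
    return e / e.sum()


def attention_prototype(support_feats, query_feats):
    """Weight each support local feature by its mean cosine similarity
    to the query local features, then take the weighted average.

    Assumption: this scoring rule only illustrates the idea of
    support-query attention; the paper's formulation may differ.
    """
    eps = 1e-8
    s = support_feats / (np.linalg.norm(support_feats, axis=1, keepdims=True) + eps)
    q = query_feats / (np.linalg.norm(query_feats, axis=1, keepdims=True) + eps)
    weights = softmax((s @ q.T).mean(axis=1))
    return weights @ support_feats


def classify(query_feats, support_feats_by_class):
    """Assign the query to the class with the nearest attention prototype."""
    q_mean = query_feats.mean(axis=0)
    dists = [
        np.linalg.norm(q_mean - attention_prototype(s, query_feats))
        for s in support_feats_by_class
    ]
    return int(np.argmin(dists))


# Toy 2-way 1-shot episode: one pure-red and one pure-blue support image.
rng = np.random.default_rng(0)
red = np.zeros((32, 32, 3))
red[..., 0] = 1.0
blue = np.zeros((32, 32, 3))
blue[..., 2] = 1.0

support = [embed(random_local_crops(img, 4, 16, rng)) for img in (red, blue)]
query = embed(random_local_crops(blue, 4, 16, rng))
predicted = classify(query, support)
```

In this toy episode the query is cropped from the blue image, so `predicted` is the index of the blue class. The point of the sketch is the data flow: several local crops per image, one feature per crop, and a prototype whose local features are re-weighted according to the query.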

Funding

This work was supported by the National Key R&D Program of China (No. 2018YFC0807500) and by the National Natural Science Foundation of China (No. 61806045).

Ethics declarations

Conflict of interest

Li Ren declares that he has no conflict of interest. Guiduo Duan has received research grants from the National Key R&D Program of China (No. 2018YFC0807500). Tianxi Huang declares that he has no conflict of interest. Zhao Kang has received research grants from the National Natural Science Foundation of China (No. 61806045).

Ethical approval

This article does not contain any studies with human participants or animals performed by any of the authors.

About this article

Cite this article

Ren, L., Duan, G., Huang, T. et al. Multi-local feature relation network for few-shot learning. Neural Comput & Applic 34, 7393–7403 (2022). https://doi.org/10.1007/s00521-021-06840-8

DOI: https://doi.org/10.1007/s00521-021-06840-8