Abstract

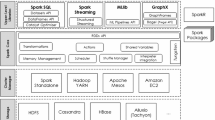

Large-scale optimization problems abound in data mining and machine learning applications, and the computational challenges they pose are often addressed through parallelization. We identify structural properties under which a convex optimization problem can be massively parallelized via map-reduce operations using the Frank–Wolfe (FW) algorithm. The class of problems that can be tackled this way is quite broad and includes experimental design, AdaBoost, and projection onto a convex hull. Implementing FW via map-reduce eases parallelization and deployment on commercial distributed computing frameworks. We demonstrate this by implementing FW over Spark, an engine for parallel data processing, and establish that parallelization through map-reduce yields significant performance improvements: we solve problems with 20 million variables using 350 cores in 79 min, whereas the same operation takes 48 h when executed serially.
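
To make the map-reduce structure concrete, the following is a minimal Python sketch of FW applied to one of the problems named above, projection onto a convex hull, written here as minimizing 0.5 * ||Ax - b||^2 over the probability simplex, where the columns of A are the points spanning the hull. It is an illustration under stated assumptions, not the implementation evaluated in the paper: the function names `local_min_gradient` and `frank_wolfe_hull_projection`, the column-wise partitioning, and the step size 2/(k+2) are choices made for this example, and Python's built-in `map` and `functools.reduce` stand in for the distributed operations that an engine such as Spark would provide.

```python
from functools import reduce


def local_min_gradient(partition, residual):
    """Map step: scan one partition of columns and return the pair
    (gradient value, column index) with the smallest gradient value."""
    best = None
    for j, column in partition:
        # Coordinate j of the gradient A^T (A x - b).
        g = sum(a * r for a, r in zip(column, residual))
        if best is None or g < best[0]:
            best = (g, j)
    return best


def frank_wolfe_hull_projection(columns, b, num_partitions=2, iterations=200):
    """Approximate the point of conv(columns) closest to b.

    Hypothetical helper for illustration. Returns (x, ax), where x holds
    the simplex weights and ax = A x is the corresponding point in the hull.
    """
    n = len(columns)
    # Assign columns to partitions round-robin; drop empty partitions.
    partitions = [
        [(j, columns[j]) for j in range(p, n, num_partitions)]
        for p in range(num_partitions)
    ]
    partitions = [part for part in partitions if part]

    # Start at the first vertex of the simplex.
    x = [0.0] * n
    x[0] = 1.0
    ax = list(columns[0])

    for k in range(iterations):
        # Shared state sent to every partition: the residual A x - b.
        residual = [axi - bi for axi, bi in zip(ax, b)]
        # Map: each partition reports its best coordinate.
        # Reduce: keep the overall minimum (ties broken by smaller index).
        _, j_star = reduce(
            min, map(lambda part: local_min_gradient(part, residual), partitions)
        )
        # Standard diminishing step size (an assumption of this sketch).
        gamma = 2.0 / (k + 2.0)
        # Move toward the selected vertex e_{j_star}.
        x = [(1.0 - gamma) * xi for xi in x]
        x[j_star] += gamma
        ax = [
            (1.0 - gamma) * axi + gamma * cj
            for axi, cj in zip(ax, columns[j_star])
        ]
    return x, ax


if __name__ == "__main__":
    # Three vertices of a triangle in the plane and a target point inside it.
    triangle = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
    weights, point = frank_wolfe_hull_projection(triangle, [0.25, 0.25])
    print(weights, point)
```

In this sketch every iteration shares only the residual A x - b with the partitions, and each partition returns a single (gradient value, index) pair, so the reduce step combines a handful of small records regardless of how many columns a partition holds. Whether a given problem admits such a compact shared state is the kind of structural property the abstract refers to.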

Acknowledgements

We thank our reviewers for their very useful comments and suggestions. This work was supported by the National Science Foundation (NSF) CAREER grant CCF-1750539.

Cite this article

Moharrer, A., Ioannidis, S. Distributing Frank–Wolfe via map-reduce. Knowl Inf Syst 60, 665–690 (2019). https://doi.org/10.1007/s10115-018-1294-7

DOI: https://doi.org/10.1007/s10115-018-1294-7