Abstract

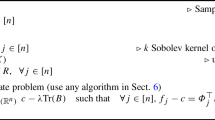

This paper investigates the approximation of multivariate functions from data via linear combinations of translates of a positive definite kernel from a reproducing kernel Hilbert space. If the standard interpolation conditions are relaxed to Chebyshev-type constraints, one can minimize the norm of the approximant in the Hilbert space subject to these constraints. By standard arguments of optimization theory, the solutions take a simple form that depends only on the data associated with the active constraints; in the context of machine learning, these data are called support vectors. The corresponding quadratic programming problems are investigated to some extent. Using monotonicity results for the Hilbert space norm, iterative techniques based on small quadratic subproblems on active sets are shown to terminate after finitely many steps, even if they drop part of their previous information and even if they are applied to infinite data, e.g., in the context of online learning. Numerical experiments confirm the theoretical results.
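
As a rough sketch of the kind of problem described above, one could write the relaxed reconstruction as follows. The notation is assumed here for illustration and is not taken from the paper: data sites $x_1, \dots, x_N$, data values $y_1, \dots, y_N$, a single tolerance $\varepsilon > 0$, and a kernel $K$ with reproducing kernel Hilbert space $\mathcal{H}$. The paper itself may treat more general linear constraints.

```latex
% Assumed notation: x_j data sites, y_j data values, \varepsilon tolerance,
% K the kernel, \mathcal{H} its reproducing kernel Hilbert space.
\min_{s \in \mathcal{H}} \ \tfrac{1}{2}\, \|s\|_{\mathcal{H}}^{2}
\quad \text{subject to} \quad
|s(x_j) - y_j| \le \varepsilon, \qquad j = 1, \dots, N.

% By the Karush-Kuhn-Tucker conditions, only active constraints contribute:
s^{*}(x) = \sum_{j \in A} \alpha_j \, K(x, x_j),
\qquad
A = \{\, j : |s^{*}(x_j) - y_j| = \varepsilon \,\}.
```

Under this reading, the points $x_j$ with $j \in A$ play the role of the support vectors, and the coefficients $\alpha_j$ are obtained from a quadratic program whose size is governed by the number of data points rather than by the dimension of $\mathcal{H}$.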

Additional information

Communicated by Joe Ward

Dedicated to C.A. Micchelli on the occasion of his 60th birthday

Mathematics subject classifications (2000)

65D05, 65D10, 41A15, 41A17, 41A27, 41A30, 41A40, 41A63.

About this article

Cite this article

Schaback, R., Werner, J. Linearly constrained reconstruction of functions by kernels with applications to machine learning. Adv Comput Math 25, 237–258 (2006). https://doi.org/10.1007/s10444-004-7616-1

DOI: https://doi.org/10.1007/s10444-004-7616-1