Abstract

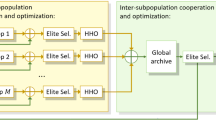

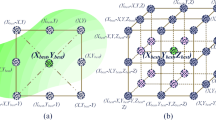

This research introduces a modification of Harris hawk optimization (HHO) and applies it to feature selection. The proposed variant is termed hybrid multi-leader HHO with differential evolution (MLHHDE). To help the hawks escape local optima during the search, an improved memory structure is introduced that lets the hawks learn simultaneously from the historical best and global best positions. A multi-leader mechanism is also introduced to make full use of the valuable information in the global best memories (leaders), diversify the hawks’ search modes, and improve their exploration capability. Furthermore, differential evolution operations are integrated into HHO to strengthen its weak exploration phase. MLHHDE also integrates a V-shaped transfer function, which converts continuous solutions into binary solutions. To validate MLHHDE, we compared its performance with advanced optimization algorithms in three experiments. The first experiment evaluates MLHHDE on a set of problems from the CEC 2017 benchmark. The second applies the binary version of MLHHDE to the feature selection task on a set of sixteen datasets from the UCI repository. In the third, the proposed model is applied as a quantitative structure-activity relationship (QSAR) method to predict influenza H1N1 viruses as a real-world application. The performance of MLHHDE is assessed using a number of evaluation indicators. The experimental results show the strong capability of MLHHDE to find the optimal solution in the two experiments, where it also outperforms the other methods (in both global optimization and feature selection). In addition, MLHHDE achieves an overall accuracy of nearly 84% on the UCI datasets, a difference of 5% from traditional HHO, and an accuracy of 92% with standard deviation when applied to predict H1N1.
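To make the binarization step concrete, the following is a minimal sketch of how a V-shaped transfer function can turn a continuous position into a binary feature mask. The abstract does not state which V-shaped function MLHHDE uses, so `|tanh(x)|` is assumed here as one common member of that family, paired with the bit-flip rule usually associated with V-shaped functions. The names `v_transfer` and `binarize` are hypothetical and are not taken from the paper.

```python
import math
import random


def v_transfer(x: float) -> float:
    """Assumed V-shaped transfer function |tanh(x)|, giving a flip probability."""
    return abs(math.tanh(x))


def binarize(continuous, current_bits, rng):
    """Flip each bit with probability v_transfer(x); otherwise keep it unchanged."""
    new_bits = []
    for x, bit in zip(continuous, current_bits):
        if rng.random() < v_transfer(x):
            new_bits.append(1 - bit)  # large |x|: the bit is likely to flip
        else:
            new_bits.append(bit)      # small |x|: the bit is likely to stay
    return new_bits


rng = random.Random(0)
position = [0.0, 25.0, -25.0, 0.0]  # illustrative continuous values
mask = [1, 0, 1, 0]                 # current feature mask (1 = feature selected)
new_mask = binarize(position, mask, rng)
print(new_mask)
```

In this sketch a component near zero leaves its bit untouched, while a component with large magnitude, of either sign, almost certainly flips it. This symmetry around zero is what distinguishes V-shaped from S-shaped transfer functions.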

Acknowledgements

This work is supported by the Hubei Provincial Science and Technology Major Project of China under Grant No. 2020AEA011, the Key Research & Development Plan of Hubei Province of China under Grant No. 2020BAB100, the project of the Science, Technology and Innovation Commission of Shenzhen Municipality of China under Grant No. JCYJ20210324120002006, and the China Postdoctoral Science Foundation under Grant No. 2019M652647.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

About this article

Cite this article

Abd Elaziz, M., Yang, H. & Lu, S. A multi-leader Harris hawk optimization based on differential evolution for feature selection and prediction influenza viruses H1N1. Artif Intell Rev 55, 2675–2732 (2022). https://doi.org/10.1007/s10462-021-10075-3

DOI: https://doi.org/10.1007/s10462-021-10075-3