Abstract

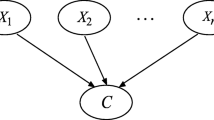

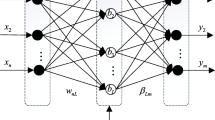

To address the limitations of the classic ID3 and C4.5 decision tree algorithms in time series data mining, this paper adds a Bayesian classification algorithm to the nodes of the decision tree as a preprocessing Bayesian node, forming an incremental learning decision tree. With incremental learning, the decision tree mines time series data more effectively, and its results are more robust and accurate. To verify the effectiveness of the algorithm, time series data mining simulations were conducted on the UCR time series data sets, comparing the performance and efficiency of the ID3 decision tree, the Bayesian algorithm, and the incremental decision tree algorithm under both non-incremental and incremental learning. The experimental results show that, in both incremental and non-incremental time series data mining, the incremental decision tree algorithm based on Bayesian node optimization improves classification accuracy. When the parameters of the Bayesian nodes are optimized, pruning time decreases and the efficiency of data mining improves greatly.
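The abstract does not spell out how the Bayesian node is built, so the following is only a minimal sketch of the incremental part of the idea, assuming a Gaussian naive Bayes classifier whose per-class statistics are updated one sample at a time. The class name `IncrementalGaussianNB`, the `var_floor` parameter, and the toy stream are hypothetical and are not taken from the paper; the decision tree that would host such a node is not shown.

```python
import math
from collections import defaultdict


class IncrementalGaussianNB:
    """Hypothetical Bayesian node: Gaussian naive Bayes updated sample by sample."""

    def __init__(self, var_floor=1e-9):
        self.var_floor = var_floor      # assumption: keeps variances strictly positive
        self.count = defaultdict(int)   # number of samples seen per class
        self.mean = {}                  # per-class list of running feature means
        self.m2 = {}                    # per-class sums of squared deviations

    def partial_fit(self, x, label):
        """Update one class with a single sample (Welford's online update)."""
        if label not in self.mean:
            self.mean[label] = [0.0] * len(x)
            self.m2[label] = [0.0] * len(x)
        self.count[label] += 1
        n = self.count[label]
        for i, value in enumerate(x):
            delta = value - self.mean[label][i]
            self.mean[label][i] += delta / n
            self.m2[label][i] += delta * (value - self.mean[label][i])

    def log_posterior(self, x):
        """Return the unnormalised log P(class | x) for every class seen so far."""
        total = sum(self.count.values())
        scores = {}
        for label, n in self.count.items():
            score = math.log(n / total)  # log prior from class frequencies
            for i, value in enumerate(x):
                var = self.m2[label][i] / n + self.var_floor
                score -= 0.5 * math.log(2 * math.pi * var)
                score -= (value - self.mean[label][i]) ** 2 / (2 * var)
            scores[label] = score
        return scores

    def predict(self, x):
        scores = self.log_posterior(x)
        return max(scores, key=scores.get)


# Toy stream of (features, label) pairs, fed one sample at a time.
node = IncrementalGaussianNB()
stream = [([0.1, 0.2], "a"), ([0.0, 0.3], "a"),
          ([1.0, 1.1], "b"), ([0.9, 1.2], "b")]
for features, label in stream:
    node.partial_fit(features, label)
```

Because each update touches only running counts, means, and squared deviations, new samples can be absorbed without revisiting earlier data, which is the property an incremental learner needs. In a tree, the node's prediction or posterior scores could then be passed on as a preprocessed input to the split decision; that wiring is an assumption here, not something the abstract specifies.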

Cite this article

Xingrong, S. Research on time series data mining algorithm based on Bayesian node incremental decision tree. Cluster Comput 22 (Suppl 4), 10361–10370 (2019). https://doi.org/10.1007/s10586-017-1358-6

DOI: https://doi.org/10.1007/s10586-017-1358-6