Abstract

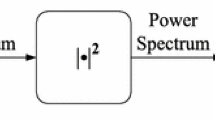

Combining multiple acoustic features has great potential to improve Automatic Speech Recognition (ASR) accuracy. Our contribution in this research is a novel method that combines voiced formant features with standard MFCC features to enhance TIMIT phone recognition. The voiced features are accurate formant frequencies estimated by a Variable Order LPC Coding (VO-LPC) algorithm combined with continuity constraints. The estimated formants were concatenated with the MFCC features whenever a voiced frame was detected. For feature-level combination, a Heteroscedastic Linear Discriminant Analysis (HLDA) based approach was used successfully to find an optimal linear combination of successive vectors of a single feature stream.
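The front end described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: it uses a fixed LPC order (the rule of thumb 2 + fs/1000) as a stand-in for VO-LPC, picks formants as narrow-bandwidth roots of the LPC polynomial without any continuity constraint, fills unvoiced frames with zeros (an assumption, since the abstract does not say what happens there), and splices successive frames as the input an HLDA projection would reduce. Estimating the HLDA transform itself is not shown. All function names and thresholds (`min_hz`, `max_bw_hz`, `k`) are hypothetical.

```python
import numpy as np


def lpc(frame, order):
    """LPC polynomial [1, a_1, ..., a_p] of A(z) = 1 + sum_j a_j z^-j.

    Autocorrelation method solved with the Levinson-Durbin recursion.
    """
    n = len(frame)
    r = np.array([np.dot(frame[: n - k], frame[k:]) for k in range(order + 1)])
    a = np.zeros(order + 1)
    a[0] = 1.0
    err = r[0]
    for i in range(1, order + 1):
        if err <= 0.0:
            break  # silent or degenerate frame: remaining coefficients stay 0
        acc = r[i] + np.dot(a[1:i], r[i - 1 : 0 : -1])
        k = -acc / err  # i-th reflection coefficient
        a[1:i] = a[1:i] + k * a[i - 1 : 0 : -1]
        a[i] = k
        err *= 1.0 - k * k  # updated prediction-error energy
    return a


def formants_from_lpc(a, fs, max_formants=3, min_hz=90.0, max_bw_hz=400.0):
    """Formant candidates = narrow-band roots of A(z) above min_hz (hypothetical thresholds)."""
    roots = np.roots(a)
    roots = roots[np.imag(roots) > 0]  # keep one root per conjugate pair
    freqs = np.angle(roots) * fs / (2 * np.pi)  # pole angle -> frequency in Hz
    bws = -np.log(np.abs(roots)) * fs / np.pi  # pole radius -> bandwidth in Hz
    keep = (freqs > min_hz) & (bws < max_bw_hz)
    return np.sort(freqs[keep])[:max_formants]


def frame_formants(frame, fs, order=None, pre_emph=0.97):
    """Fixed-order stand-in for VO-LPC: pre-emphasis, Hamming window, LPC, root picking."""
    if order is None:
        order = int(2 + fs / 1000)  # rule of thumb, not the paper's variable order
    x = np.append(frame[0], frame[1:] - pre_emph * frame[:-1])
    x = x * np.hamming(len(x))
    return formants_from_lpc(lpc(x, order), fs)


def combine_features(mfcc, formants, voiced, n_formants=3):
    """Append n_formants formant values (in kHz) to each MFCC frame.

    Assumption: unvoiced frames and missing formants are filled with zeros,
    so every output frame has the same dimension D + n_formants.
    """
    T, D = mfcc.shape
    out = np.zeros((T, D + n_formants))
    out[:, :D] = mfcc
    for t in range(T):
        if voiced[t]:
            f = np.asarray(formants[t], dtype=float)[:n_formants] / 1000.0
            out[t, D : D + len(f)] = f
    return out


def stack_context(feats, k=4):
    """Splice each frame with its k left and k right neighbours (edge frames repeated).

    The (2k+1)*D-dimensional result is the kind of input an HLDA projection
    would reduce; estimating that projection is not shown here.
    """
    T = len(feats)
    padded = np.concatenate(
        [np.repeat(feats[:1], k, axis=0), feats, np.repeat(feats[-1:], k, axis=0)]
    )
    return np.hstack([padded[i : i + T] for i in range(2 * k + 1)])
```

In a real system the `voiced` flags would come from a voicing detector and the MFCCs from a standard front end such as HTK's HCopy; both are taken as given here.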

A series of speaker-independent, continuous-speech phone recognition experiments was carried out on a subset of the large read-speech TIMIT corpus, using the Hidden Markov Model Toolkit (HTK) throughout. With this feature-level combination, optimized mixture splitting and a bigram language model, we present a detailed analysis of the combination's performance for Context-Independent (CI) and Context-Dependent (CD) Hidden Markov Models (HMMs). Experimental results showed that the proposed procedure decreased the phone error rate by about 3%. At the phonetic-group level, increases of 8% and 10% were observed for the vowel and liquid groups, respectively. These results show a clear improvement in phone recognition over the existing state of the art.
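Phone error rate is conventionally computed from a minimum-edit-distance alignment between the reference and recognized phone strings. The sketch below, with hypothetical helper names `align_counts` and `phone_error_rate`, counts substitutions (S), deletions (D) and insertions (I) and returns PER = (S + D + I) / N, which equals 100% minus the Accuracy figure, (N - D - S - I) / N, reported by HTK's HResults tool. HResults performs its own weighted alignment, so individual S/D/I counts may differ from this unweighted version where several alignments tie.

```python
from typing import List, Sequence, Tuple


def align_counts(ref: Sequence[str], hyp: Sequence[str]) -> Tuple[int, int, int]:
    """(substitutions, deletions, insertions) of a minimum-edit alignment.

    Ties in total errors are broken by preferring fewer substitutions, then fewer deletions.
    """
    n, m = len(ref), len(hyp)
    # cost[i][j] = (total_errors, subs, dels, ins) for ref[:i] aligned with hyp[:j]
    cost: List[List[Tuple[int, int, int, int]]] = [
        [(0, 0, 0, 0)] * (m + 1) for _ in range(n + 1)
    ]
    for i in range(1, n + 1):
        cost[i][0] = (i, 0, i, 0)  # every reference phone deleted
    for j in range(1, m + 1):
        cost[0][j] = (j, 0, 0, j)  # every hypothesis phone inserted
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            e, s, d, ins = cost[i - 1][j - 1]
            miss = 0 if ref[i - 1] == hyp[j - 1] else 1
            diag = (e + miss, s + miss, d, ins)  # match or substitution
            e, s, d, ins = cost[i - 1][j]
            up = (e + 1, s, d + 1, ins)  # reference phone deleted
            e, s, d, ins = cost[i][j - 1]
            left = (e + 1, s, d, ins + 1)  # extra hypothesis phone inserted
            cost[i][j] = min(diag, up, left)
    _, s, d, ins = cost[n][m]
    return s, d, ins


def phone_error_rate(ref: Sequence[str], hyp: Sequence[str]) -> float:
    """PER = (S + D + I) / N, where N is the number of reference phones."""
    if not ref:
        raise ValueError("reference transcription is empty")
    s, d, ins = align_counts(ref, hyp)
    return (s + d + ins) / len(ref)
```

For example, `phone_error_rate(["sil", "ah", "b", "sil"], ["sil", "ah", "p", "sil"])` aligns one substitution against four reference phones.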

Cite this article

Ben Messaoud, Z., Ben Hamida, A. Combining formant frequency based on variable order LPC coding with acoustic features for TIMIT phone recognition. Int J Speech Technol 14, 393–403 (2011). https://doi.org/10.1007/s10772-011-9119-z
