Abstract

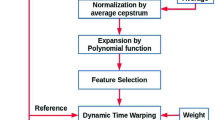

This paper introduces a neural network optimization procedure that generates multilayer perceptron (MLP) topologies with few connections, low complexity and high classification performance for phoneme recognition. To this end, an efficient constructive algorithm with incremental training is proposed, together with a new Frame by Frame Neural Networks (FFNN) classification approach for automatic phoneme recognition. The algorithm relies on a novel procedure for recruiting hidden neurons in a single hidden layer. After an initialization phase that starts with a small number of hidden neurons, the algorithm lets the neural network (NN) adjust its parameters automatically during the training phase. The modular FFNN classification method is then built and tested on the recognition of 5 broad phonetic classes extracted from the TIMIT database. To take into account the speech variability caused by coarticulation, a Context Window of Three Successive Frames (CWTSF) analysis is applied. Although a significant reduction in computational training time is observed, this technique penalized the overall Phone Recognition Rate (PRR) and increased the complexity of the recognition system. To alleviate these limitations, two feature dimensionality reduction techniques, based respectively on Principal Component Analysis (PCA) and Self-Organizing Maps (SOM), are investigated. A significant improvement in the performance of the recognition system is observed when PCA is applied.
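
The abstract does not give the exact feature pipeline, so the following is only a minimal sketch, assuming per-frame feature vectors (for example MFCCs) stored as the rows of a NumPy array and assuming the CWTSF vector is formed by concatenating each frame with its predecessor and successor. It then reduces the tripled dimension with PCA computed through an SVD of the centred data. The function names, the edge-padding rule and the number of retained components `k` are illustrative assumptions, not the authors' settings.

```python
import numpy as np


def context_window(frames: np.ndarray, width: int = 3) -> np.ndarray:
    """Concatenate each frame with its neighbours (hypothetical CWTSF sketch).

    frames: (T, D) array, one feature vector per frame.
    Returns a (T, width * D) array. Edge frames are padded by repeating
    the first/last frame, which is an assumption, not taken from the paper.
    """
    assert width % 2 == 1, "use an odd window so the centre frame is defined"
    half = width // 2
    padded = np.pad(frames, ((half, half), (0, 0)), mode="edge")
    # For width = 3, row t of the result is [x_{t-1}, x_t, x_{t+1}].
    return np.hstack([padded[i:i + len(frames)] for i in range(width)])


def pca_fit(X: np.ndarray, k: int):
    """Return the mean and the top-k principal axes of X (rows = samples)."""
    mean = X.mean(axis=0)
    # Rows of Vt are principal directions, sorted by decreasing variance.
    _, _, Vt = np.linalg.svd(X - mean, full_matrices=False)
    return mean, Vt[:k]


def pca_transform(X: np.ndarray, mean: np.ndarray, axes: np.ndarray) -> np.ndarray:
    """Project centred data onto the retained principal axes."""
    return (X - mean) @ axes.T


# Example: random numbers stand in for 100 frames of 12 coefficients.
rng = np.random.default_rng(0)
X = context_window(rng.normal(size=(100, 12)))  # 36-dimensional windows
mean, axes = pca_fit(X, k=12)
Z = pca_transform(X, mean, axes)
print(X.shape, Z.shape)  # (100, 36) (100, 12)
```

Because the window triples the input dimension, an MLP fed with raw CWTSF vectors has three times as many input-to-hidden connections; projecting back onto `k` components is one way to offset that growth, which matches the motivation the abstract gives for trying PCA.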

An optimal neuronal phone recognition architecture is finally derived according to the following criteria: best PRR, minimum computational training time, and minimum complexity of the back-propagation neural network (BPNN) architecture.

Cite this article

Frikha, M., Masmoudi, S., Ben Hamida, A. et al. Advanced classification approach for neuronal phoneme recognition system based on efficient constructive training algorithm. Int J Speech Technol 16, 273–284 (2013). https://doi.org/10.1007/s10772-012-9177-x
