Abstract

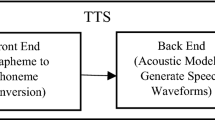

This paper demonstrates the significance of combining different features present in the glottal activity region for statistical parametric speech synthesis (SPSS). These features are broadly categorized as F0, system, and source features, which together represent the quality of speech. The F0 feature is computed using a zero-frequency filter, and the system feature is computed using a 2-D Riesz transform. The source features comprise an aperiodicity component and a phase component. The aperiodicity component, which represents the amount of aperiodicity present in a frame, is computed from the Riesz transform, whereas the phase component is computed by modeling the integrated linear prediction residual. The combined features yield better quality than STRAIGHT-based SPSS in both objective and subjective evaluations. The proposed method is further extended to two Indian languages, Assamese and Manipuri, and shows similar improvements in quality.
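To make the 2-D Riesz transform mentioned above more concrete, the following is a minimal sketch of its generic frequency-domain definition, applied to a toy 2-D cosine pattern rather than to a speech spectrogram. It is not the authors' feature extraction pipeline: the function names `riesz_transform` and `monogenic_amplitude`, the grid size, and the test pattern are all illustrative assumptions.

```python
import numpy as np


def riesz_transform(image):
    """Return the two Riesz components (along axis 1 and axis 0) of a real 2-D array.

    Hypothetical helper: applies the multipliers -j*fx/|f| and -j*fy/|f|
    in the 2-D Fourier domain.
    """
    rows, cols = image.shape
    # Frequency grids in cycles per sample, in the same order as np.fft.fft2.
    fy = np.fft.fftfreq(rows)[:, None]
    fx = np.fft.fftfreq(cols)[None, :]
    radius = np.sqrt(fx**2 + fy**2)
    radius[0, 0] = 1.0  # avoid 0/0 at DC; the numerators are zero there anyway
    spectrum = np.fft.fft2(image)
    r_x = np.real(np.fft.ifft2(-1j * fx / radius * spectrum))
    r_y = np.real(np.fft.ifft2(-1j * fy / radius * spectrum))
    return r_x, r_y


def monogenic_amplitude(image):
    """Local amplitude from the image and its two Riesz components."""
    r_x, r_y = riesz_transform(image)
    return np.sqrt(image**2 + r_x**2 + r_y**2)


# Toy input (assumption): a unit-amplitude 2-D cosine with 4 cycles along x
# and 3 cycles along y across a 64 x 64 grid.
n = 64
y, x = np.mgrid[0:n, 0:n]
phase = 2 * np.pi * (4 * x + 3 * y) / n
pattern = np.cos(phase)

r_x, r_y = riesz_transform(pattern)
amplitude = monogenic_amplitude(pattern)
```

For this pattern the two Riesz components are sine waves scaled by the direction cosines of the carrier (4/5 and 3/5), so the local amplitude is constant. This separation of amplitude from carrier is the property that makes the transform useful for demodulating 2-D patterns.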

Cite this article

Adiga, N., Prasanna, S.R.M. Speech synthesis for glottal activity region processing. Int J Speech Technol 22, 79–91 (2019). https://doi.org/10.1007/s10772-018-09583-5

DOI: https://doi.org/10.1007/s10772-018-09583-5