Abstract

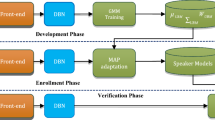

Great progress has been made in speaker recognition by extracting features from Gaussian mixture models (GMMs) or deep neural networks (DNNs). In this paper, to capture speaker-specific characteristics more accurately, we propose a novel deep Gaussian correlation supervector (DGCS) feature based on a DBN-GMM hybrid model. We first extract Mel-frequency cepstral coefficients (MFCCs) from preprocessed speech signals and use a deep belief network (DBN) to obtain bottleneck features. These bottleneck features are then fed to a GMM to extract a deep Gaussian supervector (DGS), which can serve as the input to a support vector machine (SVM) for pattern discrimination and decision. To further account for the correlation between the deep mean vectors of the DGS, the DGS is transformed into the DGCS by supervector recombination. Our experiments show that DGCS significantly improves the recognition rate: by 17.979% over a system using only the supervector, by 18.22% over a system using DGS, and by 1.875% over a system using the correlation supervector. In addition, the proposed DGCS largely reduces the time complexity of the identification task.
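The step of turning frame-level features into a Gaussian supervector can be illustrated with a minimal sketch. It assumes the common relevance-MAP adaptation of GMM component means, which the abstract does not specify. The toy background model, the `relevance` value, and all function names are hypothetical. The frames stand in for DBN bottleneck features, and neither the DBN nor the correlation-based recombination that produces the DGCS is shown.

```python
import math

def log_gauss_diag(x, mean, var):
    """Log density of a diagonal-covariance Gaussian at point x."""
    return -0.5 * sum(
        math.log(2.0 * math.pi * v) + (xi - m) ** 2 / v
        for xi, m, v in zip(x, mean, var)
    )

def posteriors(x, weights, means, variances):
    """Responsibility of each mixture component for one frame x."""
    logs = [math.log(w) + log_gauss_diag(x, m, v)
            for w, m, v in zip(weights, means, variances)]
    top = max(logs)  # subtract the max for numerical stability
    exps = [math.exp(l - top) for l in logs]
    total = sum(exps)
    return [e / total for e in exps]

def map_adapted_supervector(frames, weights, means, variances, relevance=16.0):
    """MAP-adapt the component means to `frames` and stack them into one vector."""
    n_comp, dim = len(means), len(means[0])
    counts = [0.0] * n_comp                      # soft frame counts per component
    sums = [[0.0] * dim for _ in range(n_comp)]  # posterior-weighted feature sums
    for x in frames:
        for k, g in enumerate(posteriors(x, weights, means, variances)):
            counts[k] += g
            for d in range(dim):
                sums[k][d] += g * x[d]
    supervector = []
    for k in range(n_comp):
        alpha = counts[k] / (counts[k] + relevance)  # data-dependent mixing weight
        for d in range(dim):
            data_mean = sums[k][d] / counts[k] if counts[k] > 0 else means[k][d]
            supervector.append(alpha * data_mean + (1.0 - alpha) * means[k][d])
    return supervector

# Toy 2-component, 2-dimensional background model (hypothetical values).
ubm_weights = [0.5, 0.5]
ubm_means = [[0.0, 0.0], [4.0, 4.0]]
ubm_vars = [[1.0, 1.0], [1.0, 1.0]]
# Frames standing in for the bottleneck features of one utterance.
frames = [[0.2, -0.1], [0.4, 0.3], [3.8, 4.1], [4.3, 3.9]]
sv = map_adapted_supervector(frames, ubm_weights, ubm_means, ubm_vars)
print(len(sv))  # number of components times feature dimension
```

In a pipeline of the kind the abstract describes, one such vector per utterance would be passed to an SVM. With few frames per component, the adapted means stay close to the background means, which is the intended effect of the relevance factor.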

Acknowledgements

This work was supported by the National Natural Science Foundation of China (61671252, 61501251) and the Natural Science Foundation of Jiangsu Province (BK20140891).

About this article

Cite this article

Sun, L., Gu, T., Xie, K. et al. Text-independent speaker identification based on deep Gaussian correlation supervector. Int J Speech Technol 22, 449–457 (2019). https://doi.org/10.1007/s10772-019-09618-5

DOI: https://doi.org/10.1007/s10772-019-09618-5