Abstract

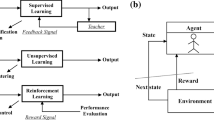

This paper presents a multi-agent reinforcement learning algorithm with fuzzy policies and applies it to control problems in cooperative multi-robot systems. Specifically, a leader-follower robotic system and a flocking system are investigated. In the leader-follower system, the leader robot tries to track a desired trajectory, while the follower robot tries to follow the leader to keep a formation. Two different fuzzy policies are developed, one for the leader and one for the follower. In the flocking system, multiple robots adopt the same fuzzy policy to flock. Initial fuzzy policies are manually crafted for these cooperative behaviors. The proposed learning algorithm then fine-tunes the parameters of the fuzzy policies through the policy gradient approach to improve control performance. Simulation results show that control performance improves after learning.
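The abstract does not give the update rule, so the following is only a minimal sketch of how a policy gradient step might tune the parameters of a fuzzy policy. It is not the authors' algorithm. It assumes a single robot, a one-dimensional tracking error, Gaussian membership functions, tunable rule consequents `theta`, Gaussian action noise, and a REINFORCE-style update with a running baseline. All names, constants, and the toy dynamics are hypothetical.

```python
import math
import random

# All constants below are illustrative assumptions, not values from the paper.
CENTERS = [-1.0, 0.0, 1.0]   # centers of the fuzzy sets over the tracking error
WIDTH = 0.5                  # shared width of the Gaussian membership functions
SIGMA = 0.2                  # standard deviation of the exploration noise


def firing_strengths(error):
    """Normalized Gaussian membership degrees of the error in each fuzzy set."""
    raw = [math.exp(-((error - c) ** 2) / (2 * WIDTH ** 2)) for c in CENTERS]
    total = sum(raw)
    return [r / total for r in raw]


def mean_action(theta, error):
    """Defuzzified output: firing-strength-weighted average of the consequents."""
    return sum(w * t for w, t in zip(firing_strengths(error), theta))


def grad_log_prob(theta, error, action):
    """Gradient of log N(action; mean_action, SIGMA^2) with respect to theta."""
    scale = (action - mean_action(theta, error)) / SIGMA ** 2
    return [scale * w for w in firing_strengths(error)]


def run_episode(theta, rng, steps=20):
    """Toy tracking task (assumed dynamics): the action is subtracted from the error."""
    error = rng.uniform(-1.0, 1.0)
    grads = [0.0] * len(theta)
    ret = 0.0
    for _ in range(steps):
        action = rng.gauss(mean_action(theta, error), SIGMA)
        step_grad = grad_log_prob(theta, error, action)
        grads = [g + s for g, s in zip(grads, step_grad)]
        error -= action
        ret -= error ** 2        # reward is the negative squared tracking error
    return ret, grads


def train(theta, episodes=200, lr=1e-3, seed=0):
    """REINFORCE-style tuning of the consequents, starting from hand-set values."""
    rng = random.Random(seed)
    baseline = 0.0
    for _ in range(episodes):
        ret, grads = run_episode(theta, rng)
        baseline += 0.1 * (ret - baseline)   # running average of the return
        theta = [t + lr * (ret - baseline) * g for t, g in zip(theta, grads)]
    return theta


if __name__ == "__main__":
    initial = [-0.5, 0.0, 0.5]   # stands in for a manually crafted policy
    print(train(initial))
```

In this sketch the hand-set `initial` consequents play the role of the manually crafted policy, and `train` adjusts them in the direction that increases the expected return. A multi-robot version would run one such policy per robot, with the leader and follower using different rule bases and the flocking robots sharing one.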


Cite this article

Gu, D., Yang, E. Fuzzy Policy Reinforcement Learning in Cooperative Multi-robot Systems. J Intell Robot Syst 48, 7–22 (2007). https://doi.org/10.1007/s10846-006-9103-z

DOI: https://doi.org/10.1007/s10846-006-9103-z