Abstract

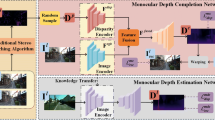

Unsupervised monocular depth estimation is a promising strategy: the network is self-supervised by a view reconstruction loss computed from stereo pairs or monocular sequences. However, most existing works consider only geometric information during training and ignore semantics. We propose SE-Net, a semantic monocular depth estimation framework: a neural network that estimates depth from video sequences with the help of semantic information. The framework as a whole is semi-supervised because it uses labelled semantic ground truth. Because semantically segmented images and depth maps are structurally consistent, we first perform semantic segmentation on the image and then use the semantic labels to guide the construction of the depth estimation network. Experiments on the KITTI dataset show that learning semantic information from images effectively improves monocular depth estimation, and that SE-Net outperforms state-of-the-art methods in depth estimation accuracy.
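To make the idea of a view reconstruction loss concrete, the following is a minimal NumPy sketch of the simplest case, a rectified stereo pair. It is not the SE-Net loss: the function names `warp_horizontal` and `reconstruction_loss`, the single-channel images, the sign convention for disparity, and the plain L1 penalty are all assumptions made for illustration. The target view is re-synthesised by sampling the source view at horizontally shifted coordinates, and the loss is the mean absolute difference between the target and its reconstruction.

```python
import numpy as np

def warp_horizontal(src, disparity):
    """Re-synthesise the target view by sampling `src` at x - disparity.

    src and disparity are (H, W) arrays; sampling uses linear interpolation
    along the horizontal axis and clamps coordinates at the image border.
    (Hypothetical helper, not taken from the paper.)
    """
    h, w = src.shape
    xs = np.arange(w, dtype=np.float64)[None, :] - disparity  # source x-coordinates
    xs = np.clip(xs, 0.0, w - 1.0)
    x0 = np.floor(xs).astype(int)          # left neighbour
    x1 = np.minimum(x0 + 1, w - 1)         # right neighbour, clamped
    frac = xs - x0                         # interpolation weight
    rows = np.arange(h)[:, None]
    return (1.0 - frac) * src[rows, x0] + frac * src[rows, x1]

def reconstruction_loss(target, src, disparity):
    """Mean absolute photometric error between target and warped source."""
    return float(np.mean(np.abs(target - warp_horizontal(src, disparity))))

# Toy example: the target is the source shifted right by two pixels.
rng = np.random.default_rng(0)
src = rng.random((4, 8))
target = src[:, np.maximum(np.arange(8) - 2, 0)]
loss_true = reconstruction_loss(target, src, np.full((4, 8), 2.0))
loss_zero = reconstruction_loss(target, src, np.zeros((4, 8)))
```

In this toy setup the correct disparity reproduces the target, so `loss_true` is lower than `loss_zero`. That gap is the signal a self-supervised network can minimise without depth labels. For monocular sequences the horizontal shift would be replaced by a reprojection that depends on predicted depth and camera pose.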

About this article

Cite this article

Yue, M., Fu, G., Wu, M. et al. Semi-Supervised Monocular Depth Estimation Based on Semantic Supervision. J Intell Robot Syst 100, 455–463 (2020). https://doi.org/10.1007/s10846-020-01205-0

DOI: https://doi.org/10.1007/s10846-020-01205-0