Abstract

Flutter shutter (coded exposure) cameras make it possible to extend the exposure time indefinitely for uniform motion blurs. Recently, Tendero et al. (SIAM J Imaging Sci 6(2):813–847, 2013) proved that, for a fixed known velocity v, the gain of a flutter shutter in terms of root mean square error (RMSE) cannot exceed a factor of 1.1717 compared to an optimal snapshot. This bound is optimal in the sense that the 1.1717 factor can be attained. However, this disheartening bound is in direct contradiction with the recent results of Cossairt et al. (IEEE Trans Image Process 22(2), 447–458, 2013). Our first goal in this paper is to resolve this discrepancy mathematically. An interesting question was raised by Cossairt et al. (IEEE Trans Image Process 22(2), 447–458, 2013). They state that the “gain for computational imaging is significant only when the average signal level J is considerably smaller than the read noise variance \(\sigma _r^2\)” (Cossairt et al., IEEE Trans Image Process 22(2), 447–458, 2013, p. 5). In other words, according to Cossairt et al. (IEEE Trans Image Process 22(2), 447–458, 2013), a flutter shutter would be more efficient when used in low light conditions, e.g., indoor scenes or at night. Our second goal is to prove that this statement is based on an incomplete camera model and that a complete mathematical model disproves it. To do so, we propose a general flutter shutter camera model that includes photonic, thermal, and additive (sensor readout, quantization) noises of finite variances. The amplifier noise may also be mentioned as another source of background noise; it can be included w.l.o.g. in the thermal noise. Likewise, the additive (sensor readout) noise may contain other components such as reset noise and quantization noise; we include them w.l.o.g. in the readout. Our analysis provides exact formulae for the mean square error of the final deconvolved image. 
It also allows us to confirm that the gain in terms of RMSE of any flutter shutter camera is bounded from above by 1.1776 when compared to an optimal snapshot. The bound is uniform with respect to the observation conditions and applies for any fixed known velocity. Incidentally, the proposed formalism and its consequences also apply to, e.g., the motion-invariant photography of Levin et al. (ACM Trans Graphics (TOG), 27(3):71:1–71:9, 2008; Method and apparatus for motion invariant imaging, October 1 2009. US Patent 20,090,244,300, 2009) and its variant (Cho et al. Motion blur removal with orthogonal parabolic exposures, 2010). In short, we provide mathematical proofs that contradict the claims of Cossairt et al. (IEEE Trans Image Process 22(2), 447–458, 2013). Lastly, this paper points out the kind of optimization needed if one wants to turn the flutter shutter into a useful imaging tool.

References

Agrawal, A., Raskar, R.: Resolving objects at higher resolution from a single motion-blurred image. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pp. 1–8 (2007)

Agrawal, A., Raskar, R.: Optimal single image capture for motion deblurring. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pp. 2560–2567 (2009)

Agrawal, A., Xu, Y.: Coded exposure deblurring: optimized codes for PSF estimation and invertibility. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pp. 2066–2073 (2009)

Boracchi, G., Foi, A.: Uniform motion blur in Poissonian noise: blur/noise tradeoff. IEEE Trans. Image Process. 20(2), 592–598 (2011)

Buades, T., Lou, Y., Morel, J.-M., Tang, Z.: A note on multi-image denoising. In: Proceedings of the IEEE International Workshop on Local and Non-Local Approximation in Image Processing (LNLA), pp. 1–15 (2009)

Cho, T.S., Levin, A., Durand, F., Freeman, W.T.: Motion blur removal with orthogonal parabolic exposures. In: Proceedings of the IEEE International Conference on Computational Photography (ICCP), pp. 1–8 (2010)

Cossairt, O., Gupta, M., Mitra, K., Veeraraghavan, A.: Performance bounds for computational imaging. In: Computational Optical Sensing and Imaging. Optical Society of America (2013)

Cossairt, O., Gupta, M., Nayar, S.K.: When does computational imaging improve performance? IEEE Trans. Image Process. 22(2), 447–458 (2013)

Ding, Y., McCloskey, S., Yu, J.: Analysis of motion blur with a flutter shutter camera for non-linear motion. In: Proceedings of the Springer-Verlag European Conference on Computer Vision (ECCV), pp. 15–30 (2010)

Foi, A., Alenius, S., Trimeche, M., Katkovnik, V., Egiazarian, K.: A spatially adaptive Poissonian image deblurring. In: Proceedings of the IEEE International Conference on Image Processing (ICIP), 1:I–925 (2005)

Gehm, M.E., John, R., Brady, D.J., Willett, R.M., Schulz, T.J.: Single-shot compressive spectral imaging with a dual-disperser architecture. Opt. Express 15(21), 14013–14027 (2007)

Hitomi, Y., Gu, J., Gupta, M., Mitsunaga, T., Nayar, S.K.: Video from a single coded exposure photograph using a learned over-complete dictionary. In: Proceedings of the IEEE International Conference on Computer Vision (ICCV) (2011)

Karr, A.F.: Probability. Springer Texts in Statistics. Springer, New York (1993)

Koppal, S.J., Yamazaki, S., Narasimhan, S.G.: Exploiting DLP illumination dithering for reconstruction and photography of high-speed scenes. Int. J. Comput. Vis. 96, 125–144 (2012)

Kozuka, H.: Image sensor, October 29, 2002. US Patent 6,473,538

Krantz, S.G.: A Panorama of Harmonic Analysis. Carus Mathematical Monographs, vol. 27. Mathematical Association of America, New York (1999)

Levin, A., Sand, P., Cho, T.S., Durand, F., Freeman, W.T.: Motion-invariant photography. ACM Trans. Graphics (TOG) 27(3), 71:1–71:9 (2008)

Levin, A., Sand, P., Cho, T.S., Durand, F., Freeman, W.T.: Method and apparatus for motion invariant imaging, October 1 2009. US Patent 20,090,244,300

McCloskey, S.: Temporally coded flash illumination for motion deblurring. In: Proceedings of the IEEE International Conference on Computer Vision (ICCV), pp. 683–690 (2011)

McCloskey, S., Au, W., Jelinek, J.: Iris capture from moving subjects using a fluttering shutter. In: Proceedings of the IEEE International Conference on Biometrics: Theory Applications and Systems (BTAS), pp. 1–6 (2010)

McCloskey, S., Muldoon, K., Venkatesha, S.: Motion invariance and custom blur from lens motion. In: Proceedings of the IEEE International Conference on Computational Photography (ICCP), pp. 1–8 (2011)

Mitra, K., Cossairt, O., Veeraraghavan, A.: A framework for the analysis of computational imaging systems with practical applications. arXiv:1308.1981, PAMI (submitted) (2013)

Mitra, K., Cossairt, O., Veeraraghavan, A.: Performance limits for computational photography. In: International Workshop on Advanced Optical Imaging and Metrology (2013)

Nakamura, K., Ohzu, H., Ueno, I.: Image sensor in which reading and resetting are simultaneously performed, November 16, 1993. US Patent 5,262,870

Narasimhan, S.G., Koppal, S.J., Yamazaki, S.: Temporal dithering of illumination for fast active vision. In: Proceedings of the Springer-Verlag European Conference on Computer Vision (ECCV), pp. 830–844 (2008)

Nayar, S.K., Ben-Ezra, M.: Motion-based motion deblurring. IEEE Trans. Pattern Anal. Mach. Intell. 26(6), 689–698 (2004)

Raskar, R.: Method and apparatus for deblurring images, July 13, 2010. US Patent 7,756,407

Raskar, R., Agrawal, A., Tumblin, J.: Coded exposure photography: motion deblurring using fluttered shutter. ACM Trans. Graphics (TOG) 25(3), 795–804 (2006)

Raskar, R., Tumblin, J., Agrawal, A.: Method for deblurring images using optimized temporal coding patterns, August 25, 2009. US Patent 7,580,620

Reddy, D., Veeraraghavan, A., Chellappa, R.: P2C2: programmable pixel compressive camera for high speed imaging. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pp. 329–336 (2011)

Reddy, D., Veeraraghavan, A., Raskar, R.: Coded strobing photography for high-speed periodic events. In: Imaging Systems. Optical Society of America (2010)

Sarker, A., Hamey, L.G.C.: Improved reconstruction of flutter shutter images for motion blur reduction. In: Proceedings of the IEEE International Conference on Digital Image Computing: Techniques and Applications (DICTA), pp. 417–422 (2010)

Shi, G., Gao, D., Liu, D., Wang, L.: High resolution image reconstruction: a new imager via movable random exposure. In: Proceedings of the IEEE International Conference on Image Processing (ICIP), pp. 1177–1180 (2009)

Shiryaev, A.N.: Probability. Graduate Texts in Mathematics. Springer, New York (1984)

Tai, Y.W., Du, H., Brown, M.S., Lin, S.: Correction of spatially varying image and video motion blur using a hybrid camera. IEEE Trans. Pattern Anal. Mach. Intell. 32(6), 1012–1028 (2010)

Tai, Y.W., Kong, N., Lin, S., Shin, S.Y.: Coded exposure imaging for projective motion deblurring. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pp. 2408–2415 (2010)

Telleen, J., Sullivan, A., Yee, J., Wang, O., Gunawardane, P., Collins, I., Davis, J.: Synthetic shutter speed imaging. Comput. Graphics Forum 26(3), 591–598 (2007)

Tendero, Y.: The flutter shutter camera simulator. Image Process. Line 2, 225–242 (2012)

Tendero, Y.: Are random flutter shutter codes good enough? Submitted (UCLA CAM report 13-84), pp. 1–23 (2013)

Tendero, Y., Morel, J.-M.: An optimal blind temporal motion blur deconvolution filter. IEEE Trans. Signal Process. Lett. 20(5), 523–526 (2013)

Tendero, Y., Morel, J.-M., Rougé, B.: A formalization of the flutter shutter. J. Phys. 386(1), 012001 (2012)

Tendero, Y., Morel, J.-M., Rougé, B.: The flutter shutter paradox. SIAM J. Imaging Sci. 6(2), 813–847 (2013)

Tendero, Y., Osher, S.: Blind uniform motion blur deconvolution for image bursts and video sequences based on sensor characteristics. Submitted (UCLA CAM report 14-17), pp. 1–4 (2014)

Tsai, R.: Pulsed control of camera flash, June 14, 2011. US Patent 7,962,031

Veeraraghavan, A., Reddy, D., Raskar, R.: Coded strobing photography: compressive sensing of high speed periodic events. IEEE Trans. Pattern Anal. Mach. Intell. 33(4), 671–686 (2011)

Wilburn, B., Joshi, N., Vaish, V., Talvala, E.V., Antunez, E., Barth, A., Adams, A., Horowitz, M., Levoy, M.: High performance imaging using large camera arrays. ACM Trans. Graphics (TOG) 24(3), 765–776 (2005)

Xu, S., Liang, H., Tu, D., Li, G.: A deblurring technique for large scale motion blur images using a hybrid camera. In: Proceedings of the IEEE International Congress on Image and Signal Processing (ICISP), vol. 2, pp. 806–810 (2010)

Xu, W., McCloskey, S.: 2D Barcode localization and motion deblurring using a flutter shutter camera. In: Proceedings of the IEEE Workshop on Applications of Computer Vision (WACV), pp. 159–165 (2011)

Acknowledgments

Yohann Tendero is partially supported by the grants ONR No: N00014-14-1-0683 and BEA Appareo. Part of this work was done at the UCLA Math. dept. Jean-Michel Morel is partially funded by the European Research Council (advanced grant Twelve Labours No: 246961), the Office of Naval Research (ONR grant N00014-14-1-0023), and ANR-DGA project ANR-12-ASTR-0035.

Additional information

Part of this work was done at the UCLA Mathematics Department.

Appendices

Annex 1: Proof of Lemma 1

For any \(x \in \mathbb {R}\), we have

Poisson’s formula (xxv) justifies (44). Indeed, consider

From the Cauchy–Schwarz inequality we have

Indeed, since \(u \in L^1(\mathbb {R})\) by Young’s inequality we have

because \( \alpha \in L^2(\mathbb {R})\) and \( u \in L^1(\mathbb {R})\). Since \(\gamma \in L^2(\mathbb {R})\), from (50), we deduce that \(f_x \in L^1(\mathbb {R})\). Moreover, we have

Since u is \([-\pi ,\pi ]\) band-limited and \(\gamma \) is \([-\pi ,\pi ]\) band-limited by its definition (19), we deduce that \(\hat{f}_x(\xi )=0\) for all \(\xi \in \mathbb {R}\) such that \(|\xi |>\pi \). Yet, from Poisson’s formula (xxv), this only implies that

From (51), \(f_x(y)\) is given by a convolution. Thus,

since \(\hat{u}\) is \([-\pi ,\pi ]\) band-limited. Since the integrand can be non-zero only on the set \(\{\pi \}\), which has Lebesgue measure zero, we deduce that \(\hat{f}_x(2\pi )=0\). Similarly, we obtain \(\hat{f}_x( -2\pi )=0\). The validity of (45)–(46) follows from the continuity of the \(L^2(\mathbb {R})\) function \(\mathbb {R}\ni x \mapsto \left[ \left( \frac{1}{|v|}\alpha \left( \frac{\cdot }{v}\right) *u\right) *\gamma \right] (x)\). Likewise, (47) follows from the inverse Fourier transform definition (xxiii). The definition (19) of \(\hat{\gamma }\) justifies (48). The fact that u is \([-\pi ,\pi ]\) band-limited, together with the definition (19) of \(\hat{\gamma }\), justifies (49) and completes our proof.

Annex 2: Proof of Lemma 2

We first prove (23) then prove that \(\mathrm {var}\left( {\widetilde{\mathbbm {u}}}_\mathrm{est,ana}(\cdot ) \right) \) can be written as the sum of an \(L^1(\mathbb {R})\) function and a constant. From Proposition 3, for any \(x\in \mathbb {R}\), we have

Therefore, combining Proposition 1 (Eq. (7)) and (52), for any \(x\in \mathbb {R}\), we have

and (23) is proved. As a consequence, the function \(\mathbb {R}\ni x \mapsto \mathrm {var}\left( {\widetilde{\mathbbm {u}}}_\mathrm{est,ana}(x) \right) \) can be written as

where \(f_{\text {ana}}(\cdot )\) is defined by (24). It remains to justify that \(f_{\text {ana}} \in L^1(\mathbb {R})\). We have

This concludes our proof.

Annex 3: Proof of Proposition 4

We first prove (26) then (27). From the numerical flutter shutter samples definition (Definition 6), since, by assumption, \(\mathbb {E}(\eta (n))=0\) we have

Thus,

and (26) is proved. We now prove (27). From Definition 6, since \(\mathrm {var}\left( \eta (n) \right) =\sigma ^2_r\) we have

and (27) is proved. This concludes our proof.
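The two facts this proof relies on, namely that a zero-mean additive noise leaves the expectation unchanged and that an independent noise of variance \(\sigma ^2_r\) adds \(\sigma ^2_r\) to the variance, can be checked numerically. The following is only a minimal sketch and not the sample model of Definition 6: it assumes a single Poisson count with a hypothetical intensity `lam`, a Gaussian readout noise (the paper only requires a finite variance), and a hypothetical value `sigma_r`.

```python
import numpy as np

rng = np.random.default_rng(0)

lam = 20.0             # hypothetical Poisson intensity (assumption)
sigma_r = 3.0          # hypothetical readout noise standard deviation (assumption)
n_samples = 1_000_000  # number of Monte Carlo draws

# Photon count: Poisson random variable with intensity lam.
photons = rng.poisson(lam, size=n_samples)

# Additive readout noise: zero mean, variance sigma_r**2.
# The Gaussian law is an assumption made only for this simulation.
readout = rng.normal(0.0, sigma_r, size=n_samples)

obs = photons + readout

sample_mean = obs.mean()
sample_var = obs.var()

# Expected values: the mean is lam (the noise has zero mean) and the
# variance is lam + sigma_r**2 (independent variances add).
expected_mean = lam
expected_var = lam + sigma_r**2

print(f"mean: {sample_mean:.3f} (expected {expected_mean:.3f})")
print(f"var : {sample_var:.3f} (expected {expected_var:.3f})")
```

The empirical mean and variance should agree with `lam` and `lam + sigma_r**2` up to Monte Carlo error, which shrinks as `n_samples` grows.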

Annex 4: Proof of Theorem 2

The proof follows the same path as the proof of Lemma 2 in Annex 2: we first justify that \(\mathrm {var}\left( {\widetilde{\mathbbm {u}}}_\mathrm{est,num}(x)\right) \) can be written as the sum of an \(L^1(\mathbb {R})\) function and a constant. We then prove the formula in Theorem 2.

From Proposition 5, we have that

where the last equality is justified by Proposition 4. The rest of the calculation is similar to (53)–(54) in Annex 2, and we obtain that

for any \(x \in \mathbb {R}\), where

The proof that \(f_{\text {num}} \in L^1(\mathbb {R})\) is identical to the proof that \(f_{\text {ana}} \in L^1(\mathbb {R})\) given in Annex 2. Indeed, \(\alpha \in L^1(\mathbb {R}) \cap L^2(\mathbb {R})\) and therefore \(\alpha ^2 \in L^1(\mathbb {R})\). We now justify the formula in Theorem 2. From Proposition 5, for any \(x\in \mathbb {R}\) we have \(\mathbb {E}({\widetilde{\mathbbm {u}}}_\mathrm{est,num}(x))=\widetilde{u}(x)\) and therefore \(\mathbb {E}|{\widetilde{\mathbbm {u}}}_\mathrm{est,num}(x) - \widetilde{u}(x) |^2=\mathrm {var}\left( {\widetilde{\mathbbm {u}}}_\mathrm{est,num}(x) \right) .\) Thus, by calculations similar to those leading from Lemma 2 to Theorem 1, we obtain

and Theorem 2 is proved.

Annex 5: Main Notations and Formulae

-

(i)

t time variable

-

(ii)

\(\Delta t\) length of a time interval (exposure time)

-

(iii)

\(x \in \mathbb {R}\) spatial variable

-

(iv)

\(X \sim Y\) means that the random variables X and Y have the same law

-

(v)

\(\mathbb {P}(A)\) probability of an event A

-

(vi)

\(\mathbb {E}(X)\) expected value of a random variable X

-

(vii)

\(\mathrm {var}(X)\) variance of a random variable X

-

(viii)

\(f *g\) convolution of two \(L^2 (\mathbb {R})\) functions \((f *g)(x) = \int _{\mathbb {R}} f(y) g(x-y) dy\) (the validity is discussed as soon as both functions are not in \(L^2(\mathbb {R})\))

-

(ix)

\(l(t,x)>0 ~ \forall ~ x \in \mathbb {R}^+ \times \mathbb {R}\) continuous landscape before passing through the optical system

-

(x)

\(\mathcal P(\lambda )\) Poisson random variable with intensity \(\lambda >0\)

-

(xi)

\(g\) point-spread-function of the optical system. Assumption \(g\in S(\mathbb {R})\) (\(S(\mathbb {R})\) denotes the Schwartz class on \(\mathbb {R}\))

-

(xii)

\(\sigma _o^2<+\infty \) variance of the thermal noise

-

(xiii)

\(\sigma _r^2<+\infty \) variance of the additive (sensor readout, quantization) noise

-

(xiv)

\(\mu <+\infty \) average mean of \(\widetilde{u}\) (see (xv))

-

(xv)

\(\widetilde{u}=\mathbbm {1}_{[-\frac{1}{2},\frac{1}{2}]}*g*l\) ideal observable landscape just before sampling. Assumption: \(\widetilde{u}\) \([-\pi ,\pi ]\) band-limited, \(\mu :=\lim _{T\rightarrow +\infty } \frac{1}{2T} \int _{-T}^T \widetilde{u}(x) \mathrm{d}x\) and \(u:=(\widetilde{u}-\mu ) \in L^1(\mathbb {R})\cap L^2(\mathbb {R})\)

-

(xvi)

\(obs(n)\), \(n \in \mathbb {Z}\) observation of the landscape at pixel n

-

(xvii)

\({\widetilde{\mathbbm {u}}}_\mathrm{est,ana}\) estimated landscape for the analog flutter shutter from the observed samples \(obs(n)\) where \(n \in \mathbb {Z}\). It is defined to have \(\mathbb {E}({\widetilde{\mathbbm {u}}}_\mathrm{est,ana}(x))=\widetilde{u}(x)\) for any \(x \in \mathbb {R}\).

-

(xviii)

\({\widetilde{\mathbbm {u}}}_\mathrm{est,num}\) estimated landscape for the numerical flutter shutter from the observed samples \(obs(n)\) where \(n \in \mathbb {Z}\). It is defined to have \(\mathbb {E}({\widetilde{\mathbbm {u}}}_\mathrm{est,ana}(x))=\widetilde{u}(x)\) for any \(x \in \mathbb {R}\).

-

(xix)

v relative velocity between the landscape and the camera (unit: pixels per second)

-

(xx)

\(\alpha (t)\) flutter shutter gain function.

-

(xxi)

\(\mathbbm {1}_{[a,b]}\) indicator function of an interval [a, b]

-

(xxii)

\( \Vert f \Vert _{L^1(\mathbb {R})}= \int _\mathbb {R}|f(x)| \mathrm{d}x\), \( \Vert f \Vert _{L^2(\mathbb {R})}= \sqrt{\int _\mathbb {R}|f(x)|^2 \mathrm{d}x}\)

-

(xxiii)

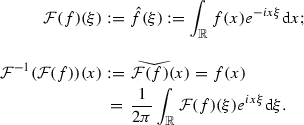

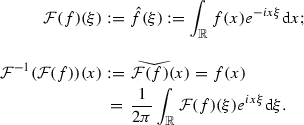

Let \(f,g \in L^1(\mathbb {R})\) or \(L^2(\mathbb {R})\), then

Moreover \(\mathcal {F}(f*g)(\xi )=\mathcal {F}(f)(\xi )\mathcal {F}(g)(\xi )\) and (Plancherel)

$$\begin{aligned} \int _{\mathbb {R}} |f(x)|^2dx= & {} \Vert f \Vert _{L^2(\mathbb {R})}^2=\frac{1}{2 \pi } \int _{\mathbb {R}} |\mathcal {F}(f)|^2(\xi ) \mathrm{d}\xi \nonumber \\&= \frac{1}{2\pi } \left\| \mathcal {F}(f) \right\| _{L^2(\mathbb {R})}^2. \end{aligned}$$(55) -

(xxiv)

\(\mathrm {sinc}(x)=\frac{\sin (\pi x)}{\pi x}=\frac{1}{2 \pi } \mathcal {F}(\mathbbm {1}_{[-\pi ,\pi ]})(x)=\mathcal {F}^{-1}(\mathbbm {1}_{[-\pi ,\pi ]})(x)\)

-

(xxv)

(Poisson summation formula) Let \(\varphi \in L^1(\mathbb {R})\) be \([-\pi ,\pi ]\) band-limited.

$$\begin{aligned} \sum _n \varphi (n)=\sum _k \hat{\varphi }(2k\pi ). \end{aligned}$$(56)Since \(\varphi \) is \([-\pi ,\pi ]\) band-limited \(\hat{\varphi }(\xi )=0\) \(\forall \xi \in \mathbb {R}\) such that \(|\xi |>\pi \), then from Poisson’s formula (xxv) Eq. (56) we have

$$\begin{aligned} \sum _n\varphi (n)=\hat{\varphi }(0)=\int _{\mathbb {R}}\varphi (x)\mathrm{d}x. \end{aligned}$$(57)This applies to any shift of \(\varphi \), so we also have

$$\begin{aligned} \sum _n\varphi (x+n)=\int _{\mathbb {R}}\varphi (x)\mathrm{d}x. \end{aligned}$$(58)
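The band-limited form of the Poisson summation formula, Eqs. (57)–(58), can be illustrated numerically. The sketch below is not taken from the paper: the test function \(\varphi (x)=\mathrm {sinc}(x/2)^2\) is a choice made here for illustration. It is in \(L^1(\mathbb {R})\), its Fourier transform is a triangle supported on \([-\pi ,\pi ]\), and its integral over \(\mathbb {R}\) equals 2. The helper name `shifted_sample_sum` and the truncation bound `n_max` are likewise assumptions of this example.

```python
import numpy as np


def phi(x):
    """Test function sinc(x/2)^2, band-limited to [-pi, pi].

    np.sinc(x) computes sin(pi x) / (pi x), i.e. the convention of (xxiv).
    """
    return np.sinc(x / 2.0) ** 2


def shifted_sample_sum(x, n_max=200_000):
    """Truncated version of sum_n phi(x + n) over n = -n_max, ..., n_max."""
    n = np.arange(-n_max, n_max + 1)
    return float(np.sum(phi(x + n)))


# Closed-form value of the integral of phi over R (Fourier transform at 0).
INTEGRAL = 2.0

for shift in (0.0, 0.3, 0.5):
    total = shifted_sample_sum(shift)
    print(f"shift = {shift:.1f}: sum = {total:.6f}, integral = {INTEGRAL:.6f}")
```

Because the terms decay like \(1/n^2\), the truncated sums only approach the integral as `n_max` grows; with the default value the discrepancy is of the order of \(10^{-6}\), for every shift, as (58) predicts.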

Cite this article

Tendero, Y., Morel, JM. On the Mathematical Foundations of Computational Photography. J Math Imaging Vis 54, 378–397 (2016). https://doi.org/10.1007/s10851-015-0609-5

DOI: https://doi.org/10.1007/s10851-015-0609-5