Abstract

In data envelopment analysis, we are often puzzled by the large difference between the constant-returns-scale and variable returns-to-scale scores, and by the convexity production set syndrome in spite of the S-shaped curve, often observed in many real data sets. In this paper, we propose a solution to these problems. Initially, we evaluate the constant-returns-scale and variable returns-to-scale scores for all decision-making units by means of conventional methods. We obtain the scale-efficiency for each decision-making unit. Using the scale-efficiency, we decompose the constant-returns-scale slacks for each decision-making unit into scale-independent and scale-dependent parts. Following this, we eliminate scale-dependent slacks from the data set, and thus obtain a scale-independent data set. Next, we classify decision-making units into several clusters, depending either on the degree of scale-efficiency or on some other predetermined characteristics. We evaluate slacks of scale-independent decision-making units within the same cluster using the constant-returns-scale model, and obtain the in-cluster slacks. By summing the scale-dependent and the in-cluster slacks, we define the total slacks for each decision-making unit. Following this, we evaluate the efficiency score of the decision-making unit and project it onto the efficient frontiers, which are no longer guaranteed to be convex and are usually non-convex. Finally, we define the scale-dependent data set by which we can find the scale elasticity of each decision-making unit. We apply this model to a data set of Japanese universities’ research activities.

Similar content being viewed by others

1 Introduction

In data envelopment analysis (DEA), we are often puzzled by the large difference between the constant-returns-scale (CRS) score and the variable returns-to-scale (VRS) score.Footnote 1 Several authors (Avkiran [1], Avkiran et al. [2], Bogetoft and Otto [3], among others) have proposed solutions for this problem. In this paper, we propose a different approach to solving this problem, and present our results. A further problem, which is closely related to the problem mentioned above, is the conventional convex production possibility set assumption. Several researchers have discussed non-convex production possibility issues (Dekker and Post [4], Kousmanen [5], Podinovski [6], Olesen and Petersen [7], among others). Among them, we refer two relevant articles. Dekker and Post [4] extended the standard concave efficient frontier assumption in DEA to the quasi-concavity model. However, this quasi-concavity assumption is not always work for identification of frontiers associated with real world problems. Our approach is more general in dealing with the non-convex issue. Kousmanen [5] utilized Disjunctive Programming for identification of conditionally convex production set. He characterizes various reference technologies by setting irrelevant intensity values to zero, while keeping the convexity condition in the chosen technology. His method is enumerative in the sense that the number of reference technologies is combinatorial. Our method differs from his in (a) introduction of clusters instead of enumeration, and (b) relaxation of the convexity condition on the intensity vector. As far as we know, no paper has discussed these subjects in the scale and cluster related context.

In this paper, we discuss the above two fundamental problems of DEA.

A further objective of this paper is measurement of the scale elasticity of production. Most prior research into this subject has been based on the convex production possibility set assumption. We propose a new scheme for evaluation of the scale elasticity within the specific cluster containing each individual decision-making unit (DMU).

We refer to Farrell’s seminal papers (Farrell [8], Farrell and Fieldhouse [9]) for discussion of the case of economies and diseconomies of scale. Charnes et al. [10] extended Farrell’s work for evaluation of the program and the managerial efficiency of the program follow through experiments, where program follow through (PFT) and non-follow through (NFT) were treated as two separate clusters. Førsund et al. [11] revisited Farrell [8] and Farrell and Fieldhouse [9]. They pointed out that Farrell and Fieldhouse’s grouping method creates efficient frontiers of each group. They also generalized this idea to multiple outputs and tried to represent graphically frontier functions, where the EffiVision (Krivonozhko et al. [12]) was utilized. They discussed several economic concepts through this visualization.

Considering the scope of these previous works, the novel features of this paper are as follows:

-

(a)

We extend Farrell’s approach to discriminate scale-merits and scale-demerits, by utilising scale-efficiency. We decompose slacks of each DMU into scale-dependent and scale-independent parts;

-

(b)

We extend the clustering approach of Charnes et al. [10], by coupling the approach with scale-merits and scale-demerits. Thus, we can find non-convex frontiers;

-

(c)

Although non-convex frontiers can be identified by the free disposal hull (FDH) model (Deprins et al. [13]), this model is a discrete model in the sense that elements of the intensity vector are binary. In addition, scale-effects are not involved. Our model permits continuous intensity vectors and can find non-convex frontiers by means of the clusters.

This paper is structured as follows. In Sect. 2, we describe decomposition of the CRS slacks after introducing basic notations, and define the scale-independent data set. In Sect. 3, we introduce clusters and define the scale and cluster adjusted score (SAS). In Sect. 4, we explain our scheme using an artificial example. We develop the radial model case in Sect. 5. In Sect. 6, we define the scale elasticity based on the scale-dependent data set. A typical application, in this case concerning Japanese National University research outputs, follows in Sect. 7, and the last section concludes this paper.

2 Global Formulation

In this section, we introduce notation and basic tools, and discuss decomposition of the slacks. Throughout Sects. 2– 4, we utilize the input-oriented slacks-based measure (SBM) (Tone [14]), a non-radial model, for explanation of our model.

2.1 Notation and Basic Tools

Let the input and output data matrices be, respectively:

where \(m\), \(s\) and \(n\) are the number of inputs, outputs and DMUs, respectively. We assume that the data are positive, \(i.e.\), \({\mathbf {X}}>{\mathbf {0}}\) and \({\mathbf {Y}}>{\mathbf {0}}\).

Then, the production possibility set for the CRS and VRS models, respectively, are defined by:

where \({\mathbf {x}}(>{\mathbf {0}})\in \text{ IR }^m\), \({\mathbf {y}}(>{\mathbf {0}})\in \text{ IR }^s\) and \({\varvec{\lambda }}(\ge {\mathbf {0}})\in \text{ IR }^n\)are, respectively, the input, output and intensity vectors, and \({\mathbf {e}} \in \text{ IR }^{n}\) is the row vector with all elements equal to 1.

The input-oriented slacks-based measure for evaluation of the efficiency of each DMU \(({\overline{\mathbf {x}}}_k ,{\overline{\mathbf {y}}}_k )\;(k=1,\ldots ,n)\), regarding the CRS and VRS models, are as follows:

where \({\varvec{\lambda }}\) is the intensity vector, and \({\mathbf {s}}^-\) and \({\mathbf {s}}^+\) are the input- and output-slacks, respectively. Although we present our model in the input-oriented SBM model, we can also develop the model in the output-oriented and non-oriented SBM models, as well as in the radial models.

Although we utilise the scale-efficiency, CRS/VRS, as an index of the scale-merits and scale-demerits, we can make use of other indices, that are appropriate for discriminating handicaps due to scale. However, the index must be normalised between 0 and 1, with a larger value indicating a better scale condition.

2.2 Uniqueness of Slacks

Although CRS and VRS scores are determined uniquely, their slacks are not always unique in the SBM model. We can resolve this problem as follows:

-

(a)

Priority We determine the priority (importance) of the input factors. For example, the most cost-influential input factor will be identified to the first priority, because its reduction in the slack is most recommended. The second and others follow in this way;

-

(b)

Multi-objective programming for the determination of slacks according to the priority We assume the priority is \(s_1^-,s_2^-,\ldots ,s_m^- \) in this order. We maximise slacks \(s_1^-,s_2^-,\ldots ,s_m^- \) using the multi-objective programming framework below.

$$\begin{aligned} \begin{array}{l} \mathop {\max }\limits _{{\varvec{\lambda }},{\mathbf {s}}^-,{\mathbf {s}}^+} ({\mathbf {s}}_1^-,s_2^-,\ldots ,s_m^- ) \\ \text{ s.t }.\quad \frac{1}{m}\sum \nolimits _{i=1}^m {\frac{s_i^- }{x_{ik} }} =1-\theta _k^{\mathrm{{CRS}}} ,{\mathbf {X}}{\varvec{\lambda }}+{\mathbf {s}}^-={\mathbf {x}}_k ,{\mathbf {Y}}{\varvec{\lambda }}-{\mathbf {s}}^+={\mathbf {y}}_k,{\varvec{\lambda }}\ge {\mathbf {0}},{\mathbf {s}}^-\ge {\mathbf {0}},{\mathbf {s}}^+\ge {\mathbf {0}}.\\ \end{array} \end{aligned}$$(7)This notation indicates that we first maximize \(s_1^- \) subject to (7). Then, fixing \(s_1^- \) at the optimal vale, we maximize \(s_2^- \) subject to (7). We repeat this process until \(s_{m-1}^- \).

For the VRS model (4), we can determine the slacks uniquely using the above procedure by including the convexity constraint \({\mathbf {e}}{\varvec{\lambda }}=1.\)

2.3 Decomposition of CRS Slacks

We decompose CRS slacks into scale-independent and scale-dependent parts as follows:

If DMU\(_{k}\) satisfies \(\sigma _k =1\) (the so called “most productive scale size”), all its slacks are attributed to scale-independent slacks. However, if \(\sigma _k <1\), the slacks are decomposed into the scale-independent part and the scale-dependent part as follows:

2.4 Scale-Independent Data Set

We define the scale-independent data \(({\overline{\mathbf {x}}}_k ,{\overline{\mathbf {y}}}_k )\;\;(k=1,\ldots ,n)\)by subtracting and adding the scale-dependent slacks as follows:

This process is illustrated in Fig. 1. The scale-independent data set \((\overline{\mathbf {X}} ,\overline{\mathbf {Y}})\) is defined by:

Let us define the production possibility sets \(P({\mathbf {X}},{\mathbf {Y}})\) and \(P(\overline{\mathbf {X}} ,\overline{\mathbf {Y}} )\) for \(({\mathbf {x}}_j, {\mathbf {y}}_j )\) and \(({\overline{\mathbf {x}}}_j,{\overline{\mathbf {y}}}_j)\;(j=1,\ldots ,n)\), respectively, as follows:

Lemma 2.1

\(P({\mathbf {X}},{\mathbf {Y}})=P(\overline{\mathbf {X}},\overline{\mathbf {Y}} ).\)

Proof

We define the scale-independent DMU, \(({\overline{\mathbf {x}}}_j ,{\overline{\mathbf {y}}}_j )\;(j=1,\ldots ,n)\), by:

If \(\sigma _j =1\), then we have \({\overline{\mathbf {x}}}_j ={\mathbf {x}}_j \) and \({\overline{\mathbf {y}}}_j ={\mathbf {y}}_j \). If \(\sigma _j <1\), then:

where \(({\mathbf {x}}_j -{\mathbf {s}}_j^{-*},{\mathbf {y}}_j +{\mathbf {s}}_j^{+*} )\) is the projection of \(({\mathbf {x}}_j ,{\mathbf {y}}_j )\) onto the \(P\)(X, Y) frontiers. Thus \(({\overline{\mathbf {x}}}_j,{\overline{\mathbf {y}}}_j )\;(j=1,\ldots ,n)\) belongs to \(P\)(X,Y). Hence, efficient frontiers are common to \(P\)(X,Y) and \(P(\overline{\mathbf {X}},\overline{\mathbf {Y}} )\). \(\square \)

3 In-Cluster Issue: Scale and Cluster adjusted DEA Score

In this section, we introduce the cluster of DMUs and define the scale and cluster adjusted score (SAS).

3.1 Cluster

We classify DMUs into several clusters depending on their characteristics. The clusters can be determined using a clustering method in statistics appropriate to the problem concerned, or supplied exogenously (see Sect. 7 for an example).

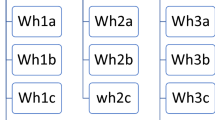

Farrell and Fieldhouse [9] used the grouping method for their study on farm survey data for England and Wales 1952–1953. They divided all observations (208) into 10 groups (clusters) according to output (gross sales). It certainly depends on adequate data sets with sufficient observations in each size group. See Fig. 2 for an illustration where 3 clusters are exhibited depending on the output size (a variation of Fig. 1 in Førsund et al. [11]).

Environmental factors can be utilized for classification in addition to input/output factors. Charnes et al. [10] divided DMUs into two groups by PFT and NFT properties. Several authors discussed environmental factors, e.g., Avkiran [15], Paradi et al. [16], Cook [17] among others.

However, if the above clustering methods are unavailable, clusters can be determined \(a\) posteriori depending on the degree of scale-efficiency. An example of the latter case can be achieved, as a supplementary tool, as described in Appendix A.

Since both clusters and scale-efficiencies critically affect the results of the proposed scheme, we need deliberate handlings on these subjects referring to the above literatures, and need to try many clustering cases to attain a reasonable conclusion.

We denote the name of the cluster containing DMU\(_{j}\) as Cluster(\(j)\), where \((j=1,\ldots ,n)\).

3.2 Solving the CRS Model in the Same Cluster

We solve the CRS model for each DMU \(({\overline{\mathbf {x}}}_k ,{\overline{\mathbf {y}}}_k )\;(k=1,\ldots ,n)\), referring to the \((\overline{\mathbf {X}},\overline{\mathbf {Y}} )\) in the same Cluster (\(k)\). The solution is formulated as follows:

We denote optimal in-cluster slacks by \(({\mathbf {s}}_k^{\mathrm{{cl}}-*} ,{\mathbf {s}}_k^{\mathrm{{cl}}+*} )\).Footnote 2 By summing the scale-dependent slacks and the in-cluster slacks, we define the total number of slacks as:

The scale and cluster adjusted data \((\overline{{\overline{{\mathbf {x}}}}}_k ,\overline{{\overline{{\mathbf {y}}}}}_k )\) (projection) is defined by:

Figure 3 illustrates the scale and cluster adjusted input.

Up to this point, we have removed scale-demerits and in-cluster slacks from the data set. Thus, we have obtained the scale-free and in-cluster slacks-free (projected) data set:

3.3 Scale and Cluster Adjusted Score (SAS)

In the input-oriented case, the SAS is defined by:

The reason we utilise the above scheme is as follows. First, we wish to eliminate scale-demerits from the CRS slacks. For this purpose, we decompose the CRS slacks into scale-dependent and scale-independent parts, by recognising that scale demerits are represented by \(1-\sigma _k \). If \(\sigma _k \)=1, the DMU has no scale-demerits, and its slacks are attributed to itself. If \(\sigma _{k}\) =0.25, 75 % of the slacks are attributed to the DMU’s scale-demerits. After removing the scale-dependent slacks, we evaluate the DMU within its cluster and determine the in-cluster slacks. If the DMU is efficient within its cluster, its in-cluster slacks are zero, while, if it is inefficient, the DMU has in-cluster slacks against the efficient DMUs. Finally, we sum the in-cluster and scale-dependent slacks to obtain the total number of slacks. Using the total slacks value determined, we define the SAS.

Proposition 3.1

SAS is not less than the CRS score.

Furthermore, if all DMUs belong to the same single cluster, it holds that \(\theta _k^{\mathrm{{SAS}}} =\theta _k^{\mathrm{{CRS}}} \;(\forall k).\) This implies that no non-convex frontiers exist when all DMUs belong to the same cluster.

Proposition 3.2

If \(\theta _k^{\mathrm{{CRS}}} =1\), then it holds that \(\theta _k^{\mathrm{{SAS}}} =\theta _{k}^{\mathrm{{CRS}}} \), but not vice versa.

Proposition 3.3

SAS decreases with increasing input and decreasing output, so long as both DMUs remain in the same cluster.

Proposition 3.4

The projected DMU \((\overline{{\overline{{\mathbf {x}}}}}_k ,\overline{{\overline{{\mathbf {y}}}}}_k )\) is efficient under the SAS model among the DMUs in the cluster it belongs to. It is also CRS and VRS efficient among the DMUs in its cluster.

Proofs for these propositions are given in Appendix B.

3.4 Summary of the SAS Computation

We summarise the SAS computation as follows:

-

Step 1. Input data (X, Y, Cluster). Cluster can be supplied exogenously by some clustering methods, including experts’ knowledge, or determined internally depending on the degree of scale-efficiency;

-

Step 2. For \(k=1,\ldots ,n,\) solve (3) and (4) to obtain the CRS and VRS scores, \(\theta _k^{\mathrm{{CRS}}} \) and \(\theta _k^{\mathrm{{VRS}}} \), respectively. Define the scale-efficiency \(\sigma _k :=\theta _k^{\mathrm{{CRS}}} /\theta _k^{\mathrm{{VRS}}} \);

-

Step 3. Using the optimal slacks \(({\mathbf {s}}_k^{-*},{\mathbf {s}}_k^{+*} )\) for the CRS model, define the scale-dependent slacks as \(((1-\sigma _k ){\mathbf {s}}_k^{-*},(1-\sigma _k ){\mathbf {s}}_k^{+*} );\)

-

Step 4. Define the scale-independent data set \((\overline{\mathbf {X}},\overline{\mathbf {Y}} ):=\left\{ {({\overline{\mathbf {x}}}_k,{\overline{\mathbf {y}}}_k )\left| {k=1,\ldots ,n} \right. } \right\} \) by:

$$\begin{aligned}&\text{ Scale-independent } \text{ input } {\overline{\mathbf {x}}}_k :={\mathbf {x}}_k -(1-\sigma _k ){\mathbf {s}}_k^{-*} ,\\&\text{ Scale-independent } \text{ output } {\overline{\mathbf {y}}}_k :={\mathbf {y}}_k +(1-\sigma _k ){\mathbf {s}}_k^{+*} ; \end{aligned}$$ -

Step 5. Solve the CRS model (15) for each \(({\overline{\mathbf {x}}}_k ,{\overline{\mathbf {y}}}_k )\) referring to the \((\overline{\mathbf {X}},\overline{\mathbf {Y}} )\) in the same cluster (\(k)\), and obtain the optimal in-cluster slacks \(({\mathbf {s}}_k^{\mathrm{{cl}}-*} ,{\mathbf {s}}_k^{\mathrm{{cl}}+*} )\);

-

Step 6. Define the SAS by:

$$\begin{aligned} \theta _k^\mathrm{{SAS}} :=1-\frac{1}{m}\sum \nolimits _{i=1}^m {\frac{s_{ik}^{\mathrm{{cl}}-*} +(1-\sigma _k )s_{ik}^{-*} }{x_{ik} }} ; \end{aligned}$$ -

Step 7. Obtain the scale and cluster adjusted input and output (projection) \((\overline{{\overline{{\mathbf {x}}}}}_k,\overline{{\overline{{\mathbf {y}}}}}_k )\) by:

$$\begin{aligned} \overline{{\overline{{\mathbf {x}}}}}_k :={\mathbf {x}}_k -(1-\sigma _k ){\mathbf {s}}_k^{-*} -{\mathbf {s}}_k^{\mathrm{{cl}}-*} \text{, }\;\overline{{\overline{{\mathbf {y}}}}}_k :={\mathbf {y}}_k +(1-\sigma _k ){\mathbf {s}}_k^{+*} +{\mathbf {s}}_k^{\mathrm{{cl}}+*} ; \end{aligned}$$ -

Step 8. We define the scale and cluster adjusted dataset by \((\overline{\overline{\mathbf {X}}},\overline{\overline{\mathbf {Y}}} ):=\left\{ {(\overline{{\overline{{\mathbf {x}}}}}_j ,\overline{{\overline{{\mathbf {y}}}}}_j )\left| {j=1,\ldots ,n} \right. } \right\} .\)

3.5 Global Characterisation of SAS-projected DMUs

From Proposition 3.4, the SAS-projected \((\overline{{\overline{{\mathbf {x}}}}}_k ,\overline{{\overline{{\mathbf {y}}}}}_k )\) is positioned on the convex frontier within its containing cluster. We can determine whether or not it is located on the global convex frontier by solving the following LP.

-

(a)

If this program is infeasible, then there is no supporting hyperplane to \(( {\overline{\overline{\mathbf {X}}},\overline{\overline{\mathbf {Y}}} })\) at \((\overline{{\overline{{\mathbf {x}}}}}_k,\overline{{\overline{{\mathbf {y}}}}}_k )\)which is located on the globally non-convex frontiers;

-

(b)

If this program is feasible, let an optimal solution be \(( {u_0^*,{\mathbf {v}}^*,{\mathbf {u}}^*})\), with \(u_0^*=\underline{u}_0 (\text{ or } \bar{u}_0 )\). Then, the hyperplane \(-{\mathbf {v}}^*{\mathbf {x}}+{\mathbf {u}}^*{\mathbf {y}}-u_0^*=0\) is a supporting hyperplane to \(( {\overline{\overline{\mathbf {X}}},\overline{\overline{\mathbf {Y}}} })\) at \((\overline{{\overline{{\mathbf {x}}}}}_k,\overline{{\overline{{\mathbf {y}}}}}_k )\). Hence, \((\overline{{\overline{{\mathbf {x}}}}}_k,\overline{{\overline{{\mathbf {y}}}}}_k )\)is located on the convex frontiers of the SAS projected DMUs. Furthermore, we can characterize some of the convex frontiers specifically;

-

(c)

If there is a vector \({\mathbf {v}}\ge {\mathbf {0}}\) such that \({\mathbf {v}}\overline{{\overline{{\mathbf {x}}}}}_k =1\) and \({\mathbf {v}}\overline{{\overline{{\mathbf {x}}}}}_j \ge 1\;(\forall j)\), then \((\overline{{\overline{{\mathbf {x}}}}}_k,\overline{{\overline{{\mathbf {y}}}}}_k )\) is located on the boundary of \(( {\overline{\overline{\mathbf {X}}},\overline{\overline{\mathbf {Y}}} })\) and has a supporting hyperplane which is vertical to the input axes.

-

(d)

If there is a vector \({\mathbf {u}}\ge {\mathbf {0}}\) such that \({\mathbf {u}}\overline{{\overline{{\mathbf {y}}}}}_{{\mathbf {k}}} =1\) and \({\mathbf {u}}\overline{{\overline{{\mathbf {y}}}}}_j \le 1\;(\forall j)\), then \((\overline{{\overline{{\mathbf {x}}}}}_k,\overline{{\overline{{\mathbf {y}}}}}_k )\) is located on the boundary of \(( {\overline{\overline{\mathbf {X}}},\overline{\overline{\mathbf {Y}}} })\) and has a supporting hyperplane which is horizontal to the input axes.

-

(e)

If \((\overline{{\overline{{\mathbf {x}}}}}_k,\overline{{\overline{{\mathbf {y}}}}}_k )\) satisfies the above two conditions (c) and (d), then \((\overline{{\overline{{\mathbf {x}}}}}_k,\overline{{\overline{{\mathbf {y}}}}}_k )\) is located on the corner of \(( {\overline{\overline{\mathbf {X}}},\overline{\overline{\mathbf {Y}}} })\) and has supporting hyperplanes which are vertical or horizontal to the input axes.

We note that the difference between the VRS score and the SAS score is unrelated to the global characteristics of SAS projected DMU.

4 An Illustrative Example

In this section, we present an artificial example with a single input and a single output. Table 1 shows 10 DMUs with input x and output y, while Fig. 4 gives a graphical interpretation of the same data. These DMUs display a typical S-shaped curve.

Data plot of DMUs defined in Table 1

Initially, we solved the input-oriented CRS and VRS models, and obtained the scale-efficiency and CRS slacks, which were then decomposed into scale-independent and scale-dependent parts. Table 2 shows the solutions and the decomposed parts. Since the output, \(y\), has no slacks in this example, they are not included in the table.

In the second phase, we deleted the scale-dependent slacks from the data and obtained the data set \((\overline{{\mathbf {X}},} \overline{\mathbf {Y}} )\). We solved the CRS model within each cluster and determined the in-cluster slacks. By summing the scale-dependent slacks and the in-cluster slacks, we obtained the total slacks. Table 3 shows the data obtained.

Finally, we computed the adjusted score, \(\theta ^{\mathrm{{SAS}}}\), and the projected input and output. These values are given in Table 4, while Fig. 5 displays the results graphically. In the table, “Frontier” indicates the global characteristics of the SAS projected DMUs, identified using (20).

In Table 5 and Fig. 6, we compare the input-oriented CRS score, VRS score and SAS. The SAS of DMUs C and D have larger values than the scores given by the VRS model. This reflects the non-convex characteristics of the data set. The projected frontiers are a mixture of non-convex and convex parts.

5 The Radial Model Case

In this section, we develop our model to the radial models. We utilize the input-oriented radial measures: CCR (Charnes et al. [18]) and BCC (Banker et al. [19]) models, for the efficiency evaluation of each DMU \(({\overline{\mathbf {x}}}_k,{\overline{\mathbf {y}}}_k )\;(k=1,\ldots ,n)\) as follows:

where \({\varvec{\lambda }}\in \text{ IR }^n\) is the intensity vector.

Although we present our model in the input-oriented radial model, we can develop the model in the output-oriented radial model as well.

We define the scale-efficiency (\(\sigma _k )\) of DMU\(_{k}\) by

5.1 Decomposition of CCR Slacks

We decompose CRS score into scale-independent and scale-dependent parts as follows:

The radial input-slack can be defined as;

We decompose this radial input-slack into scale-dependent and scale-independent slacks as;

5.2 Scale Adjusted Input and Output

We define scale adjusted input \({\overline{\mathbf {x}}}_k \) and output \({\overline{\mathbf {y}}}_k \) by

We define the scale-adjusted score by

We have the following propositions.

Proposition 5.1

Proposition 5.2

Proofs for these propositions are given in Appendix B.

5.3 Solving the CCR Model in the Same Cluster

We introduce the clusters of DMUs in the same manner as mentioned in the non-radial (SBM) case. We solve the input oriented CCR model for each DMU \(({\overline{\mathbf {x}}}_k,{\overline{\mathbf {y}}}_k )\;(k=1,\ldots ,n)\) referring to the \((\overline{\mathbf {X}} ,\overline{\mathbf {Y}})\) in the same Cluster (\(k)\), which can be formulated as follows:

Scale-cluster adjusted data (projection) \((\overline{{\overline{{\mathbf {x}}}}}_k ,\overline{{\overline{{\mathbf {y}}}}}_k )\) is defined by:

Up to this point, we deleted scale demerits and in-cluster slacks from the data set. Thus, we have obtained a scale free and in-cluster slacks free (projected) data set \((\overline{\overline{\mathbf {X}}},\overline{\overline{\mathbf {Y}}} ).\)

5.4 Scale and Cluster Adjusted Score (SAS)

In the input-oriented case, the scale and cluster adjusted score (SAS) is defined by

In this case, SAS is the product of the in-cluster efficiency and the scale adjusted score. This differs from the definition of SAS in the non-radial case (18), where SAS is defined using the sum of scale-dependent slacks and in-cluster slacks.

Similarly to Propositions 3.1–3.4, we have the following propositions.

Proposition 5.3

The scale and cluster adjusted score (SAS) is not less than the CCR score.

Proposition 5.4

If \(\theta _k^{\mathrm{{CCR}}} =1\), then it holds \(\theta _k^{\mathrm{{SAS}}} =\theta _k^{\mathrm{{CCR}}} \), but not vice versa.

Proposition 5.5

The scale and cluster adjusted score (SAS) is decreasing in the increase of input and in the decrease of output so long as the both DMUs remain in the same cluster.

Proposition 5.6

The SAS-projected DMU \((\overline{{\overline{{\mathbf {x}}}}}_k ,\overline{{\overline{{\mathbf {y}}}}}_k )\) is radially efficient under the SAS model among the DMUs in the cluster it belongs to. It is also CCR and BCC efficient among the DMUs in its cluster.

6 Scale-Dependent Data Set and Scale Elasticity

Thus far, we have discussed the efficiency score considerations of our proposed scheme. In this section, we deal with the consideration of scale elasticity. Many papers have discussed this subject under the guise of the globally convex frontier assumption (Banker and Thrall [20], Banker et al. [21], Färe and Primond [22], Førsund and Hjalmarsson [23], Førsund and Hjalmarsson [24], Kousmanen [5], Olesen and Petersen [7], Podinovski [6]). However, in the case of non-convex frontiers, we believe there is a need for further research. Based on the decomposition of the CRS slacks mentioned in Sect. 2, we have developed a new scale elasticity that can cope with non-convex frontiers.

6.1 Scale-dependent Data Set

We subtract or add scale-independent slacks from the data set, and thus define the scale-dependent data set \(({\hat{\mathbf {x}}}_k ,{\hat{\mathbf {y}}}_k )\):

Figure 7 illustrates an example of this scheme.

We define the scale-dependent set \(({{\hat{\mathbf {X}}},{\hat{\mathbf {Y}}}}):=\left\{ {( {{\hat{\mathbf {x}}}_k,{\hat{\mathbf {y}}}_k \left| {k=1,\ldots ,n} \right. })}\right\} \).

We first project \(({\hat{\mathbf {x}}}_k,{\hat{\mathbf {y}}}_k )\) onto the VRS frontier of (\({\hat{\mathbf {X}}},{\hat{\mathbf {Y}}})\) in the same cluster. Thus, we denote the projection \(({\hat{\mathbf {x}}}_k^{\text{ Proj }} ,{\hat{\mathbf {y}}}_k^{\text{ Proj }} )\):

6.2 Scale Elasticity

The scale elasticity, or “degree of scale economies”, is defined as the ratio of marginal product to average product. In a single input/output case, if the output, \(y\), is produced by the input, \(x\), we define the scale elasticity by:

For the case with multiple input–output environments, the scale elasticity is determined by solving linear programs related to the supporting hyperplane at the respective efficient point (Cooper et al. [25], pp. 147–149).

The projection set (\({\hat{\mathbf {X}}}^{\text{ Proj }},{\hat{\mathbf {Y}}}^{\text{ Proj }})\) defined above has at least convex frontiers within each cluster; we can find a supporting hyperplane at \(({\hat{\mathbf {x}}}_k^{\text{ Proj }} ,{\hat{\mathbf {y}}}_k^{\text{ Proj }})\) that supports all projected DMUs in the cluster and has the minimum deviation \(t\) from the cluster. This scheme can be formulated as follows:

Let the optimal \(u_{0}\) be \(u_0^*\). We define the scale elasticity of DMU \(({\mathbf {x}}_{k},{\mathbf {y}}_{k})\) by:

If \(u_o^*\) is not uniquely determined, we check its minimum and maximum while keeping \(t\) at the optimum value.

The reason behind applying the scheme outlined above is as follows:

-

(a)

Conventional methods assume a global convex production possibility set to identify the RTS characteristics of each DMU. However, as we observed, the data set does not always exhibit convexity. Moreover, the RTS property is a local property, but is not global, as indicated by (37). Hence, we investigate this issue within the individual cluster containing the DMU, after deleting the scale-independent slacks;

-

(b)

Conventional methods usually find multiple optimum values of \(u_0^*\), and there may be a large difference between the minimum and maximum values. The scale elasticity \(\varepsilon _k \) defined above remains between the minimum and maximum, but has a much smaller range of allowed values.

7 Application to a Data Set Concerning Japanese National Universities

In this section, we apply our scheme to a data set comprising research output information from the Faculties of Medicine within 37 Japanese National Universities.

7.1 Data

Table 6 shows the data set concerning the research output of Japanese National Universities with a Faculty of Medicine in 2008 (Report by the Council for Science and Technology Policy, Japanese Government, 2009). We chose two inputs: (I) subsidy and (I) no. of faculty, and three outputs: (O) no. of publications; (O) no. of JSPS (Japan Society for Promotion of Sciences) funds and (O) no. of funded research projects. Since there are large differences in size between the 37 universities, we classified them into four clusters, A, B, C and D, determined by the sum of the no. of JSPS funds and the no. of funded research projects. Cluster A is defined as the set of universities with a sum larger than 2000, cluster B between 2000 and 1000, cluster C between 1000 and 500, and cluster D less than 500. The average value for each cluster is: 3225 for A; 1204 for B; 653 for C and 348 for D. Determination of the effect of size is one of the objectives of this application.

Figure 8 shows the 37 universities considering the no. of faculty (input) and the no. of publications (output). Globally non-convex characteristics are observed. A large difference is observed between the big seven universities (A) and the other universities (B, C and D). We can observe similar tendencies when considering other inputs and outputs.

7.2 Adjusted Score (SAS)

Table 7 compares the CRS scores, VRS scores and SAS for this data by means of the non-radial model, and Fig. 9 displays the results graphically. In the table, “Frontier” indicates the global characterization of SAS-projected DMUs obtained by (22) with abbreviations H=horizontal, C=convex, N=non-convex and V=vertical.

The SAS of B1, B2 and B3 are much larger than the VRS score, demonstrating the non-convex structure of the data set. Universities in Cluster A are judged to be almost efficient when considering the adjusted scores. Table 8 summarises averages of CRS scores, VRS scores and SAS for each cluster. For cluster A universities, differences between the three scores are small, and these universities have the highest scores for each model. For cluster B universities, the average SAS is larger than the average VRS score. This indicates the existence of non-convex frontiers around cluster B universities. For cluster C universities, the discrepancy between the CRS and VRS scores becomes large, and the average SAS is between these values, but closer to the VRS score. For cluster D universities, the discrepancy between scores becomes largest, indicating the smallest scale-efficiency. SAS are positioned almost equally spaced between CRS and VRS scores. The average SAS decreases monotonically from cluster A through to cluster D.

7.3 Scale Elasticity

Table 9 shows the scale elasticity, \(\varepsilon \), calculated using (39).

We observe that, for cluster A universities, the scale elasticity is almost unity, with a maximum value of 1.0669 and a minimum value of 0.961. This cluster exhibits constant returns-to-scale. Clusters B, C and D universities have an elasticity value higher than unity, and the average elasticity increases from cluster B through to cluster D. These universities have increasing returns-to-scale characteristics.

8 Conclusions

Most DEA models assume convex efficient frontiers for evaluation of DEA scores. However, in real world situations, there exist non-convex frontiers which cannot be identified by the traditional models. Non-convex frontiers result from many factors, e.g., region, environment, ownership, size of enterprise, category of business, age, and so forth. If we categorize DMUs into several classes by these factors and evaluate efficiencies within each class, the scores gained from such classification are local and we cannot obtain the global (overall) measurement of their performance.

We have developed a scale and cluster adjusted DEA model assuming scale-efficiency and clustering of DMUs. The scale and cluster adjusted score (SAS) reflects the inefficiency of the DMUs after removing the inefficiencies caused by scale-demerits and accounting for in-cluster inefficiency. This model can identify non-convex (S-shaped) frontiers reasonably. We also proposed a new scheme for the evaluation of scale elasticity. We applied this model to a data set comprising the research input and output of Japanese universities.

The major implications of this study are as follows:

-

(1)

By using this model, we become free from the big differences typically observed between CRS and VRS scores. Many practitioners are puzzled which one is to be applied to their problems. Our approach will be a help on this problem when several clusters exist. Hence, the use of DEA becomes more convenient and simple;

-

(2)

We do not require any statistical tests on the range of the intensity vector \(\varvec{\lambda }\);

-

(3)

The model can cope with non-convex frontiers, e.g., S-shaped curves. In such cases, it is observed that even the VRS scores could become too stringent to be applied in the case of some DMUs.

Although we presented the scheme in input-oriented form, we can extend it to output-oriented and non-radial non-oriented (both-oriented) models as well as to directional distance models.

The main purpose of this paper was to introduce a DEA model that can cope with non-convex frontiers by recognising the impact of scale-efficiency (scale-merits and scale-demerits) and clusters. For our model, the clustering plays a fundamental role, which is as important as the selection of DMUs, input/output items and DEA models.

Future research subjects include studies into alternative scale-efficiency measures, rather than using the CRS/VRS ratio and clustering methods. Applications to negative data, cost, revenue, and profit models are also potential subjects for future research. We believe this study introduces a new roadmap for DEA research.

References

Avkiran, N.K.: Investigating technical and scale efficiencies of Australian universities through data envelopment analysis. Socio-Eco. Plann. Sci. 35, 57–80 (2001)

Avkiran, N.K., Tone, K., Tsutsui, M.: Bridging radial and non-radial measures of efficiency in DEA. Ann. Oper. Res. 164, 127–138 (2008)

Bogetoft, P., Otto, L.: Benchmarking with DEA, SFA, and R. Springer, New York (2010)

Dekker, D., Post, T.: A quasi-concave DEA model with an application for bank branch performance evaluation. Eur. J. Oper. Res. 132, 296–311 (2001)

Kousmanen, T.: DEA with efficiency classification preserving conditional convexity. Eur. J. Oper. Res. 132, 326–342 (2001)

Podinovski, V.V.: Local and global returns to scale in performance measurement. J. Oper. Res. Soc. 55, 170–178 (2004)

Olesen, O.B., Petersen, N.C.: Imposing the regular ultra Passum law in DEA models. Omega 41, 16–27 (2013)

Farrell, M.J.: The measurement of productive efficiency. J. Royal Statist. Soc. Ser. A (General) 120(III), 253–281 (1957)

Farrell, M.J., Fieldhouse, M.: Estimating efficient production functions under increasing returns to scale. J. Royal Statist. Soc. Ser. A (General) 125(2), 252–267 (1962)

Charnes, A., Cooper, W.W., Rhodes, E.: Evaluating program and managerial efficiency: an application of data envelopment analysis to program follow through. Manag. Sci. 27, 668–678 (1981)

Førsund, F.R., Kittelsen, S.A.C., Krivonozhko, V.E.: Farrell revisited—visualizing properties of DEA production frontiers. J. Oper. Res. Soc. 60, 1535–1545 (2009)

Krivonozhko, V.E., Utkin, O.B., Volodin, A.V., Sablin, I.A., Patrin, M.: Constructions of economic functions and calculation of marginal rates in DEA using parametric optimization methods. J. Oper. Res. Soc. 55, 1049–1058 (2004)

Deprins, D., Simar, L., Tulkens, H.: Measuring labor efficiency in post office. In Marchand, P., Pestieau, P., Tulkens, H. (eds.) The performance of public Enterprises: Concepts and measurement, pp. 243–267. North-Holland (1984)

Tone, K.: A slacks-based measure of efficiency in data envelopment analysis. Eur. J. Oper. Res. 130, 498–509 (2001)

Avkiran, N.K.: Applications of data envelopment analysis in the service sector. In: Handbook on Data Envelopment Analysis, Chapter 15. Springer, New York (2011)

Paradi, J. C., Yang, Z., Zhu, H.: Assessing bank and bank branch performance—modeling considerations and approaches. In: Handbook on Data Envelopment Analysis, Chapter 13. Springer, New York (2011)

Cook, W. D.: Qualitative data in DEA. In: Handbook on Data Envelopment Analysis, Chapter 6. Springer, New York (2011)

Charnes, A., Cooper, W.W., Rhodes, E.: Measuring the efficiency of decision making units. Eur. J. Oper. Res. 2, 429–444 (1978)

Banker, R.D., Charnes, A., Cooper, W.W.: Some models for estimating technical and scale inefficiencies in data envelopment analysis. Manag. Sci. 30, 1078–1092 (1984)

Banker, R.D., Thrall, R.M.: Estimation of returns to scale using data envelopment analysis. Eur. J. Oper. Res. 62, 74–84 (1992)

Banker, R.D., Cooper, W.W., Seiford, L.M., Thrall, R.M., Zhu, J.: Returns to scale in different DEA models. Eur. J. Oper. Res. 154, 345–362 (2004)

Färe, R., Primond, D.: Multi-Output Production and Duality: Theory and application. Kluwer Academic Press, Norwell, MA (1995)

Førsund, F.R., Hjalmarsson, L.: Are all scales optimal in DEA? theory and empirical evidence. J. Prod. Anal. 21, 25–48 (2004)

Førsund, F.R., Hjalmarsson, L.: Calculating scale elasticity in DEA models. J. Oper. Res. Soc. 55, 1012–1038 (2004)

Cooper, W.W., Seiford, L.M., Tone, K.: Data Envelopment Analysis: A Comprehensive Text with Models, Applications, References and DEA-Solver Software. Springer, Berlin (2007)

Acknowledgments

We are grateful to two reviewers for their comments and suggestions on the previous version of the manuscript. This research was supported by JSPS KAKENHI Grant Number 25282090.

Author information

Authors and Affiliations

Corresponding author

Additional information

Communicated by Jamal Ouenniche.

Appendices

Appendix A: Clustering Using Returns-to-Scale and Scale-Efficiency

We already know the returns-to-scale (RTS) characteristics of each DMU, \(i.e.\), increasing-returns-to-scale (IRS), CRS or DRS (decreasing returns-to-scale), as it is obtained from the VRS solution by projecting VRS inefficient DMUs onto the VRS efficient frontiers. We first classify CRS DMUs as Cluster C. Then, we classify IRS DMUs depending on the degree of scale-efficiency, \(\sigma \). For example, IRS DMUs with 1\(>\sigma \ge \)0.8 are classified as I1, and IRS DMUs with 0.8\(>\sigma \ge \)0.6 as I2, and so on. DRS DMUs with 1\(>\sigma \ge \)0.8 are classified as D1, and so on, as above. We determine the number of clusters and their bandwidth by considering the number of DMUs in the cluster. Each cluster is expected to have at least as many DMUs as a few times the sum of the input and output factors. Figure 10 illustrates this point. This figure corresponds to the input-oriented case, where DMUs with highly different input scales may be classified into the same cluster. If such classification is inappropriate, we may try the output-oriented, non-oriented or directional distance models to determine clusters.

Appendix B: Proof of Propositions

Proposition 3.1

\( \theta _k^{\mathrm{{SAS}}} \ge \theta _k^{\mathrm{{CRS}}} \quad (k=1,\ldots ,n).\)

Proof

The CRS scores for \(({\mathbf {x}}_k,{\mathbf {y}}_k )\) and \(({\overline{\mathbf {x}}}_k,{\overline{\mathbf {y}}}_k )\), respectively, are defined by:

and

We prove this proposition for two individual cases.

(Case 1) All DMUs belong to the same cluster.

In this case (B.2) becomes:

Let \(({\varvec{\lambda }}^*,{\mathbf {t}}^{-*},{\mathbf {t}}^{+*})\) be an optimal solution for (B.3). Since \(P({\mathbf {X}},{\mathbf {Y}})=P(\overline{\mathbf {X}} ,\overline{\mathbf {Y}} )\) by Lemma 3.1, and both sets have the same efficient DMUs which span \(({\overline{\mathbf {x}}}_k ,{\overline{\mathbf {y}}}_k )\;\), we have:

This indicates that \(({\varvec{\lambda }}^*,{\mathbf {t}}^{-*}+(1-\sigma _k ){\mathbf {s}}_k^{-*} ,{\mathbf {t}}^{+*}+(1-\sigma _k ){\mathbf {s}}_k^{+*} )\) is feasible for (B.1), and hence its objective function value is not less than the optimal value, \(\theta _k^{\mathrm{{CRS}}} \).

Conversely, \({\mathbf {t}}^{-*} =\sigma _k {\mathbf {s}}_k^{-*}\,\text{ and }\,{\mathbf {t}}^{+*} =\sigma _k {\mathbf {s}}_k^{+*}\) are feasible for SAS, and hence it holds that \(\theta _k^{\mathrm{{SAS}}} =\theta _k^{\mathrm{{CRS}}} \,(k=1,\ldots ,n).\)

(Case 2) Multiple clusters exist.

In this case, we have additional constraints to (B.3) to define the cluster restriction, as follows:

Since adding constraints results in an increase of the objective value, it holds that:

\(\square \)

Proposition 3.2

If \(\theta _o^{\mathrm{{CRS}}} =1\), then it holds that \(\theta _o^{\mathrm{{SAS}}} =\theta _o^{\mathrm{{CRS}}} \), but not vice versa.

Proof

If \(\theta _k^{\mathrm{{CRS}}} =1\), then we have \({\mathbf {s}}_k^{-*} ={\mathbf {0}}\,\text{ and } {\mathbf {s}}_k^{+*} ={\mathbf {0}}\). Hence, we have total slacks=0 and \(\theta _k^{\mathrm{{SAS}}} =1\). The converse is not always true, as demonstrated in the example below, where all DMUs belong to an independent cluster.

DMU | (l)x | (O)y | Cluster |

|---|---|---|---|

A | 2 | 2 | a |

B | 4 | 2 | b |

C | 6 | 2 | c |

DMU | CRS-I | SAS-I | Cluster |

|---|---|---|---|

A | 1 | 1 | a |

B | 0.5 | 1 | b |

C | 0.3333 | 1 | c |

\(\square \)

Proposition 3.3

SAS decreases with increasing input and decreasing output so long as both DMUs remain in the same cluster.

Proof

Let \(( {{\mathbf {x}}_p,{\mathbf {y}}_p })\,\,\text{ and } ( {{\mathbf {x}}_{q},{\mathbf {y}}_q })\,\text{ with } {\mathbf {x}}_p \le {\mathbf {x}}_q \,\text{ and } {\mathbf {y}}_p \ge {\mathbf {y}}_q \) be the original and varied DMUs, respectively, in the same cluster. Let \({\mathbf {x}}_q ={\mathbf {x}}_p +{\varvec{\delta }}_p^- \;({\varvec{\delta }}_p^- \ge {\mathbf {0}}),{\mathbf {y}}_q ={\mathbf {y}}_p -{\varvec{\delta }}_p^+ \;({\varvec{\delta }}_p^+ \ge {\mathbf {0}})\) and the optimal solution for \(( {{\mathbf {x}}_{p},{\mathbf {y}}_p })\,\) be \((\theta _p^{\mathrm{{SAS}}},{\varvec{\lambda }}_p^*,{\mathbf {s}}_p^{-*},{\mathbf {s}}_p^{+*} )\). We have \({\mathbf {X}}{\varvec{\lambda }}_p^*+{\mathbf {s}}_p^{-*} ={\mathbf {x}}_p ={\mathbf {x}}_q -{\varvec{\delta }}_p^-, \quad {\mathbf {Y}}{\varvec{\lambda }}_p^*-{\mathbf {s}}_p^{+*} ={\mathbf {y}}_p ={\mathbf {y}}_q +{\varvec{\delta }}_p^+ \). Hence \(({\mathbf {s}}_p^{-*} +{\varvec{\delta }}_p^- ,{\mathbf {s}}_p^{+*} +{\varvec{\delta }}_p^+ )\)is a feasible slack for \(( {{\mathbf {x}}_{q},{\mathbf {y}}_q })\,\). We have

\(\square \)

Proposition 3.4

The projected DMU \((\overline{{\overline{{\mathbf {x}}}}}_k ,\overline{{\overline{{\mathbf {y}}}}}_k )\) is efficient under the SAS model among the DMUs in its containing cluster. It is CRS and VRS efficient among the DMUs in its cluster.

Proof

From the definition of \((\overline{{\overline{{\mathbf {x}}}}}_k ,\overline{{\overline{{\mathbf {y}}}}}_k )\), it is SAS efficient. Thus, it is CRS and VRS efficient in its cluster. \(\square \)

Proposition 5.1

Proof

This term is increasing in \(\sigma _k \) and is equal to 1 when \(\sigma _k \)=1. \(\square \)

Proposition 5.2

Proof

If \(\sigma _k =1\), it holds \(\theta _k^{scale} =\sigma _k +\theta _k^{\mathrm{{CCR}}} -\sigma _k \theta _k^{\mathrm{{CCR}}} =1.\) Conversely, if \(\theta _k^\mathrm{{scale}} =\sigma _k +\theta _k^{\mathrm{{CCR}}} -\sigma _k \theta _k^{\mathrm{{CCR}}} =1\), we have \(\sigma _k (1-\theta _k^{\mathrm{{CCR}}} )=1-\theta _k^{\mathrm{{CCR}}} \). Hence, if \(\theta _k^{\mathrm{{CCR}}} <1\), then it holds \(\sigma _k =1.\) If \(\theta _k^{\mathrm{{CCR}}} =1\), then we have \(\theta _k^{\mathrm{{BCC}}} =1\;\text{ and }\,\sigma _k =1.\) \(\square \)

Rights and permissions

About this article

Cite this article

Tone, K., Tsutsui, M. How to Deal with Non-Convex Frontiers in Data Envelopment Analysis. J Optim Theory Appl 166, 1002–1028 (2015). https://doi.org/10.1007/s10957-014-0626-3

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s10957-014-0626-3

Keywords

- Data envelopment analysis

- S-shaped curve

- Constant returns-to-scale

- Variable returns-to-scale

- Scale elasticity