Abstract

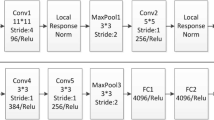

Image auto-annotation, which annotates images according to their semantic content, has become a research focus in computer vision because it helps people edit, retrieve, and understand large image collections. Over the last decades, researchers have proposed many approaches to this task and achieved remarkable performance on several standard image datasets. In this paper, we train neural networks using visual and semantic ranking loss to learn a visual-semantic embedding. This embedding can be easily applied to nearest-neighbor based models to boost their performance on image auto-annotation. We test our method on four challenging image datasets and report comparisons with existing work. Experimental results show that our method can be applied to several state-of-the-art nearest-neighbor based models, including TagProp and 2PKNN, and significantly improves their performance.
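As a rough illustration of the two ideas named in the abstract, the sketch below pairs a generic triplet-style hinge ranking loss with a plain nearest-neighbor tag vote in the embedding space. It is not the authors' formulation: the function names, the margin value, the Euclidean distance, the majority-vote rule, and the toy embeddings and tags are all assumptions made for illustration, and TagProp and 2PKNN use more elaborate neighbor weighting than this.

```python
import math
from collections import Counter


def euclidean(u, v):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def triplet_ranking_loss(anchor, positive, negative, margin=1.0):
    """Hinge ranking loss (assumed form, not taken from the paper).

    The loss is zero once the positive example is closer to the anchor
    than the negative example by at least `margin`.
    """
    return max(0.0, margin + euclidean(anchor, positive) - euclidean(anchor, negative))


def knn_annotate(query, train_embeddings, train_tags, k=2, n_tags=2):
    """Predict tags by counting tag votes among the k nearest training embeddings."""
    order = sorted(
        range(len(train_embeddings)),
        key=lambda i: euclidean(query, train_embeddings[i]),
    )
    votes = Counter(tag for i in order[:k] for tag in train_tags[i])
    return [tag for tag, _ in votes.most_common(n_tags)]


# Toy embeddings and tags (hypothetical data, for illustration only).
train_embeddings = [[0.0, 0.0], [0.1, 0.0], [5.0, 5.0]]
train_tags = [["sky", "sea"], ["sky", "boat"], ["car", "street"]]

# A well-ordered triplet incurs no loss; a badly ordered one is penalized.
loss_good = triplet_ranking_loss([0.0, 0.0], [0.1, 0.0], [5.0, 5.0])
loss_bad = triplet_ranking_loss([0.0, 0.0], [5.0, 5.0], [0.1, 0.0])

# Annotate a query image from its two nearest neighbors in the embedding space.
predicted = knn_annotate([0.05, 0.0], train_embeddings, train_tags, k=2, n_tags=1)
```

In this toy setup, images that share tags sit close together, so the neighbor vote recovers the shared tag. A learned embedding aims for the same property on real features.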

Notes

We also tried ReLU, which performed slightly worse than SER (F1 values decreased by 3–5% in our experiments on the four datasets).

The source code of TagProp is available at: http://lear.inrialpes.fr/people/guillaumin/code.php#tagprop.

The source code of 2PKNN is available at: http://researchweb.iiit.ac.in/~yashaswi.verma/eccv12/2pknn.zip.

These features are available at: http://lear.inrialpes.fr/people/guillaumin/data.php.

Acknowledgements

This work is supported by the Natural Science Foundation of China (No. 61572162) and the Zhejiang Provincial Key Science and Technology Project Foundation (No. 2017C01010).

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Ethical Approval

This article does not contain any studies with human participants or animals performed by any of the authors.

Cite this article

Zhang, W., Hu, H. & Hu, H. Training Visual-Semantic Embedding Network for Boosting Automatic Image Annotation. Neural Process Lett 48, 1503–1519 (2018). https://doi.org/10.1007/s11063-017-9753-9

DOI: https://doi.org/10.1007/s11063-017-9753-9