Abstract

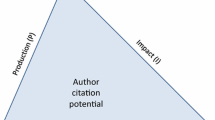

Considering that modern science is conducted primarily through networks of collaborators who organize themselves around key researchers, this research develops and tests a characterization and assessment method that recognizes the endogenous, or self-organizing, characteristics of research groups. Instead of establishing an ad hoc unit of analysis and assuming an unspecified network structure, the proposed method uses knowledge footprints, based on backward citations, to measure and compare the performance and productivity of research groups. The method is demonstrated by ranking research groups in Physics, Applied Physics/Condensed Matter/Materials Science, and Optics in the leading institutions in Mexico. The results show that the understanding of the scientific performance of an institution changes when the unit of analysis used in the assessment is accounted for more carefully. Moreover, evaluations at the group level provide more accurate assessments because they allow for appropriate comparisons within subfields of science. The proposed method could be used to better understand the self-organizing mechanisms of research groups and to assess their performance more accurately.

Notes

In this paper we define a Principal Investigator (PI) as an author with a high number of repeated connections, i.e., a researcher who has written several papers with a high number of coauthors.

We normalize by group size because research groups in Mexico vary greatly in size. This relates to the argument of Hirsch (2005) that publication and citation counts may be inflated by a small number of “big hits”, which may not be representative of a researcher who is one of many coauthors on those papers. For example, the ATLAS collaboration paper published in 2008 had 2926 authors and the one published in 2012 had 3171 authors.

“Co-word analysis deals directly with sets of terms shared by documents instead of with shared citations. Therefore, it maps the pertinent literature directly from the interactions of key terms instead of from the interactions of citations” (Coulter et al. 1998).

The knowledge footprint (KFP) for group i is the union of all the backward citations used by all members of a group in all of their papers within a specific time frame.
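The definition above can be sketched as a set union. This is only an illustration: the `Paper` record, the author labels and the reference identifiers are hypothetical, and the sketch assumes that a paper counts toward the group whenever at least one group member is among its authors.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Paper:
    """Hypothetical record: who wrote the paper, when, and what it cites."""
    authors: frozenset
    year: int
    cited: frozenset  # backward citations (identifiers of cited works)


def knowledge_footprint(papers, members, first_year, last_year):
    """Union of the backward citations of all papers written by any
    group member within [first_year, last_year] (inclusive)."""
    members = set(members)
    footprint = set()
    for paper in papers:
        in_window = first_year <= paper.year <= last_year
        by_group = bool(members & paper.authors)
        if in_window and by_group:
            footprint |= paper.cited
    return footprint


# Toy data, invented for illustration only.
papers = [
    Paper(frozenset({"A", "B", "C"}), 2001, frozenset({"r1", "r2"})),
    Paper(frozenset({"B", "D"}), 2002, frozenset({"r2", "r3"})),
    Paper(frozenset({"A"}), 2006, frozenset({"r4"})),   # outside the window
    Paper(frozenset({"E"}), 2002, frozenset({"r5"})),   # no group member
]

kfp = knowledge_footprint(papers, {"A", "B", "C"}, 2001, 2003)
print(sorted(kfp))
```

Because the footprint is a set, a reference cited in several of the group's papers is counted once.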

We chose Jerzy Plebanski (1928–2005) to exemplify the method because he was a well-known Polish physicist who worked in Mexico for several years (CINVESTAV 2008).

The length of the path is the number of lines in a path (Wasserman and Faust 1994, p. 107). “A path is a walk in which all the nodes and all the lines are distinct” (Wasserman and Faust; ibid.).

A three-year window was chosen to balance the time frames of research projects (Gonzalez-Brambila et al. 2013).

As stated by Krackhardt (1999, p. 186), “Indeed, the triad was special to Simmel primarily because of its contrast to the dyad. In his view, the differences between triads and larger cliques were minimal. The difference between a dyad and a triad, however, was fundamental. Adding a third party to a dyad ‘completely changes them, but… that the further expansion to four or more persons by no means correspondingly modifies the group any further’ (Simmel 1950, p. 138).” Besides, in our sample fewer than 5% of the publications have two authors.

The LSL for a researcher R is defined as the maximum flow fRT between R and T, where fRT is no smaller than the maximum flow fRU between R and any other researcher U. By the max-flow min-cut theorem, this is essentially a measure of the largest number of edges that need to be cut (or papers that need to be removed from the network) so that R is no longer connected to some other researcher (Ford and Fulkerson 1962).

The max-flow min-cut theorem states that the size of the maximum flow, or the total amount of flow that can exist between source node s and target node t using the edges connecting s and t, is equal to the size of the minimum-cut between s and t.
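The two notes above can be illustrated with a small computation. This is a minimal sketch, not the authors' implementation: it assumes an undirected co-authorship network in which the capacity of an edge is the number of joint papers, uses a textbook Edmonds-Karp (breadth-first augmenting path) routine, and the names `build_capacity`, `max_flow` and `lsl`, as well as the toy network, are hypothetical.

```python
from collections import defaultdict, deque


def build_capacity(joint_papers):
    """joint_papers: iterable of (author_a, author_b, number_of_joint_papers).
    Each undirected edge is stored as two arcs with the same capacity."""
    cap = defaultdict(lambda: defaultdict(int))
    for a, b, n in joint_papers:
        cap[a][b] += n
        cap[b][a] += n
    return cap


def max_flow(cap, s, t):
    """Edmonds-Karp maximum flow between s and t on a residual copy of cap."""
    res = {u: dict(nbrs) for u, nbrs in cap.items()}
    flow = 0
    while True:
        # Breadth-first search for an augmenting path in the residual graph.
        parent = {s: None}
        queue = deque([s])
        while queue and t not in parent:
            u = queue.popleft()
            for v, c in res[u].items():
                if c > 0 and v not in parent:
                    parent[v] = u
                    queue.append(v)
        if t not in parent:
            return flow  # no augmenting path left: flow equals the min cut
        # Find the bottleneck capacity along the path, then push that much flow.
        bottleneck = float("inf")
        v = t
        while parent[v] is not None:
            bottleneck = min(bottleneck, res[parent[v]][v])
            v = parent[v]
        v = t
        while parent[v] is not None:
            u = parent[v]
            res[u][v] -= bottleneck
            res[v][u] += bottleneck
            v = u
        flow += bottleneck


def lsl(cap, r):
    """Largest maximum flow between r and any other researcher."""
    return max(max_flow(cap, r, t) for t in cap if t != r)


# Toy network, invented for illustration only.
cap = build_capacity([("R", "A", 3), ("R", "B", 1), ("A", "B", 2), ("B", "C", 1)])
for target in ("A", "B", "C"):
    print(target, max_flow(cap, "R", target))
print("LSL(R) =", lsl(cap, "R"))
```

In this toy network the flow from R to A is limited by the two edges leaving R (3 + 1 joint papers), which is the minimum cut between the two researchers.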

The institutional profile of an author (or CG) is defined as a vector in which each cell contains the number of papers the author has published in a specific institution divided by the total number of papers that author has published. For example, given four institutions A, B, C and D, an author who has published 5 papers in institution A, 3 in institution B, 2 in institution C and 0 in institution D has the institutional profile (0.5, 0.3, 0.2, 0.0). The concept extends to collaboration groups by counting, for each institution, the number of papers each author of the CG has published in that institution and dividing this number by the total number of papers the CG has.
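The worked example in this note can be reproduced in a few lines. This is a sketch under stated assumptions: the function names and the second group member are hypothetical, and for the group case the sketch simply sums the per-author counts and divides by that sum, which is one possible reading of "the total number of papers the CG has".

```python
from collections import Counter


def institutional_profile(paper_counts, institutions):
    """Share of papers per institution, in the order given by `institutions`.
    paper_counts maps an institution to a number of papers."""
    total = sum(paper_counts.get(inst, 0) for inst in institutions)
    if total == 0:
        return tuple(0.0 for _ in institutions)
    return tuple(paper_counts.get(inst, 0) / total for inst in institutions)


def group_profile(member_counts, institutions):
    """Profile of a collaboration group: per-author counts are summed
    (assumption: papers are counted once per member author)."""
    combined = Counter()
    for counts in member_counts:
        combined.update(counts)
    return institutional_profile(combined, institutions)


institutions = ("A", "B", "C", "D")

# The author from the note: 5, 3, 2 and 0 papers in A, B, C and D.
author = {"A": 5, "B": 3, "C": 2}
print(institutional_profile(author, institutions))

# A hypothetical second member, invented for illustration.
other = {"A": 5, "D": 5}
print(group_profile([author, other], institutions))
```

Each profile sums to one, so authors or groups with very different output volumes can be compared on where they publish rather than on how much.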

We also repeated the analysis with different values, such as ±2 around the chosen critical values (with the exception of 1%). No relevant differences were found.

Single-institution groups (with three or more researchers in a particular institution) are used to properly assess the performance of that institution. Multiple-institution groups appear as a baseline application of the method because the initial criterion is only co-authorship in the context of a clique. Only when the institution is taken into account in defining the boundary of the groups do we obtain groups that are meaningful from the point of view of benchmarking.

References

Adams, J. (1998). Benchmarking international research. Nature, 396, 615–618.

Borgatti, S., Everett, M., & Shirey, P. (1990). LS Sets, lambda sets and other cohesive subsets. Social Networks, 12, 337–357.

Braun, T., Gómez, I., Méndez, A., & Schubert, A. (1992). International co-authorship patterns in physics and its subfields, 1981–1985. Scientometrics, 24, 181–200.

Calero, C., Buter, R., Cabello-Valdés, C., & Noyons, E. (2006). How to identify research groups using publication analysis: an example in the field of nanotechnology. Scientometrics, 66(2), 365–376.

Callon, M., Courtial, J. P., Turner, W. A., & Bauin, S. (1983). From translations to problematic networks: an introduction to co-word analysis. Social Science Information, 22, 191–235.

CINVESTAV. (2008). Jerzy Plebanski’s Biography. Downloaded on 12 January 2009 from http://www.fis.cinvestav.mx/~grupogr/gr_mphysics/plebanski/Plebanski.html.

Cohen, J. E. (1991). Size, age and productivity of scientific and technical research groups. Scientometrics, 20(3), 395–416.

Collazo-Reyes, F., & Luna-Morales, M. E. (2002). Física mexicana de partículas elementales. INCI, 27, 347–353.

Collazo-Reyes, F., Luna-Morales, M. E., & Russell, J. M. (2004). Publication and citation patterns of the Mexican contribution to a “Big Science” discipline: Elementary particle physics. Scientometrics, 60, 131–143.

COSEPUP. (1999). Evaluating federal research programs: Research and the government performance and results act. Washington, DC: National Academy Press.

Coulter, N., Monarch, I., & Konda, S. (1998). Software engineering as seen through its research literature: A study in co-word analysis. Journal of the American Society for Information Science, 49, 1206–1223.

Du, N., Wu, B., Pei, X., Wang, B., & Xu, L. (2007). Community detection in large-scale social networks. Proceedings of WebKDD and SNA-KDD (pp. 16–25). New York, NY: ACM.

Ford, L. R., & Fulkerson, D. R. (1962). Flows in networks. Princeton, NJ: Princeton University Press.

Frederiksen, L. F., Hansson, F., & Wenneberg, S. B. (2003). The agora and the role of research. Evaluation, 9, 149–172.

Garfield, E. (1979). Is citation analysis a legitimate evaluation tool? Scientometrics, 1, 359–375.

Georghiou, L., & Roessner, D. (2000). Evaluating technology programs: tools and methods. Research Policy, 29, 657–678.

Glänzel, W., & Schubert, A. (2004). Analyzing scientific networks through co-authorship. In W. Glänzel & U. Schmoch (Eds.), Handbook of quantitative science and technology research (pp. 257–276). Dordrecht: Springer.

Gläser, J., Spurling, T. H., & Butler, L. (2004). Intraorganisational evaluation: Are there ‘least evaluable units’? Research Evaluation, 13, 19–32.

Gmür, M. (2003). Co-citation analysis and the search for invisible colleges: A methodological evaluation. Scientometrics, 57, 27–57.

Gomez, I., Fernandez, M. T., & Sebastian, J. (1999). Analysis of the structure of international scientific cooperation networks through bibliometric indicators. Scientometrics, 44, 441–457.

Gonzalez-Brambila, C. N., & Veloso, F. M. (2007). The determinants of research output and impact: A study of Mexican researchers. Research Policy, 36(7), 1035–1051.

Gonzalez-Brambila, C. N., Veloso, F. M., & Krackhardt, D. (2013). The impact of network embeddedness on research output. Research Policy, 42(9), 1555–1567.

Guimera, R., Uzzi, B., Spiro, J., & Nunes Amaral, L. A. (2005). Team assembly mechanisms determine collaboration network structure and team performance. Science, 308, 697–702.

Guston, D. H. (2003). The expanding role of peer review process in the United States. In P. Shapira & S. Kuhlmann (Eds.), Learning from science and technology policy evaluation (pp. 81–97). Northampton, MA: Edward Elgar Publishing.

Hirsch, J. E. (2005). An index to quantify an individual’s scientific research output. Proceedings of the National Academy of Sciences of the United States of America, 102(46), 16568–16572.

Kane, A. (2001). Indicators and evaluation for science, technology and innovation. ICSTI Task Force on Metrics and Impact. Downloaded on 15 August 2005 from http://www.economics.nuigalway.ie/people/kane/2002_2003ec378.html.

Katz, J. S., & Martin, B. R. (1997). What is research collaboration? Research Policy, 26, 1–18.

King, A. D. (2004). The scientific impact of nations. Nature, 430, 311–316.

Krackhardt, D. (1999). The ties that torture: Simmelian tie analysis in organizations. Research in the Sociology of Organizations, 16, 183–210.

Leydesdorff, L. (1987). Various methods for the Mapping of Science. Scientometrics, 11, 291–320.

Lu, H., & Feng, Y. (2009). A measure of authors’ centrality in co-authorship networks based on the distribution of collaborative relationships. Scientometrics, 81(2), 499–511.

Martin, B. R., & Irvine, J. (1983). Assessing basic research: Some partial indicators of scientific progress in radio astronomy. Research Policy, 12, 61–90.

Marx, W., & Hoffmann, T. (2011). Bibliometric analysis of fifty years of physica status solidi. Physica Status Solidi, 248(12), 2762–2771.

May, R. M. (1997). The scientific wealth of nations. Science, 275, 793–796.

Melin, G., & Persson, O. (1996). Studying research collaboration using co-authorships. Scientometrics, 36, 363–377.

Miquel, J. F., Ojasoo, T., Okubo, Y., Paul, A., & Doré, J. (1995). World science in 18 disciplinary areas: comparative evaluation of the publication patterns of 48 countries over the period 1981–1992. Scientometrics, 33, 149–167.

Moed, H. F. (2005). Citation analysis in research evaluation. Dordrecht: Springer.

Moody, J. (2004). The structure of a social science collaboration network: Disciplinary cohesion from 1963 to 1999. American Sociological Review, 69(2), 213–238.

Nagpaul, P. S., & Sharma, L. (1994). Research output and transnational cooperation in physics subfields: A multidimensional analysis. Scientometrics, 31, 97–122.

Narin, F. (1976). Evaluative bibliometrics: The use of publication and citation analysis in the evaluation of scientific activity. Washington, DC: National Science Foundation.

Narin, F. (1978). Objectivity versus relevance in studies of scientific advance. Scientometrics, 1, 35–41.

Narvaez-Berthelemot, N., Frigoletto, L. P., & Miquel, J. F. (1992). International scientific collaboration in Latin America. Scientometrics, 24, 373–392.

Newman, M. E. J. (2001). The structure of scientific collaboration networks. Proceedings of the National Academy of Sciences, 98, 404–409.

Newman, M. E. J. (2004). Coauthorship networks and patterns of scientific collaboration. Proceedings of the National Academy of Sciences, 101, 5200–5205.

Noyons, E. C. M. (2004). Science maps within a science policy context. In H. F. Moed, W. Glänzel, & U. Schmoch (Eds.), Handbook of quantitative science and technology research. The use of publication and patent statistics in studies of S&T systems (pp. 237–256). Dordrecht: Kluwer Academic Publishers.

Noyons, E. C. M., Moed, H. F., & Luwel, M. (1999a). Combining mapping and citation analysis for evaluative bibliometric purposes: A bibliometric study. Journal of the American Society for Information Science, 50, 115–131.

Noyons, E. C. M., Moed, H. F., & van Raan, A. F. J. (1999b). Integrating research performance analysis and science mapping. Scientometrics, 46(3), 591–604.

Perianes-Rodriguez, A., Olmeda-Gomez, C., & Moya-Anegon, F. (2010). Detecting, identifying and visualizing research groups in co-authorship networks. Scientometrics, 82, 307–319.

Rip, A. (2000). Societal challenges for R&D evaluation. In P. Shapira & S. Kuhlmann (Eds.), Learning from science and technology policy evaluation (pp. 18–41). Cheltenham: Edward Elgar Publishing.

Russell, J. M. (1995). The increasing role of international cooperation in science and technology research in Mexico. Scientometrics, 34, 45–61.

Russell, J. M., del Río, J. A., & Cortés, H. D. (2007). Highly visible science: A look at three decades of research from Argentina, Brazil, Mexico and Spain. INCI, 32, 629–634.

Thomson Scientific. (2004). National Citation Report 1980–2003: Customized database for the Mexican Council for Science and Technology (CONACYT). Philadelphia, PA: Thomson Research.

Small, H. G. (1973). Co-citation in scientific literature: A new measure of the relationship between publications. Journal of the American Society for Information Science, 24, 265–269.

Small, H. G. (1977). Co-citation model of a scientific specialty: a longitudinal study of collagen research. Social Studies of Science, 7, 139–166.

Small, H. G. (1978). Cited documents as concept symbols. Social Studies of Science, 8, 327–340.

Thomson-ISI. (2003). National Science Indicators database—Deluxe version. Philadelphia, PA: Thomson Research.

Simmel, G. (1950). The sociology of Georg Simmel. New York: Free Press.

van Raan, A. F. J. (2000). R&D evaluation at the beginning of the new century. Research Evaluation, 9, 81–86.

van Raan, A. F. J. (2004). Measuring science: Capita selecta of current main issues. In H. F. Moed, W. Glänzel, & U. Schmoch (Eds.), Handbook of quantitative science and technology research (pp. 19–50). Dordrecht: Kluwer Academic Publishers.

van Raan, A. F. J. (2005). Fatal Attraction: Conceptual and methodological problems in the ranking of universities by bibliometric methods. Scientometrics, 62, 133–143.

Wagner, C. S., & Leydesdorff, L. (2005). Network structure, self-organization, and the growth of international collaboration in science. Research Policy, 34, 1608–1618.

Wasserman, S., & Faust, K. (1994). Social network analysis: Methods and applications. Cambridge: Cambridge University Press.

White, H. D., & McCain, K. W. (1998). Visualizing a discipline: An author co-citation analysis of information science, 1972–1995. Journal of the American Society for Information Science, 49, 327–355.

Yang, T., Jun, R., Chi, Y., & Zhu, S. (2009). Combining link and content for community detection: a discriminative approach. Proceedings of KDD (pp. 927–936). New York: ACM.

Zulueta, M. A., & Bordons, M. (1999). A global approach to the study of teams in multidisciplinary research areas through bibliometric indicators. Research Evaluation, 8(2), 111–118.

Acknowledgments

The authors gratefully acknowledge support from Conacyt. Gonzalez-Brambila also thanks the Instituto Tecnologico Autonomo de Mexico and the Asociación Mexicana de Cultura AC for their generous support.

Cite this article

Reyes-Gonzalez, L., Gonzalez-Brambila, C.N. & Veloso, F. Using co-authorship and citation analysis to identify research groups: a new way to assess performance. Scientometrics 108, 1171–1191 (2016). https://doi.org/10.1007/s11192-016-2029-8

DOI: https://doi.org/10.1007/s11192-016-2029-8