Abstract

Citations are commonly used to measure the academic impact of scientific publications, including books. However, a book’s citation frequency is a single numerical metric. It neglects details about the book (e.g. its content), which may reduce the comprehensiveness of evaluation results. Hence, fine-grained mining of books’ citation information that integrates frequency metrics with content metrics can yield more reliable evaluation results. The citation literature of a book (i.e. the literature that cites the book) not only shows how often the book is cited but also reflects citation intentions, topics and domains. Existing research has conducted citation analysis by examining the citation frequencies, authors or citation contexts of the citation literature. However, citation contexts can be costly to collect, and such analysis neglects latent information in the citation literature, such as the impact scope or topics of a book that the citation literature reflects. Therefore, in this paper, we conducted a fine-grained analysis of books’ citation literature to assess whether it can be used systematically as an indicator of books’ wider impacts. Specifically, we first collected books and the corresponding information about their citation literature. Then, we extracted multi-dimensional metrics via multi-granularity mining of the citation literature, and obtained assessment results by integrating content-level and frequency-level metrics. Finally, to verify the assessment results, we compared those based on citation literature with existing metrics for assessing books’ impacts. Experimental results suggest that citation literature is a promising source for book impact assessment, especially for books’ academic impacts.
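
The integration of frequency-level and content-level metrics described above can be illustrated with a minimal sketch. It is not the procedure used in the paper: the choice of domain diversity as the content-level metric, the Gini-Simpson formula, min-max normalisation, the equal weights, the function names and the toy data are all assumptions made only for illustration.

```python
from collections import Counter


def simpson_diversity(labels):
    """Gini-Simpson diversity, 1 - sum(p_i^2), over the shares of each label."""
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return 1.0 - sum((c / total) ** 2 for c in counts.values())


def min_max(values):
    """Rescale values to [0, 1]; a constant list maps to all zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def assess(books, w_freq=0.5, w_content=0.5):
    """Combine a frequency-level and a content-level metric per book.

    `books` maps a title to the domain labels of its citing documents
    (one label per citing document). The weights are assumed, not
    taken from the paper.
    """
    titles = list(books)
    # Frequency-level metric: number of citing documents.
    freq = [float(len(books[t])) for t in titles]
    # Content-level metric (assumed): diversity of citing domains.
    breadth = [simpson_diversity(books[t]) for t in titles]
    norm_freq, norm_breadth = min_max(freq), min_max(breadth)
    return {
        t: w_freq * f + w_content * b
        for t, f, b in zip(titles, norm_freq, norm_breadth)
    }


# Hypothetical toy data: domain labels of each book's citing documents.
books = {
    "Book A": ["computer science"] * 4,
    "Book B": ["computer science", "linguistics"],
    "Book C": ["medicine"],
}
scores = assess(books)
for title, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{title}: {score:.3f}")
```

In this toy example a book cited less often but from more varied domains can outrank a more frequently cited book, which is the kind of difference a frequency-only metric would miss.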

References

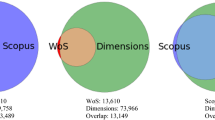

Adriaanse, L. S., & Rensleigh, C. (2013). Web of science, Scopus and Google Scholar: A content comprehensiveness comparison. The Electronic Library, 31(6), 727–744.

Barilan, J. (2010). Citations to the “Introduction to informetrics” indexed by WOS. Scopus and Google Scholar. Scientometrics, 82(3), 495–506.

Chien-Lih, H. (2005). An elementary derivation of Euler’s series for the arctangent function. The Mathematical Gazette, 89(516), 469–470.

China, T. S. A. O. (2009). Chinese discipline classification and code GB/T13745-2009.

Cortes, C., & Vapnik, V. (1995). Support-vector networks. Machine Learning, 20(3), 273–297.

Gorraiz, J., Purnell, P. J., & Glänzel, W. (2014). Opportunities for and limitations of the book citation index. Journal of the Association for Information Science & Technology, 64(7), 1388–1398.

Harzing, A.-W. K., & Van der Wal, R. (2008). Google Scholar as a new source for citation analysis. Ethics in Science and Environmental Politics, 8(1), 61–73.

Hernández-Alvarez, M., Soriano, J. M. G., & Martínez-Barco, P. (2017). Citation function, polarity and influence classification. Natural Language Engineering, 23(4), 561–588.

Hoffman, M. D., Blei, D. M., & Bach, F. R. (2010). Online learning for latent Dirichlet allocation. Advances in Neural Information Processing Systems, 23, 856–864.

Huang, Z., & Yuan, B. (2012). Mining Google scholar citations: An exploratory study. In Proceedings of the international conference on intelligent computing (pp. 182–189).

Jacsó, P. (2005). Google Scholar: The pros and the cons. Online Information Review, 29(2), 208–214.

Jian, W., Bart, T., & Wolfgang, G. (2015). Interdisciplinarity and impact: Distinct effects of variety, balance, and disparity. PLoS ONE, 10(5), e0127298.

Kousha, K., & Thelwall, M. (2015a). Alternative metrics for book impact assessment: Can choice reviews be a useful source? In Proceedings of the 15th international conference on scientometrics and informetrics (pp. 59–70).

Kousha, K., & Thelwall, M. (2015b). An automatic method for extracting citations from Google Books. Journal of the Association for Information Science and Technology, 66(2), 309–320.

Kousha, K., & Thelwall, M. (2016). Can Amazon.com reviews help to assess the wider impacts of books. Journal of the Association for Information Science & Technology, 67(3), 566–581.

Kousha, K., Thelwall, M., & Abdoli, M. (2016). Goodreads reviews to assess the wider impacts of books. Journal of the Association for Information Science & Technology, 68(8), 2004–2016.

Kousha, K., Thelwall, M., & Rezaie, S. (2011). Assessing the citation impact of books: The role of Google books, Google Scholar, and Scopus. Journal of the American Society for Information Science and Technology, 62(11), 2147–2164.

Levine-Clark, M., & Gil, E. L. (2009). A comparative citation analysis of web of science, Scopus, and Google Scholar. Journal of Business & Finance Librarianship, 14(1), 32–46.

Lewison, G. (2001). Evaluation of books as research outputs in history of medicine. Research Evaluation, 10(2), 89–95.

Leydesdorff, L., & Felt, U. (2012). “Books” and “book chapters” in the book citation index (BKCI) and science citation index (SCI, SoSCI, A&HCI). Proceedings of the American Society for Information Science & Technology, 49(1), 1–7.

Li, J., Burnham, J. F., Lemley, T., & Britton, R. M. (2010). Citation analysis: Comparison of Web of Science®, Scopus™, SciFinder®, and Google Scholar. Journal of Electronic Resources in Medical Libraries, 7(3), 196–217.

Maity, S. K., Panigrahi, A., & Mukherjee, A. (2018). Analyzing social book reading behavior on Goodreads and how it predicts Amazon best sellers. In Proceedings of the international conference on advances in social networks analysis and mining (pp. 211–235).

McCain, K. W., & Salvucci, L. J. (2006). How influential is Brooks’ law? A longitudinal citation context analysis of Frederick Brooks’ the mythical man-month. Journal of Information Science, 32(3), 277–295.

Mika, S., Ratsch, G., Weston, J., Scholkopf, B., & Mullers, K. R. (1999). Fisher discriminant analysis with kernels. In Proceedings of the 9th IEEE workshop on neural networks for signal processing (pp. 41–48).

Nie, H. Z., Pan, L., Qiao, Y., & Yao, X. P. (2009). Comprehensive fuzzy evaluation for transmission network planning scheme based on entropy weight method. Power System Technology, 33(11), 278–281.

Rajesh, P., Vedika, G., Kumar, S. V., David, P., David, P., Kumar, S. V., et al. (2018). Book impact assessment: A quantitative and text-based exploratory analysis. Journal of Intelligent & Fuzzy Systems., 34(5), 3101–3110.

Simpson, E. H. (1949). Measurement of diversity. Nature, 163(4148), 688.

Thelwall, M., & Abrizah, A. (2014). Can the impact of non-Western academic books be measured? An investigation of Google Books and Google Scholar for Malaysia. Journal of the Association for Information Science & Technology, 65(12), 2498–2508.

Torres-Salinas, D., Robinson-García, N., Cabezas-Clavijo, Á., & Jiménez-Contreras, E. (2014). Analyzing the citation characteristics of books: Edited books, book series and publisher types in the book citation index. Scientometrics, 98(3), 2113–2127.

Torres-Salinas, D., Robinson-Garcia, N., Campanario, J. M., & Lópezcózar, E. D. (2013). Coverage, field specialisation and the impact of scientific publishers indexed in the Book Citation Index. Online Information Review, 38(1), 24–42.

Tsay, M.-Y., Shen, T.-M., & Liang, M.-H. (2016). A comparison of citation distributions of journals and books on the topic “information society”. Scientometrics, 106(2), 475–508.

White, H. D., Boell, S. K., Yu, H., Davis, M., Wilson, C. S., & Cole, F. T. H. (2009). Libcitations: A measure for comparative assessment of book publications in the humanities and social sciences. Journal of the American Society for Information Science and Technology, 60(6), 1083–1096.

Zhang, C., & Zhou, Q. (2020). Assessing books’ depth and breadth via multi-level mining on tables of contents. Journal of Informetrics, 14(2), 101032.

Zhou, Q., Zhang, C., Zhao, S. X., & Chen, B. (2016). Measuring book impact based on the multi-granularity online review mining. Scientometrics, 107(3), 1435–1455.

Zuccala, A., & Cornacchia, R. (2016). Data matching, integration, and interoperability for a metric assessment of monographs. Scientometrics, 108(1), 465–484.

Zuccalá, A., & Leeuwen, T. V. (2014). Book reviews in humanities research evaluations. Journal of the American Society for Information Science and Technology, 62(10), 1979–1991.

Zuccala, A., & Robinson-Garcia, N. (2019). Reviewing, indicating, and counting books for modern research evaluation systems. In Springer handbook of science and technology indicators (pp. 715–728).

Zuccala, A., Someren, M. V., & Bellen, M. V. (2014). A machine-learning approach to coding book reviews as quality indicators: Toward a theory of megacitation. Journal of the Association for Information Science & Technology, 65(11), 2248–2260.

Zuccala, A. A., Verleysen, F. T., Cornacchia, R., & Engels, T. C. E. (2015). Altmetrics for the humanities comparing Goodreads reader ratings with citations to history books. Aslib Journal of Information Management, 67(3), 320–336.

Acknowledgement

This work is supported by the National Social Science Fund Project (No. 19CTQ031).

Cite this article

Zhou, Q., Zhang, C. Evaluating wider impacts of books via fine-grained mining on citation literatures. Scientometrics 125, 1923–1948 (2020). https://doi.org/10.1007/s11192-020-03676-2

DOI: https://doi.org/10.1007/s11192-020-03676-2