Abstract

Examining the relationships among scientific disciplines is important today, but existing methods are limited by the contents and structure of their bibliographic databases. We therefore demonstrate a novel approach that measures disparity by examining the organization of published scientific books and monographs into Library of Congress Subject Headings. After outlining the method and analyses conducted, we compare our results with those produced by prior works, note potential implications of the demonstrated method for use by bibliometric practitioners, and suggest directions for further research.

Notes

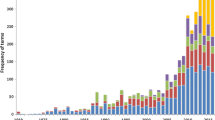

There are 1,065 books published before 1830, including 1,018 books for which the publication year is missing.

Cosine similarity has frequently been used to measure the disparity (or similarity) among disciplines in previous bibliometric studies (Ahlgren et al., 2003; Huang et al., 2021; Zhang et al., 2016; Zhang et al., 2021). It provides more reasonable and intuitive results for measuring the similarity among scientific disciplines than the Pearson correlation does (Klavans & Boyack, 2006).
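As a minimal sketch of the comparison described in this note, the following computes cosine similarity and the Pearson correlation for two discipline profiles. The count vectors are hypothetical, the function names are illustrative, and taking disparity as one minus the cosine similarity is an assumption made here rather than a formula stated in this note. The example shows one property often raised in this debate: padding both profiles with shared zero counts leaves the cosine unchanged but shifts the Pearson correlation.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length count vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        # Convention chosen for this sketch: an all-zero profile
        # is treated as similar to nothing.
        return 0.0
    return dot / (norm_u * norm_v)


def pearson_correlation(u, v):
    """Pearson's r, computed as the cosine of the mean-centered vectors."""
    mean_u = sum(u) / len(u)
    mean_v = sum(v) / len(v)
    return cosine_similarity(
        [a - mean_u for a in u],
        [b - mean_v for b in v],
    )


# Hypothetical counts of two disciplines over two subject headings.
field_a = [3, 1]
field_b = [1, 3]

# The same profiles after adding two headings that neither discipline uses.
field_a_padded = field_a + [0, 0]
field_b_padded = field_b + [0, 0]

cos_plain = cosine_similarity(field_a, field_b)
cos_padded = cosine_similarity(field_a_padded, field_b_padded)
r_plain = pearson_correlation(field_a, field_b)
r_padded = pearson_correlation(field_a_padded, field_b_padded)

# Assumed convention: disparity is the complement of similarity.
disparity = 1.0 - cos_plain

print(f"cosine:  {cos_plain:.3f} -> {cos_padded:.3f}")
print(f"pearson: {r_plain:.3f} -> {r_padded:.3f}")
print(f"disparity (1 - cosine): {disparity:.3f}")
```

In this toy case the cosine stays the same after padding, while the Pearson correlation moves from a strongly negative value to a positive one, even though the relationship between the two profiles has not changed.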

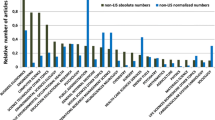

The NSF classification system is a two-level journal classification system consisting of 14 major disciplines and 144 subfields. It assigns each journal to exactly one field. In this study, the disparity measure was calculated at the level of the 14 major disciplines in SSH (i.e., Arts, Health, Humanities, Professional fields, Psychology, and Social sciences) and NSE (i.e., Biology, Chemistry, Engineering and Technology, Mathematics, Clinical medicine, Physics, Biomedical Research, and Earth and space science).

References

Adams, J., Jackson, L., & Marshall, S. (2007). Bibliometric analysis of interdisciplinary research. Report to the Higher Education Funding Council for England by Evidence Ltd.

Ahlgren, P., Jarneving, B., & Rousseau, R. (2003). Requirements for a cocitation similarity measure, with special reference to Pearson’s correlation coefficient. Journal of the American Society for Information Science and Technology, 54(6), 550–560. https://doi.org/10.1002/asi.10242

Boyack, K., & Klavans, R. (2014). Atypical combinations are confounded by disciplinary effects.

Bromham, L., Dinnage, R., & Hua, X. (2016). Interdisciplinary research has consistently lower funding success. Nature, 534(7609), 684–687.

Chan, L. M., & Hodges, T. (2007). Cataloging and classification: An introduction (3rd ed.). Scarecrow Press.

Chao, X.-Y. (2020, Nov. 20). NSFC launched the department of interdisciplinary research. Science and Technology Daily.

Chen, S., Arsenault, C., & Larivière, V. (2015). Are top-cited papers more interdisciplinary? Journal of Informetrics, 9(4), 1034–1046. https://doi.org/10.1016/j.joi.2015.09.003

Chen, S., Qiu, J., Arsenault, C., & Larivière, V. (2021). Exploring the interdisciplinarity patterns of highly cited papers. Journal of Informetrics, 15(1), 101–124.

Huang, Y., Glänzel, W., Thijs, B., Porter, A. L., & Zhang, L. (2021). The comparison of various similarity measurement approaches on interdisciplinary indicators. FEB Research Report MSI_2102, 1–24.

Julien, C.-A., Tirilly, P., Leide, J. E., & Guastavino, C. (2012). Constructing a true LCSH tree of a science and engineering collection. Journal of the American Society for Information Science and Technology, 63(12), 2405–2418.

Klavans, R., & Boyack, K. W. (2012, 4–6 Sept). Towards the development of an article-level indicator of conformity, innovation and deviation. Paper presented at the 18th International Conference on Science and Technology Indicators, Berlin, Germany.

Klavans, R., & Boyack, K. W. (2006). Identifying a better measure of relatedness for mapping science. Journal of the American Society for Information Science and Technology, 57(2), 251–263. https://doi.org/10.1002/asi.20274

Klavans, R., & Boyack, K. W. (2009). Toward a consensus map of science. Journal of the American Society for Information Science and Technology, 60(3), 455–476.

Larivière, V., & Gingras, Y. (2010). On the relationship between interdisciplinarity and scientific impact. Journal of the American Society for Information Science and Technology, 61(1), 126–131. https://doi.org/10.1002/asi.21226

Larivière, V., & Gingras, Y. (2014). Measuring interdisciplinarity. In B. Cronin & C. R. Sugimoto (Eds.), Beyond bibliometrics: harnessing multidimensional indicators of scholarly impact (pp. 187–200). MIT Press.

Larivière, V., Haustein, S., & Börner, K. (2015). Long-distance interdisciplinarity leads to higher scientific impact. PLoS ONE, 10(3), e0122565. https://doi.org/10.1371/journal.pone.0122565

Leahey, E., Beckman, C. M., & Stanko, T. L. (2017). Prominent but less productive: The impact of interdisciplinarity on scientists’ research. Administrative Science Quarterly, 62(1), 105–139.

Levitt, J. M., & Thelwall, M. (2008). Is multidisciplinary research more highly cited? A macrolevel study. Journal of the American Society for Information Science and Technology, 59(12), 1973–1984. https://doi.org/10.1002/asi.20914

Leydesdorff, L., Comins, J. A., Sorensen, A. A., Bornmann, L., & Hellsten, I. (2016). Cited references and Medical Subject Headings (MeSH) as two different knowledge representations: Clustering and mappings at the paper level. Scientometrics, 109(3), 2077–2091. https://doi.org/10.1007/s11192-016-2119-7

Leydesdorff, L., & Ivanova, I. (2021). The measurement of “interdisciplinarity” and “synergy” in scientific and extra-scientific collaborations. Journal of the Association for Information Science and Technology, 72(4), 387–402. https://doi.org/10.1002/asi.24416

Library of Congress. (2016). Subject heading manual: H 0180 Assigning and constructing subject headings. Retrieved from http://www.loc.gov/aba/publications/FreeSHM/freeshm.html

MacRoberts, M. H., & MacRoberts, B. R. (1989). Problems of citation analysis: A critical review. Journal of the American Society for Information Science, 40(5), 342–349.

Morillo, F., Bordons, M., & Gómez, I. (2001). An approach to interdisciplinarity through bibliometric indicators. Scientometrics, 51(1), 203–222.

Morillo, F., Bordons, M., & Gómez, I. (2003). Interdisciplinarity in science: A tentative typology of disciplines and research areas. Journal of the American Society for Information Science and Technology, 54(13), 1237–1249. https://doi.org/10.1002/asi.10326

National Science Board. (2018). Science and Engineering Indicators 2018 (NSB-2018-1). Alexandria, VA: National Science Foundation. Retrieved from https://www.nsf.gov/statistics/indicators/

Okubo, Y. (1997). Bibliometric indicators and analysis of research systems methods and examples. OECD Publishing.

Porter, A. L., & Chubin, D. E. (1985). An indicator of cross-disciplinary research. Scientometrics, 8(3–4), 161–176. https://doi.org/10.1007/BF02016934

Porter, A. L., & Rafols, I. (2009). Is science becoming more interdisciplinary? Measuring and mapping six research fields over time. Scientometrics, 81(3), 719–745.

Rafols, I., & Meyer, M. (2010). Diversity and network coherence as indicators of interdisciplinarity: Case studies in bionanoscience. Scientometrics, 82(2), 263–287. https://doi.org/10.1007/s11192-009-0041-y

Rinia, E. J., van Leeuwen, T., & van Raan, A. J. (2002). Impact measures of interdisciplinary research in physics. Scientometrics, 53(2), 241–248. https://doi.org/10.1023/A:1014856625623

Robare, L., El-Hoshy, L., Trumble, B., & Hixson, C. G. (Eds.). (2011). Basic subject cataloging using LCSH - Instructor’s manual. ALCTS/SAC-PCC/SCT.

Shu, F. (2021). Limitations of citation analysis on the measurement of research impact: A summary. Data Science and Informetrics, 1(3), 37–49.

Shu, F., Dinneen, J. D., Asadi, B., & Julien, C.-A. (2017). Mapping science using Library of Congress Subject Headings. Journal of Informetrics, 11(4), 1080–1094.

Shu, F., Julien, C.-A., Zhang, L., Qiu, J., Zhang, J., & Larivière, V. (2019). Comparing journal and paper level classifications of science. Journal of Informetrics, 13(1), 202–225.

Steele, T. W., & Stier, J. C. (2000). The impact of interdisciplinary research in the environmental sciences: A forestry case study. Journal of the American Society for Information Science, 51(5), 476–484. https://doi.org/10.1002/(SICI)1097-4571(2000)51:5%3c476::AID-ASI8%3e3.0.CO;2-G

Stirling, A. (2007). A general framework for analysing diversity in science, technology and society. Journal of the Royal Society, Interface, 4(15), 707–719. https://doi.org/10.1098/rsif.2007.0213

Uzzi, B., Mukherjee, S., Stringer, M., & Jones, B. (2013). Atypical combinations and scientific impact. Science, 342(6157), 468–472.

Wang, J., Thijs, B., & Glänzel, W. (2015). Interdisciplinarity and impact: Distinct effects of variety, balance, and disparity. PLoS ONE, 10(5), e0127298. https://doi.org/10.1371/journal.pone.0127298

Wang, J., Veugelers, R., & Stephan, P. (2017). Bias against novelty in science: A cautionary tale for users of bibliometric indicators. Research Policy, 46(8), 1416–1436. https://doi.org/10.1016/j.respol.2017.06.006

Yegros-Yegros, A., Rafols, I., & D’Este, P. (2015). Does interdisciplinary research lead to higher citation impact? The different effect of proximal and distal interdisciplinarity. PLoS ONE, 10(8), e0135095.

Yi, K., & Chan, L. M. (2010). Revisiting the syntactical and structural analysis of Library of Congress Subject Headings for the digital environment. Journal of the American Society for Information Science and Technology, 61(4), 677–687.

Zhang, L., Rousseau, R., & Glänzel, W. (2016). Diversity of references as an indicator of the interdisciplinarity of journals: Taking similarity between subject fields into account. Journal of the Association for Information Science and Technology, 67(5), 1257–1265.

Zhang, L., Sun, B., Jiang, L., & Huang, Y. (2021). On the relationship between interdisciplinarity and impact: Distinct effects on academic and broader impact. Research Evaluation, 30(3), 256–268.

Acknowledgements

This work is supported by the Zhejiang Provincial Philosophy and Social Sciences Planning Project (22NDJC085YB). We would also like to thank Philippe Mongeon for his assistance with data analysis and his helpful comments.

Cite this article

Shu, F., Dinneen, J.D. & Chen, S. Measuring the disparity among scientific disciplines using Library of Congress Subject Headings. Scientometrics 127, 3613–3628 (2022). https://doi.org/10.1007/s11192-022-04387-6

DOI: https://doi.org/10.1007/s11192-022-04387-6