Abstract

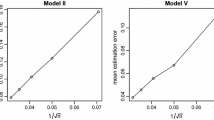

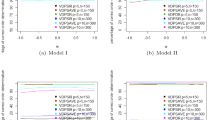

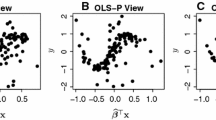

To reduce the dimension of the predictors without losing information about the regression, we develop a sufficient dimension reduction method, which we term cumulative Hessian directions. Unlike many existing sufficient dimension reduction methods, our estimator requires no tuning parameters, such as the number of slices in slicing estimation or the bandwidth in kernel smoothing. We also investigate the asymptotic properties of our proposal when the dimension of the predictors diverges. Simulations and an application are presented to demonstrate the efficacy of our proposal and to compare it with existing methods.

Additional information

The second author was supported by an NSSF grant from the National Social Science Foundation of China (No. 08CTJ001). The third author was supported by a grant from the Research Grants Council of Hong Kong (HKBU2034/09P) and an FRG grant from Hong Kong Baptist University, Hong Kong. The authors are grateful to the editor, an associate editor, and two anonymous referees for their generous help and their constructive comments and suggestions, which greatly improved our earlier draft.

About this article

Cite this article

Zhang, LM., Zhu, LP. & Zhu, LX. Sufficient dimension reduction in regressions through cumulative Hessian directions. Stat Comput 21, 325–334 (2011). https://doi.org/10.1007/s11222-010-9172-5

DOI: https://doi.org/10.1007/s11222-010-9172-5