Abstract

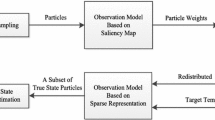

Vision-based aircraft tracking has been considered for emerging real-world applications such as collision avoidance, air traffic surveillance, and target tracking for military use. However, conventional tracking methods often fail to follow aircraft because of 1) variations in object shape, 2) a continuously varying background, and 3) unpredictable flight motion. In this paper, we address these problems of vision-based aircraft tracking. To this end, we propose a principled way to improve a color-based tracking algorithm by combining it with a biologically inspired saliency feature. More specifically, we integrate color distributions into particle filtering, a Monte Carlo method for general nonlinear filtering problems. To cope with varying appearance, which usually arises from changes in illumination and pose, we update the target color model during tracking. Furthermore, we adopt a structure-tensor-based saliency algorithm to incorporate saliency features into the particle filter framework, so that appropriate particle weights are assigned robustly even against complex backgrounds. The rationale behind our approach is that color and saliency information are complementary, especially when tracking aircraft in a harsh environment. Tests on real flight sequences show that the proposed system yields convincing tracking results under both background variations and sudden changes in target motion.
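To make the weighting idea in the abstract concrete, the following is a minimal sketch of how a color likelihood and a saliency score might be fused into particle weights. It is not the authors' implementation. The Bhattacharyya-based color likelihood and the blended model update follow the general style of adaptive color-based particle filters; the multiplicative fusion rule, the function names, and the parameters `sigma`, `alpha`, and `eps` are assumptions made for illustration, and the saliency values are taken as given rather than computed from a structure tensor.

```python
import math
import random


def bhattacharyya(p, q):
    """Bhattacharyya coefficient between two normalized histograms."""
    return sum(math.sqrt(a * b) for a, b in zip(p, q))


def color_likelihood(candidate_hist, target_hist, sigma=0.2):
    """Gaussian likelihood on the squared Bhattacharyya distance 1 - rho.

    sigma is an assumed tuning parameter, not a value from the paper.
    """
    rho = min(1.0, bhattacharyya(candidate_hist, target_hist))
    return math.exp(-(1.0 - rho) / (2.0 * sigma ** 2))


def fuse_weights(color_liks, saliencies, eps=1e-12):
    """Assumed fusion rule: color likelihood times saliency, then normalize."""
    raw = [c * s + eps for c, s in zip(color_liks, saliencies)]
    total = sum(raw)
    return [r / total for r in raw]


def systematic_resample(weights, rng=random):
    """Draw particle indices in proportion to weights (systematic resampling)."""
    n = len(weights)
    start = rng.random() / n
    indices, cumulative, i = [], weights[0], 0
    for k in range(n):
        u = start + k / n
        while u > cumulative and i < n - 1:
            i += 1
            cumulative += weights[i]
        indices.append(i)
    return indices


def update_target_model(target_hist, estimate_hist, alpha=0.1):
    """Blend the target color model toward the histogram at the estimate."""
    return [(1.0 - alpha) * q + alpha * p
            for q, p in zip(target_hist, estimate_hist)]


# Toy usage: two particles, 3-bin color histograms, hypothetical saliency scores.
target = [0.7, 0.2, 0.1]
candidates = [[0.7, 0.2, 0.1], [0.1, 0.2, 0.7]]
saliency = [0.9, 0.3]
weights = fuse_weights([color_likelihood(c, target) for c in candidates], saliency)
survivors = systematic_resample(weights)
target = update_target_model(target, candidates[0])
```

In this toy run the first particle matches the target histogram and lies in a more salient region, so it receives the larger weight. A product rule is only one possible way to combine the two cues; it has the property that a particle must score reasonably on both color and saliency to keep a high weight.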

Cite this article

Yoo, S., Kim, W. & Kim, C. Saliency Combined Particle Filtering for Aircraft Tracking. J Sign Process Syst 76, 19–31 (2014). https://doi.org/10.1007/s11265-013-0803-x

DOI: https://doi.org/10.1007/s11265-013-0803-x