Abstract

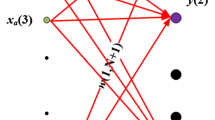

This paper presents a fast adaptive iterative algorithm for solving linearly separable classification problems in \( \mathbb{R}^n \). In each iteration, a subset of the sampling data (n points, where n is the number of features) is adaptively chosen, and a hyperplane is constructed that separates the chosen n points with a margin ϵ and best classifies the remaining points. The classification problem is formulated and the details of the algorithm are presented. The algorithm is then extended to quadratically separable classification problems. The basic idea is to map the physical space to a larger (higher-dimensional) space in which the problem becomes linearly separable. Numerical illustrations show that a few iteration steps suffice for convergence when the classes are linearly separable. For data that are not linearly separable, given a specified maximum number of iteration steps, the algorithm returns the hyperplane, among those generated during these steps, that misclassifies the fewest points. Comparisons with other machine learning algorithms on practical and benchmark datasets are also presented to demonstrate the performance of the proposed algorithm.
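
The abstract does not specify the exact mapping or selection rule, so the following is only a minimal sketch, assuming a full second-degree monomial lift (every x_i plus every product x_i x_j with i ≤ j, giving n + n(n+1)/2 coordinates). It illustrates two ideas from the abstract: a class boundary that is quadratic in the original space becomes a hyperplane in the lifted space, and the returned hyperplane is the one that misclassifies the fewest points. The helpers `quadratic_map`, `misclassified`, and `best_hyperplane` and the toy data are hypothetical; the adaptive n-point hyperplane construction itself is not reproduced here.

```python
import numpy as np

def quadratic_map(X):
    """Hypothetical lift: map each row x in R^n to [x_1..x_n, x_i*x_j for i <= j]."""
    X = np.asarray(X, dtype=float)
    n = X.shape[1]
    i, j = np.triu_indices(n)          # all index pairs with i <= j
    return np.hstack([X, X[:, i] * X[:, j]])

def misclassified(w, b, X, y):
    """Count points with y_k * (w . x_k + b) <= 0, for labels y_k in {-1, +1}."""
    return int(np.sum(y * (X @ w + b) <= 0))

def best_hyperplane(candidates, X, y):
    """Among candidate (w, b) pairs, return the one with the fewest misclassified points."""
    return min(candidates, key=lambda wb: misclassified(wb[0], wb[1], X, y))

# Toy data (hypothetical): +1 inside the unit circle, -1 on a circle of radius 2.
# (0, 0) lies in the convex hull of the -1 points, so no line in R^2 separates the classes.
X = np.array([[0, 0], [0.5, 0], [0, -0.5],
              [2, 0], [-2, 0], [0, 2], [0, -2]], dtype=float)
y = np.array([1, 1, 1, -1, -1, -1, -1])

Z = quadratic_map(X)                   # columns: x1, x2, x1^2, x1*x2, x2^2

# In the lifted space, the circle 1 - x1^2 - x2^2 = 0 is a hyperplane.
w_circle, b_circle = np.array([0, 0, -1, 0, -1], dtype=float), 1.0
w_linear, b_linear = np.array([1, 0, 0, 0, 0], dtype=float), 0.0   # uses x1 only

print(misclassified(w_circle, b_circle, Z, y))   # 0
print(misclassified(w_linear, b_linear, Z, y))   # 5
best_w, best_b = best_hyperplane([(w_linear, b_linear), (w_circle, b_circle)], Z, y)
```

Counting a point with zero score as misclassified is a design choice in this sketch, so that a hyperplane passing through a data point is never credited with classifying it.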

Cite this article

Soliman, M.AK.M.A., Abo-Bakr, R.M. Linearly and Quadratically Separable Classifiers Using Adaptive Approach. J. Comput. Sci. Technol. 26, 908–918 (2011). https://doi.org/10.1007/s11390-011-0188-x

DOI: https://doi.org/10.1007/s11390-011-0188-x