Abstract

The “memory wall” problem, also known as the von Neumann bottleneck, limits the efficiency of conventional computer architectures, which must move data from memory to the CPU for computation; as a result, these architectures cannot meet the demands of emerging memory-intensive applications. Processing-in-memory (PIM) has been proposed as a promising way to break the von Neumann bottleneck by minimizing data movement across the memory hierarchy. This study surveys prior work on architecture-level DRAM PIM technologies and their implementation. The key challenges of PIM and the mainstream solutions to them are summarized. The relevant limitations of PIM simulation are discussed, along with four conventional PIM simulators. Finally, research directions and perspectives for future development are proposed.

References

Mittal S. A survey of ReRAM-based architectures for processing-in-memory and neural networks. Mach Learn Knowl Extr, 2018, 1: 75–114

Chen L R, Li J W, Chen Y R, et al. Accelerator-friendly neural-network training: learning variations and defects in RRAM crossbar. In: Proceedings of Design, Automation & Test in Europe Conference & Exhibition (DATE), Lausanne, 2017. 19–24

Chen W H, Li K X, Lin W Y, et al. A 65 nm 1 Mb nonvolatile computing-in-memory ReRAM macro with sub-16 ns multiply-and-accumulate for binary DNN AI edge processors. In: Proceedings of IEEE International Solid-State Circuits Conference, San Francisco, 2018. 494–496

Cai F, Correll J M, Lee S H, et al. A fully integrated reprogrammable memristor-CMOS system for efficient multiply-accumulate operations. Nat Electron, 2019, 2: 290–299

Yao P, Wu H, Gao B, et al. Fully hardware-implemented memristor convolutional neural network. Nature, 2020, 577: 641–646

Burr G W, Shelby R M, Sidler S, et al. Experimental demonstration and tolerancing of a large-scale neural network (165000 synapses) using phase-change memory as the synaptic weight element. In: Proceedings of IEEE International Electron Devices Meeting, 2015. 3498–3507

Guo X, Bayat F M, Bavandpour M, et al. Fast, energy-efficient, robust, and reproducible mixed-signal neuromorphic classifier based on embedded NOR flash memory technology. In: Proceedings of IEEE International Electron Devices Meeting (IEDM), San Francisco, 2017. 1–4

Jiang Z, Yin S, Seo J S, et al. XNOR-SRAM: in-bitcell computing SRAM Macro based on resistive computing mechanism. In: Proceedings of the 2019 on Great Lakes Symposium on VLSI, 2019. 417–422

Valavi H, Ramadge P J, Nestler E, et al. A 64-Tile 2.4-Mb in-memory-computing CNN accelerator employing charge-domain compute. IEEE J Solid-State Circ, 2019, 54: 1789–1799

Seshadri V, Lee D, Mullins T, et al. Ambit: in-memory accelerator for bulk bitwise operations using commodity DRAM technology. In: Proceedings of the 50th Annual IEEE/ACM International Symposium on Microarchitecture (MICRO), Boston, 2017. 273–287

Li S, Niu D, Malladi K T, et al. DRISA: a DRAM-based reconfigurable in-situ accelerator. In: Proceedings of the 50th Annual IEEE/ACM International Symposium on Microarchitecture (MICRO), Boston, 2017. 288–301

Angizi S, Fan D. ReDRAM: a reconfigurable processing-in-DRAM platform for accelerating bulk bit-wise operations. In: Proceedings of IEEE/ACM International Conference on Computer-Aided Design (ICCAD), Westminster, 2019. 1–8

Kautz W H. Cellular logic-in-memory arrays. IEEE Trans Comput, 1969, 18: 719–727

Stone H S. A logic-in-memory computer. IEEE Trans Comput, 1970, 19: 73–78

Singh G, Chelini L, Corda S, et al. Near-memory computing: past, present, and future. Microprocessors Microsyst, 2019, 71: 102868

Jeddeloh J, Keeth B. Hybrid memory cube new DRAM architecture increases density and performance. In: Proceedings of Symposium on VLSI Technology (VLSIT), 2012

Dong U L, Kyung W K, Kwan W K, et al. 25.2 A 1.2 V 8 Gb 8-channel 128 GB/s high-bandwidth memory (HBM) stacked DRAM with effective microbump I/O test methods using 29 nm process and TSV. In: Proceedings of IEEE International Solid-State Circuits Conference Digest of Technical Papers (ISSCC), San Francisco, 2014. 432–433

Devaux F. The true processing in memory accelerator. In: Proceedings of IEEE Hot Chips 31 Symposium (HCS), Cupertino, 2019. 1–24

Consortium. Hybrid memory cube specification 2.1, 2015

Zhuo Y, Wang C, Zhang M, et al. GraphQ: scalable PIM-based graph processing. In: Proceedings of the 52nd Annual IEEE/ACM International Symposium, 2019. 712–725

He M, Song C, Kim I, et al. Newton: a DRAM-maker’s accelerator-in-memory (AiM) architecture for machine learning. In: Proceedings of the 53rd Annual IEEE/ACM International Symposium on Microarchitecture (MICRO), Athens, 2020. 372–385

Boroumand A, Zheng H, Mutlu O, et al. CoNDA: efficient cache coherence support for near-data accelerators. In: Proceedings of ACM/IEEE 46th Annual International Symposium on Computer Architecture (ISCA), Phoenix, 2019. 629–642

Ahn J, Yoo S, Mutlu O, et al. PIM-enabled instructions: a low-overhead, locality-aware processing-in-memory architecture. In: Proceedings of ACM/IEEE 42nd Annual International Symposium on Computer Architecture (ISCA), Portland, 2015. 336–348

Cheng L, Muralimanohar N, Ramani K, et al. Interconnect-aware coherence protocols for chip multiprocessors. In: Proceedings of the 33rd International Symposium on Computer Architecture (ISCA), Boston, 2006. 339–351

Baer J L, Wang W H. On the inclusion properties for multi-level cache hierarchies. In: Proceedings of the 15th Annual International Symposium on Computer Architecture, Honolulu, 1988. 73–80

Imani M, Gupta S, Rosing T. Ultra-efficient processing in-memory for data intensive applications. In: Proceedings of the 54th ACM/EDAC/IEEE Design Automation Conference (DAC), Austin, 2017. 1–6

Azarkhish E, Rossi D, Loi I, et al. Design and evaluation of a processing-in-memory architecture for the smart memory cube. In: Proceedings of International Conference on Architecture of Computing Systems. Berlin: Springer, 2016

Farmahini-Farahani A, Ahn J H, Morrow K, et al. NDA: near-DRAM acceleration architecture leveraging commodity DRAM devices and standard memory modules. In: Proceedings of IEEE 21st International Symposium on High Performance Computer Architecture (HPCA), Burlingame, 2015. 283–295

Boroumand A, Ghose S, Patel M, et al. LazyPIM: an efficient cache coherence mechanism for processing-in-memory. IEEE Comput Arch Lett, 2017, 16: 46–50

Xu S, Chen X, Wang Y, et al. CuckooPIM: an efficient and less-blocking coherence mechanism for processing-in-memory systems. In: Proceedings of the 24th Asia and South Pacific Design Automation Conference (ASPDAC’19). New York: Association for Computing Machinery, 2019. 140–145

Xu S, Wang Y, Han Y, et al. PIMCH: cooperative memory prefetching in processing-in-memory architecture. In: Proceedings of the 23rd Asia and South Pacific Design Automation Conference (ASP-DAC), Jeju, 2018. 209–214

Nesbit K J, Smith J E. Data cache prefetching using a global history buffer. IEEE Micro, 2005, 25: 90–97

Ishii Y, Inaba M, Hiraki K. Access map pattern matching for high performance data cache prefetch. J Instruction-Level Parallelism, 2011, 13: 499–500

Ahn J, Hong S, Yoo S, et al. A scalable processing-in-memory accelerator for parallel graph processing. In: Proceedings of 2015 ACM/IEEE 42nd Annual International Symposium on Computer Architecture (ISCA), Portland, 2015. 105–117

Xu S, Chen X, Han Y, et al. TUPIM: a transparent and universal processing-in-memory architecture for unmodified binaries. In: Proceedings of the 2020 on Great Lakes Symposium on VLSI (GLSVLSI’20). New York: Association for Computing Machinery, 2020. 199–204

Oliveira G F, Santos P C, Alves M A Z, et al. A generic processing in memory cycle accurate simulator under hybrid memory cube architecture. In: Proceedings of 2017 International Conference on Embedded Computer Systems: Architectures, Modeling, and Simulation (SAMOS), Pythagorion, 2017. 54–61

Kim Y, Yang W, Mutlu O. Ramulator: a fast and extensible DRAM simulator. IEEE Comput Arch Lett, 2016, 15: 45–49

Singh G, Gomez-Luna J, Mariani G, et al. NAPEL: near-memory computing application performance prediction via ensemble learning. In: Proceedings of the 56th ACM/IEEE Design Automation Conference (DAC), Las Vegas, 2019. 1–6

Xu S, Chen X, Wang Y, et al. PIMSim: a flexible and detailed processing-in-memory simulator. IEEE Comput Arch Lett, 2019, 18: 6–9

Binkert N, Beckmann B, Black G, et al. The GEM5 simulator. SIGARCH Comput Archit News, 2011, 39: 1–7

Sanchez D, Kozyrakis C. ZSim: fast and accurate microarchitectural simulation of thousand-core systems. SIGARCH Comput Archit News, 2013, 41: 475–486

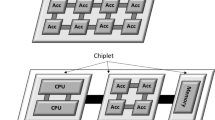

Coudrain P, Charbonnier J, Garnier A, et al. Active interposer technology for chiplet-based advanced 3D system architectures. In: Proceedings of 2019 IEEE 69th Electronic Components and Technology Conference (ECTC), Las Vegas, 2019. 569–578

Shen X, Xia Z, Yang T, et al. Hydrogen source and diffusion path for Poly-Si channel passivation in Xtacking 3D NAND flash memory. IEEE J Electron Dev Soc, 2020, 8: 1021–1024

Acknowledgements

This work was supported by the National Key R&D Program of China (Grant No. 2018YFA0701500), Zhejiang Lab (Grant No. 2019KC0AB010), the Key Research Program of Frontier Sciences, CAS (Grant No. ZDBS-LY-JSC012), the Strategic Priority Research Program of CAS (Grant No. XDB44000000), the Youth Innovation Promotion Association CAS, the Beijing Academy of Artificial Intelligence (BAAI), the Anhui Natural Science Foundation (Grant No. 2008085QF330), and the Research Program of Anhui Normal University (Grant No. 751968).

Cite this article

Zou, X., Xu, S., Chen, X. et al. Breaking the von Neumann bottleneck: architecture-level processing-in-memory technology. Sci. China Inf. Sci. 64, 160404 (2021). https://doi.org/10.1007/s11432-020-3227-1

