Abstract

Purpose

Machine learning (ML) models in medical imaging (MI) can be of great value in computer-aided diagnostic systems, but little attention is given to the confidence (alternatively, uncertainty) of such models, which may have significant clinical implications. This paper applied, validated, and explored a technique for assessing uncertainty in convolutional neural networks (CNNs) in the context of MI.

Materials and methods

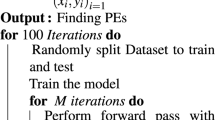

We used two publicly accessible imaging datasets, a chest x-ray dataset (pneumonia vs. control) and a skin cancer imaging dataset (malignant vs. benign), to explore the proposed measure of uncertainty through experiments that varied training set class imbalance and sample size, and through experiments with images close to the classification boundary. We further tested our hypothesis by examining the relationship between the uncertainty metric and other performance metrics, and by cross-checking CNN predictions and confidence scores with an expert radiologist (available in the Supplementary Information). Additionally, bounds were derived on the uncertainty metric, and recommendations for interpretability were made.
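The class-imbalance and sample-size experiments can be pictured as drawing training subsets of a fixed total size with a chosen share of positive cases. The sketch below shows one possible way to build such subsets. The function name `make_imbalanced_subset`, its parameters, and the label encoding (1 for pneumonia or malignant, 0 for control or benign) are assumptions made for illustration and are not taken from the paper.

```python
import random

def make_imbalanced_subset(labels, total_size, positive_fraction, seed=0):
    """Return indices of a random training subset with the requested class mix.

    labels: sequence of 0/1 class labels (hypothetical encoding).
    total_size: number of samples to draw.
    positive_fraction: share of the subset that should belong to class 1.
    """
    if not 0.0 <= positive_fraction <= 1.0:
        raise ValueError("positive_fraction must lie in [0, 1]")
    rng = random.Random(seed)
    pos = [i for i, y in enumerate(labels) if y == 1]
    neg = [i for i, y in enumerate(labels) if y == 0]
    n_pos = round(total_size * positive_fraction)
    n_neg = total_size - n_pos
    if n_pos > len(pos) or n_neg > len(neg):
        raise ValueError("not enough samples in one class for this mix")
    # Sample each class without replacement, then shuffle the combined indices.
    chosen = rng.sample(pos, n_pos) + rng.sample(neg, n_neg)
    rng.shuffle(chosen)
    return chosen

# Toy labels standing in for a real dataset: 600 positives, 400 negatives.
labels = [1] * 600 + [0] * 400
subset = make_imbalanced_subset(labels, total_size=200, positive_fraction=0.25)
n_positive = sum(labels[i] for i in subset)
print(len(subset), n_positive)
```

Sweeping `positive_fraction` and `total_size` over a grid would give one training subset per imbalance and sample-size combination, each of which could then be used to train a model and record its uncertainty on a fixed test set.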

Results

With respect to training set class imbalance for the pneumonia MI dataset, the uncertainty metric was minimized when both classes were nearly equal in size (regardless of training set size) and was approximately 17% smaller than the maximum uncertainty resulting from greater imbalance. We found that less-obvious test images (those closer to the classification boundary) produced higher classification uncertainty, about 10–15 times greater than that of images further from the boundary. Relevant MI performance metrics such as accuracy, sensitivity, and specificity showed seemingly negative linear correlations with the uncertainty metric, though none were statistically significant (\(p \ge 0.05\)). The expert radiologist and CNN agreed on a small sample of test images, though this finding is only preliminary.
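The abstract does not give the formula for the uncertainty metric, so the following sketch uses a stand-in that is not the authors' metric: the binary predictive entropy of a classifier's output probability. It only illustrates the qualitative pattern that predictions near the classification boundary (probability close to 0.5) carry more uncertainty than predictions far from it. The function name `binary_entropy` and the example probabilities are hypothetical.

```python
import math

def binary_entropy(p):
    """Entropy in bits of a binary prediction with positive-class probability p.

    This is an illustrative stand-in for an uncertainty score, not the
    metric proposed in the paper.
    """
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must lie in [0, 1]")
    if p == 0.0 or p == 1.0:
        return 0.0  # a fully certain prediction has zero entropy
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))

# Hypothetical output probabilities for two test images.
examples = {"far from boundary": 0.99, "near boundary": 0.55}
for name, p in examples.items():
    print(f"{name}: p={p:.2f}, entropy={binary_entropy(p):.3f} bits")
```

This stand-in is bounded between 0 bits (a fully certain prediction) and 1 bit (a prediction exactly on the boundary), which makes scores comparable across images. The bounds derived in the paper apply to its own metric and may differ.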

Conclusions

This paper demonstrated the importance of reporting uncertainty alongside predictions in medical imaging. The results show considerable potential for automatically assessing classifier reliability on each prediction with the proposed uncertainty metric.

Funding

No funds, grants, or other support was received.

Ethics declarations

Conflict of interest

Dr. Bilbily is an officer of, and holds shares in, 16 Bit Inc, a medical AI startup company founded in 2016. Dr. Levman is the founder of Time Will Tell Technologies, an AI-focused technology startup company founded in 2021.

Ethical standards

This article does not contain any studies with human participants or animals performed by any of the authors.

Human and animal rights

This article does not contain any studies with human participants or animals performed by any of the authors.

Informed consent

This article does not contain patient data.

Supplementary Information

Below is the link to the electronic supplementary material.

11548_2022_2578_MOESM1_ESM.png

Fig. S1 Ten test-set images (the 5 most confidently and the 5 least confidently classified by the CNN) with CNN confidence scores (between 0 and 1, in orange) alongside radiologist confidence scores (in blue) for a machine-human comparison (PNG 15 kb)

About this article

Cite this article

Valen, J., Balki, I., Mendez, M. et al. Quantifying uncertainty in machine learning classifiers for medical imaging. Int J CARS 17, 711–718 (2022). https://doi.org/10.1007/s11548-022-02578-3

DOI: https://doi.org/10.1007/s11548-022-02578-3