Abstract

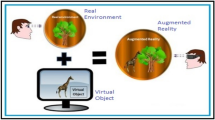

This paper studies the application of real-time depth imaging for augmented reality to 3D human motion recognition. The accuracy and real-time performance of sensor-based 3D human reconstruction are affected by visual characteristics and illumination changes: when features are hard to extract and cannot be tracked, the 3D reconstruction of the human body fails. To address this, a sensor-based visual-inertial initialization algorithm is studied, which integrates sensor data over the time interval between two image frames to provide accurate initial values for vision-based motion estimation. This improves the accuracy of the computed pose and, in turn, of the 3D reconstruction system. In addition, based on the relationship between the depth image and both distance and reflectivity, a model for correcting the distance error and reflectivity error of the depth image is established, which improves the accuracy of the depth image and, ultimately, of the 3D reconstruction of the human body.
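The abstract does not state which integration scheme the initialization uses. As a minimal sketch only, assuming gyroscope samples arrive at a fixed rate between two image frames, the relative rotation over that interval could be accumulated as below and handed to a vision-based estimator as an initial guess. The function names `delta_quat` and `integrate_gyro`, the `(w, x, y, z)` quaternion layout, and the gyroscope-only setting are illustrative assumptions, not the authors' method.

```python
import math

def quat_multiply(a, b):
    """Hamilton product of two quaternions given as (w, x, y, z)."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )

def delta_quat(omega, dt):
    """Rotation produced by a constant angular velocity omega (rad/s) over dt seconds."""
    wx, wy, wz = omega
    rate = math.sqrt(wx * wx + wy * wy + wz * wz)
    angle = rate * dt
    if angle < 1e-12:
        # Negligible rotation: return the identity quaternion.
        return (1.0, 0.0, 0.0, 0.0)
    s = math.sin(angle / 2.0) / rate
    return (math.cos(angle / 2.0), wx * s, wy * s, wz * s)

def integrate_gyro(samples, dt):
    """Accumulate the relative rotation between two frames.

    samples: gyroscope readings (wx, wy, wz) in rad/s recorded between the
             frames (hypothetical input format).
    dt:      sampling period in seconds, assumed constant.
    """
    q = (1.0, 0.0, 0.0, 0.0)
    for omega in samples:
        q = quat_multiply(q, delta_quat(omega, dt))
    # Renormalize to limit floating-point drift.
    norm = math.sqrt(sum(c * c for c in q))
    return tuple(c / norm for c in q)
```

For example, 100 samples of a constant yaw rate of π/2 rad/s at a 10 ms period yield a quaternion close to a 90° rotation about the z axis. A full visual-inertial initialization would also handle accelerometer data, sensor biases, and gravity alignment, which this sketch leaves out.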

Acknowledgements

This work was supported by the Sichuan Education Informatization Application and Development Research Center under the project "Research on the innovation of AR technology in the field of basic education" (no. JYXX20-034).

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

About this article

Cite this article

Huang, R., Sun, M. Network algorithm real-time depth image 3D human recognition for augmented reality. J Real-Time Image Proc 18, 307–319 (2021). https://doi.org/10.1007/s11554-020-01045-z

DOI: https://doi.org/10.1007/s11554-020-01045-z