Abstract

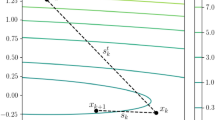

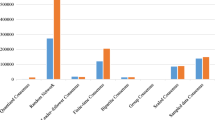

This paper considers a distributed optimization problem over a time-varying multi-agent network, in which each agent has local access only to its own convex objective function and the agents cooperatively minimize the sum of these functions over the network. Based on the mirror descent method, we develop a distributed algorithm that uses subgradient information corrupted by stochastic errors. We first analyze the effect of the stochastic errors on the convergence of the algorithm and then provide an explicit bound on the convergence rate as a function of the error bound and the number of iterations. Our results show that, when the subgradient evaluations contain stochastic errors, the algorithm asymptotically converges to the optimal value of the problem within an error level that depends on the error bound. The proposed algorithm can be viewed as a generalization of distributed subgradient projection methods, since it uses the more general Bregman divergence in place of the squared Euclidean distance. Finally, simulation results on a regularized hinge regression problem illustrate the effectiveness of the algorithm.
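The abstract describes the method only at a high level, so the following is a minimal sketch under our own assumptions, not the authors' algorithm. We assume the common consensus-then-mirror-step form: at each iteration every agent mixes its neighbours' iterates with a doubly stochastic weight matrix, then takes a Bregman-proximal step along a noisy subgradient. We also assume a diminishing step size α0/√(k+1), zero-mean Gaussian subgradient errors, a time-varying network modelled by cycling two matrices that are each disconnected but jointly connected, and toy linear objectives on the probability simplex instead of the paper's regularized hinge regression. All names (`distributed_smd`, `entropic_step`, `euclidean_step`, `project_simplex`) and parameter values are hypothetical. Swapping the entropic (KL) step for the Euclidean one turns the same loop into a distributed projected subgradient method, which is the sense in which mirror descent generalizes it.

```python
import numpy as np


def project_simplex(v):
    """Euclidean projection of v onto the probability simplex (sort-based method)."""
    u = np.sort(v)[::-1]
    css = np.cumsum(u)
    k = np.arange(1, v.size + 1)
    rho = np.nonzero(u * k > css - 1.0)[0][-1]
    theta = (css[rho] - 1.0) / (rho + 1)
    return np.maximum(v - theta, 0.0)


def euclidean_step(y, g, alpha):
    """Bregman step with the squared Euclidean distance: a projected subgradient step."""
    return project_simplex(y - alpha * g)


def entropic_step(y, g, alpha):
    """Bregman step with the negative-entropy mirror map (divergence = KL).

    argmin over the simplex of alpha*<g, x> + KL(x || y) has the closed form
    x proportional to y * exp(-alpha * g), so no explicit projection is needed.
    """
    z = np.log(np.clip(y, 1e-300, None)) - alpha * g
    z -= z.max()  # shift before exponentiating to avoid overflow
    x = np.exp(z)
    return x / x.sum()


def distributed_smd(costs, mixing, mirror_step, iters=2000, alpha0=0.5,
                    noise_std=0.1, seed=0):
    """Toy distributed stochastic mirror descent (hypothetical sketch).

    costs[i] defines agent i's private objective f_i(x) = <costs[i], x> on the simplex.
    mixing is a list of doubly stochastic matrices used cyclically (time-varying graph).
    Returns each agent's running (ergodic) average of its iterates.
    """
    rng = np.random.default_rng(seed)
    n, d = costs.shape
    x = np.full((n, d), 1.0 / d)  # all agents start at the simplex centre
    avg = np.zeros_like(x)
    for k in range(iters):
        A = mixing[k % len(mixing)]
        y = A @ x  # consensus: each agent mixes its neighbours' iterates
        alpha = alpha0 / np.sqrt(k + 1)  # diminishing step size (assumed)
        # noisy subgradient: exact gradient of f_i plus zero-mean Gaussian error
        g = costs + noise_std * rng.standard_normal((n, d))
        x = np.array([mirror_step(y[i], g[i], alpha) for i in range(n)])
        avg += (x - avg) / (k + 1)  # running average of the iterates
    return avg


# Two pairings of 4 agents: each graph alone is disconnected, their union is a ring.
W0 = np.array([[.5, .5, 0, 0], [.5, .5, 0, 0], [0, 0, .5, .5], [0, 0, .5, .5]])
W1 = np.array([[.5, 0, 0, .5], [0, .5, .5, 0], [0, .5, .5, 0], [.5, 0, 0, .5]])
costs = np.array([[1.0, 2.0, 0.5],
                  [0.2, 1.0, 1.5],
                  [0.8, 0.1, 1.0],
                  [1.0, 1.5, 0.4]])
total = costs.sum(axis=0)  # coefficients of sum_i f_i, about [3.0, 4.6, 3.4]
f_star = total.min()       # optimal value of sum_i f_i over the simplex

results = {}
for name, step in [("entropic", entropic_step), ("euclidean", euclidean_step)]:
    results[name] = distributed_smd(costs, [W0, W1], step)
    gaps = results[name] @ total - f_star  # optimality gap of each agent's average
    print(name, gaps.round(3))
```

Note that no single agent's own minimizer coincides with the network optimum here (agent 0 alone would prefer the third coordinate), so a small gap for every agent reflects cooperation through the mixing step rather than purely local descent.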



Acknowledgments

This research was partially supported by the NSFC under Grants 11501070, 61473326 and 11401064, by the Chinese Scholarship Council under Grant 201508500038, by the Natural Science Foundation Project of Chongqing under Grants cstc2015jcyjA0382, cstc2013jcyjA00029 and cstc2013jcyjA00021, by the Chongqing Municipal Education Commission under Grants KJ1500301 and KJ1500302, and by the Chongqing Normal University Research Foundation under Grant 15XLB005.


About this article

Cite this article

Li, J., Li, G., Wu, Z. et al. Stochastic mirror descent method for distributed multi-agent optimization. Optim Lett 12, 1179–1197 (2018). https://doi.org/10.1007/s11590-016-1071-z


DOI: https://doi.org/10.1007/s11590-016-1071-z