Abstract

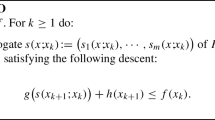

We consider a \(\beta \)-smooth stochastic convex optimization problem (the objective satisfies the generalized Hölder condition with parameter \(\beta > 2\)) with a zero-order one-point oracle. The best known result was obtained by Akhavan et al. (Exploiting higher order smoothness in derivative-free optimization and continuous bandits, 2020):

in the \(\gamma \)-strongly convex case, where \(n\) is the dimension. In this paper we improve this bound:
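
To make the "zero-order one-point oracle" concrete, here is a minimal Python sketch, assuming a kernel-smoothed gradient estimator in the style of Polyak and Tsybakov (1990) and Akhavan et al. (2020); it is not the method of this paper. Each call queries the noisy objective once, at a point perturbed along a random unit direction ζ by a random amount h·r, and rescales that single value by a kernel K(r). The kernel's moments are chosen so that E[r K(r)] = 1 recovers the gradient while E[r^j K(r)] = 0 cancels the Taylor terms of orders 2 through ℓ, the idea being that higher smoothness β lowers the bias. The helper names `kernel` and `one_point_grad`, the radius `h`, the noise level and the toy objective are hypothetical, and only β ≤ 5 is handled.

```python
import numpy as np


def kernel(r, beta):
    """Hypothetical helper: odd polynomial kernel K on [-1, 1].

    For r ~ Uniform[-1, 1] it satisfies E[r K(r)] = 1 and E[r^j K(r)] = 0
    for j = 0 and 2 <= j <= ell, where ell is the largest integer strictly
    smaller than beta. This sketch only covers ell <= 4 (beta <= 5).
    """
    ell = int(np.ceil(beta)) - 1
    if ell <= 2:
        return 3.0 * r
    if ell <= 4:
        return 15.0 * r / 4.0 * (5.0 - 7.0 * r**2)
    raise ValueError("this sketch only covers beta <= 5")


def one_point_grad(f_noisy, x, h, beta, rng):
    """Hypothetical helper: gradient estimate from ONE noisy value of f.

    g = (n / h) * y * K(r) * zeta, where y = f(x + h r zeta) + noise,
    zeta is uniform on the unit sphere and r is uniform on [-1, 1].
    """
    n = x.size
    zeta = rng.standard_normal(n)
    zeta /= np.linalg.norm(zeta)        # uniform direction on the unit sphere
    r = rng.uniform(-1.0, 1.0)          # random signed step length in [-1, 1]
    y = f_noisy(x + h * r * zeta)       # the single zero-order oracle call
    return (n / h) * y * kernel(r, beta) * zeta


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    sigma = 0.01                                   # assumed noise level
    f = lambda z: np.sum(z) + 0.5 * z @ z          # toy objective; gradient at 0 is all ones
    f_noisy = lambda z: f(z) + sigma * rng.standard_normal()
    x = np.zeros(3)
    estimates = [one_point_grad(f_noisy, x, 0.1, 3.5, rng) for _ in range(40000)]
    print(np.mean(estimates, axis=0))              # should be close to [1, 1, 1]
```

Averaging 40 000 single-call estimates is only a sanity check: for this toy objective the estimator is unbiased, but any one estimate is very noisy, which is the difficulty a one-point method has to control through its step sizes and the choice of h.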

References

Akhavan, A., Pontil, M., Tsybakov, A.B.: Exploiting higher order smoothness in derivative-free optimization and continuous bandits. arXiv preprint arXiv:2006.07862 (2020)

Akhavan, A., Pontil, M., Tsybakov, A.B.: Distributed zero-order optimization under adversarial noise. arXiv preprint arXiv:2102.01121 (2021)

Bach, F., Perchet, V.: Highly-smooth zero-th order online optimization. In: Conference on Learning Theory, pp. 257–283 (2016)

Bubeck, S., Lee, Y.T., Eldan, R.: Kernel-based methods for bandit convex optimization. In: Proceedings of the 49th Annual ACM SIGACT Symposium on Theory of Computing, pp. 72–85 (2017)

Conn, A.R., Scheinberg, K., Vicente, L.N.: Introduction to Derivative-Free Optimization. Society for Industrial and Applied Mathematics, Philadelphia (2009)

Gasnikov, A., Dvurechensky, P., Kamzolov, D.: Gradient and gradient-free methods for stochastic convex optimization with inexact oracle. arXiv preprint arXiv:1502.06259 (2015)

Gasnikov, A., Dvurechensky, P., Nesterov, Y.: Stochastic gradient methods with inexact oracle. arXiv preprint arXiv:1411.4218 (2014)

Gasnikov, A.V., Krymova, E.A., Lagunovskaya, A.A., Usmanova, I.N., Fedorenko, F.A.: Stochastic online optimization. Single-point and multi-point non-linear multi-armed bandits. Convex and strongly-convex case. Autom. Remote Control 78(2), 224–234 (2017)

Larson, J., Menickelly, M., Wild, S.M.: Derivative-free optimization methods. Acta Numer. 28, 287–404 (2019). https://doi.org/10.1017/S0962492919000060

Nemirovski, A., Yudin, D.: Problem Complexity and Method Efficiency in Optimization. John Wiley & Sons, New York (1983)

Novitskii, V.: Zeroth-order algorithms for smooth saddle-point problems (2020). https://cutt.ly/jmKAtcg

Polyak, B.T., Tsybakov, A.B.: Optimal order of accuracy of search algorithms in stochastic optimization. Probl. Peredachi Inf. 26(2), 45–53 (1990)

Sadiev, A., Beznosikov, A., Dvurechensky, P., Gasnikov, A.: Zeroth-order algorithms for smooth saddle-point problems. arXiv preprint arXiv:2009.09908 (2020)

Spall, J.C.: Introduction to Stochastic Search and Optimization, 1st edn. John Wiley & Sons Inc., New York (2003)

Acknowledgements

This work was supported by a grant for research centers in the field of artificial intelligence, provided by the Analytical Center for the Government of the Russian Federation in accordance with the subsidy agreement (agreement identifier 000000D730321P5Q0002) and the agreement with the Ivannikov Institute for System Programming of the RAS dated November 2, 2021, No. 70-2021-00142.

About this article

Cite this article

Novitskii, V., Gasnikov, A. Improved exploitation of higher order smoothness in derivative-free optimization. Optim Lett 16, 2059–2071 (2022). https://doi.org/10.1007/s11590-022-01863-z
