Abstract

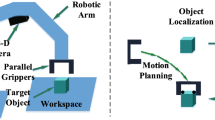

Perception and manipulation tasks for robotic manipulators involving highly cluttered objects are increasingly in demand as a way to make problem solving in modern industrial environments more efficient. However, most available methods for such cluttered tasks perform poorly, mainly because they cannot adapt to changes in the environment or in the handled objects. Here, we propose a new, near real-time approach to suction-based grasp point estimation in a highly cluttered environment that employs an affordance-based approach. Compared to the state-of-the-art, our proposed method offers two distinctive contributions. First, we use a modified deep neural network backbone as the input stage of the semantic segmentation to classify the pixels of the input red, green, blue and depth (RGBD) image; the result is used to produce an affordance map, a pixel-wise probability map representing the probability of a successful grasping action in each pixel region. Second, we incorporate a high-speed semantic segmentation into the system, which lowers the computational time of our solution. This approach needs no prior knowledge or models of the objects: unlike most current approaches, it removes the pose estimation and object recognition steps entirely and instead assumes that the robot grasps first and recognizes later, which makes the method object-agnostic. The system was designed for household objects, but it can be extended to other kinds of objects provided that a suitable dataset is used to train the models. Experimental results show the benefit of our approach, which achieves a precision of 88.83%, compared to the 83.4% precision of the current state-of-the-art.
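To make the notion of an affordance map concrete, the following minimal sketch shows one plausible way to turn such a map into a suction target. It is an illustration under stated assumptions, not the authors' implementation: the abstract does not say how the grasp pixel is selected, so the argmax rule, the `threshold` parameter, the pinhole back-projection, the camera intrinsics (`fx`, `fy`, `cx`, `cy`) and all function names are hypothetical. The `precision` helper uses the standard definition, true positives divided by all predicted positives.

```python
from typing import List, Optional, Tuple


def best_grasp_pixel(
    affordance: List[List[float]], threshold: float = 0.5
) -> Optional[Tuple[int, int, float]]:
    """Return (row, col, score) of the highest-scoring pixel.

    Returns None when the map is empty or no pixel reaches `threshold`
    (the threshold is an assumption, not a value from the paper).
    """
    best: Optional[Tuple[int, int, float]] = None
    for r, row in enumerate(affordance):
        for c, score in enumerate(row):
            if best is None or score > best[2]:
                best = (r, c, score)
    if best is None or best[2] < threshold:
        return None
    return best


def pixel_to_camera_point(
    row: int, col: int, depth_m: float,
    fx: float, fy: float, cx: float, cy: float,
) -> Tuple[float, float, float]:
    """Back-project a pixel with known depth into the camera frame
    using the pinhole camera model (hypothetical intrinsics)."""
    x = (col - cx) * depth_m / fx
    y = (row - cy) * depth_m / fy
    return (x, y, depth_m)


def precision(true_positives: int, false_positives: int) -> float:
    """Standard precision: TP / (TP + FP); 0.0 when nothing was predicted."""
    total = true_positives + false_positives
    return true_positives / total if total else 0.0


# Toy 3x3 affordance map and matching depth image (metres), made up for illustration.
affordance_map = [
    [0.10, 0.20, 0.05],
    [0.30, 0.92, 0.40],
    [0.15, 0.25, 0.10],
]
depth_image = [
    [0.80, 0.80, 0.80],
    [0.80, 0.75, 0.80],
    [0.80, 0.80, 0.80],
]

pick = best_grasp_pixel(affordance_map)
if pick is not None:
    r, c, score = pick
    target = pixel_to_camera_point(r, c, depth_image[r][c],
                                   fx=500.0, fy=500.0, cx=1.0, cy=1.0)
    print(f"suction target at pixel ({r}, {c}), score {score}, point {target}")
```

Because the selection works directly on per-pixel scores, nothing in this sketch needs an object model or a pose estimate, which is the property the abstract refers to as object-agnostic.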

Author information

Additional information

Colored figures are available in the online version at https://link.springer.com/journal/11633

Tri Wahyu Utomo received the B. Eng. degree in electrical engineering from University of Gadjah Mada, Indonesia in 2019. He is now working as an artificial intelligence (AI) engineer in a computer vision startup company in Jakarta, Indonesia.

His research interests include motion planning for autonomous mobile robots, deep learning and computer vision.

Adha Imam Cahyadi received the B. Eng. degree in electrical engineering from University of Gadjah Mada, Indonesia in 2002. He then worked as an engineer in industry for a year, at companies such as Matsushita Kotobuki Electronics and Halliburton Energy Services. He received the M. Eng. degree in control engineering from King Mongkut’s Institute of Technology Ladkrabang (KMITL), Thailand in 2005, and the Ph. D. degree in control engineering from Tokai University, Japan in 2008. Currently, he is a lecturer at the Department of Electrical Engineering and Information Technology, University of Gadjah Mada and a visiting lecturer at the Centre for Artificial Intelligence and Robotics (CAIRO), University of Teknologi Malaysia, Malaysia.

His research interests include teleoperation systems and robust control for delayed systems, especially process plants.

Igi Ardiyanto received the B. Eng. degree in electrical engineering from University of Gadjah Mada, Indonesia in 2009, and the M. Eng. and Ph. D. degrees in computer science and engineering from Toyohashi University of Technology (TUT), Japan in 2012 and 2015, respectively. He joined the TUT-NEDO (New Energy and Industrial Technology Development Organization, Japan) research collaboration on service robots in 2011. He is now an assistant professor at University of Gadjah Mada, Indonesia. He has received several awards, including Finalist of the Best Service Robotics Paper Award at the 2013 IEEE International Conference on Robotics and Automation (ICRA 2013) and the Panasonic Award for the 2012 RT-Middleware Contest.

His research interests include planning and control systems for mobile robotics, deep learning, and computer vision.

About this article

Cite this article

Utomo, T.W., Cahyadi, A.I. & Ardiyanto, I. Suction-based Grasp Point Estimation in Cluttered Environment for Robotic Manipulator Using Deep Learning-based Affordance Map. Int. J. Autom. Comput. 18, 277–287 (2021). https://doi.org/10.1007/s11633-020-1260-1

DOI: https://doi.org/10.1007/s11633-020-1260-1