Abstract

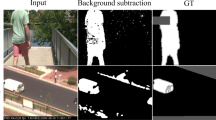

Detection of visual change or anomaly in an image sequence is a common computer vision problem that can be formulated as background/foreground segregation. To achieve this, a background model is generated and the target (foreground) is detected via background subtraction. We propose a framework for visual change detection with three main modules: a background modeler, a convolutional neural network, and a feedback scheme for background model updating. By analyzing a short image sequence, the background modeler generates a single image that represents the background of the video. This background image and the individual frames of the image sequence are input to the convolutional neural network for background/foreground segregation. We design an encoder-decoder convolutional neural network that produces a binary segmentation map indicating the regions of visual change in the current image frame. For long-term analysis, the background model must be maintained, so we propose a feedback scheme that dynamically updates the colors of the background frame. Results obtained on the benchmark dataset show that our proposed framework outperforms many high-ranking background subtraction algorithms by 9.9% or more.
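The data flow among the three modules can be illustrated with a minimal sketch. This is not the method of the paper: the per-pixel temporal median stands in for the background modeler, a thresholded absolute difference stands in for the encoder-decoder network, and a running-average blend stands in for the feedback scheme. All function names, the `threshold` and `rate` parameters, and the grayscale nested-list frame format are assumptions made for illustration only.

```python
from statistics import median

# Assumed frame format: a grayscale image as rows of pixel intensities.
Frame = list[list[float]]


def model_background(frames: list[Frame]) -> Frame:
    """Stand-in background modeler: per-pixel temporal median of a short sequence."""
    height, width = len(frames[0]), len(frames[0][0])
    return [[median(f[y][x] for f in frames) for x in range(width)]
            for y in range(height)]


def segment(background: Frame, frame: Frame, threshold: float = 30.0) -> list[list[int]]:
    """Stand-in for the CNN: thresholded absolute difference.

    Returns a binary map where 1 marks visual change (foreground).
    """
    return [[1 if abs(p - b) > threshold else 0 for p, b in zip(frow, brow)]
            for frow, brow in zip(frame, background)]


def update_background(background: Frame, frame: Frame,
                      mask: list[list[int]], rate: float = 0.05) -> Frame:
    """Feedback step (assumed rule): blend the current frame into the
    background only at pixels the mask labels as unchanged."""
    return [[b if m else (1 - rate) * b + rate * p
             for b, p, m in zip(brow, frow, mrow)]
            for brow, frow, mrow in zip(background, frame, mask)]


def detect_changes(frames: list[Frame], init_length: int = 5) -> list[list[list[int]]]:
    """Build the background from the first frames, then segment and update per frame."""
    background = model_background(frames[:init_length])
    masks = []
    for frame in frames[init_length:]:
        mask = segment(background, frame)
        background = update_background(background, frame, mask)
        masks.append(mask)
    return masks


if __name__ == "__main__":
    # Five static frames, then one frame with a bright object at the top-right pixel.
    sequence = [[[100.0, 100.0], [100.0, 100.0]] for _ in range(5)]
    sequence.append([[100.0, 200.0], [102.0, 100.0]])
    print(detect_changes(sequence))
```

Restricting the update to pixels labeled as background keeps a detected object from being absorbed into the background image, while slow changes in the rest of the scene are still tracked.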

Acknowledgements

The work described in this paper was supported by a grant from the Research Grants Council of the Hong Kong Special Administrative Region, China (Project No. CityU 11202319). The authors would like to thank the reviewers for their comments and suggestions. We gratefully acknowledge Mr. W. L. Ip and Ms. Y. Zhang for the experiments with BSUV-Net and BSUV-Net 2.0.

About this article

Cite this article

Chan, KL., Wang, J. & Yu, H. A combination of background modeler and encoder-decoder CNN for background/foreground segregation in image sequence. SIViP 17, 1297–1304 (2023). https://doi.org/10.1007/s11760-022-02337-6

DOI: https://doi.org/10.1007/s11760-022-02337-6