Abstract

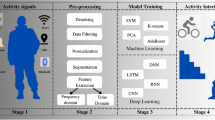

The analysis of affective or communicational states in human-human and human-computer interaction (HCI) using automatic machine analysis and learning approaches often suffers either from overly simple approaches or from attempts to take very ambitious steps all at once. In this paper, we propose a generic framework that overcomes many difficulties associated with real-world user behavior analysis (i.e. uncertainty about the ground truth of the current state, subject independence, dynamic real-time analysis of multimodal information, and the processing of incomplete or erroneous inputs, e.g. after sensor failure or lack of input). We motivate the approach, which is based on the analysis and spotting of behavioral cues regarded as basic building blocks of user-state-specific behavior, with the help of related work and the analysis of a large HCI corpus. For this corpus, paralinguistic and nonverbal behavior could be significantly associated with user states. We present some of our previous work on the detection and classification of behavioral cues and introduce a layered architecture based on hidden Markov models. We believe that this step-by-step approach towards the understanding of human behavior, underlined by encouraging preliminary results, outlines a principled approach towards the development and evaluation of computational mechanisms for the analysis of multimodal social signals.

Notes

Competence center for Perception and Interactive Technologies (PIT) at Ulm University.

As mentioned in [89]: “As psychologists use the term, it includes the euphoria of winning an Olympic gold medal, a brief startle at an unexpected noise, unrelenting profound grief, the fleeting pleasant sensations from a warm breeze, cardiovascular changes in response to viewing a film, the stalking and murder of an innocent victim, lifelong love of an offspring, feeling chipper for no known reason, and interest in a news bulletin.”

Some may argue, though, that hot anger is a common feeling one might have towards the ineptitude of the operating system.

Referred to by the Japanese term “moriagari” [15].

Which of course may be error prone.

Unfortunately, the camera setup only allows the annotation of the gaze of user U1, as no frontal view of U2 is available.

Standard measurements such as Cohen’s κ are designed for atomic entities or pre-segmented samples of the data, which are not available [19].
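To illustrate why such measures presuppose pre-segmented data, the following minimal sketch computes Cohen's κ for two annotators. It assumes that both annotators labelled the same fixed list of items, which is exactly the condition that continuous annotations do not meet. The function name `cohens_kappa` and the example labels are hypothetical and not taken from the corpus.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(labels_a: Sequence[Hashable], labels_b: Sequence[Hashable]) -> float:
    """Cohen's kappa for two annotators who labelled the same items."""
    if len(labels_a) != len(labels_b) or len(labels_a) == 0:
        raise ValueError("both annotators must label the same non-empty item list")
    n = len(labels_a)
    # Observed agreement: fraction of items with identical labels.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of the annotators' marginal label frequencies.
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical labels for four pre-segmented samples.
annotator_1 = ["laugh", "speech", "speech", "laugh"]
annotator_2 = ["laugh", "speech", "laugh", "laugh"]
kappa = cohens_kappa(annotator_1, annotator_2)
```

The item-by-item pairing in `zip` is the step that has no direct counterpart when annotators are free to place segment boundaries themselves.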

An offer or suggestion of the system is perceived positively by the subject.

All negative subject states combined, i.e. uninterested, embarrassed, impatient, stressed, negative accepting, disagreement.

A laugh bout contains multiple calls (e.g. the typical repetition of ‘ha’).

Excursion is defined as the difference between the maximum and minimum \(f_{0}\) within a call, and the change is defined as the absolute value of the difference between the \(f_{0}\) at the call onset and the one at the call offset.
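The two measures can be sketched as follows. This is only an illustration of the definitions above, assuming that a call is given as a non-empty sequence of fundamental frequency values in Hz containing voiced frames only. The function names and the example contour are hypothetical.

```python
from typing import Sequence


def f0_excursion(f0: Sequence[float]) -> float:
    """Difference between the maximum and minimum f0 within a call."""
    return max(f0) - min(f0)


def f0_change(f0: Sequence[float]) -> float:
    """Absolute difference between f0 at call onset and at call offset."""
    return abs(f0[0] - f0[-1])


# Hypothetical f0 contour of a single call (Hz), ordered from onset to offset.
contour = [220.0, 310.0, 280.0, 190.0]
print(f0_excursion(contour))  # 120.0
print(f0_change(contour))     # 30.0
```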

\(F_{1} = 2 \cdot\frac{P \cdot R}{P+R}\), where P denotes the precision (ratio of hits to all hits and false alarms) and R the recall (ratio of hits to all laughs in the set) of the approach.
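As a small worked example of this measure, the sketch below derives precision, recall and \(F_{1}\) from counts of hits, false alarms and misses. It assumes that "all laughs in the set" equals hits plus misses. The function name and the counts are hypothetical and do not reproduce any result of the paper.

```python
from typing import Tuple


def precision_recall_f1(hits: int, false_alarms: int, misses: int) -> Tuple[float, float, float]:
    """Return (precision, recall, F1) for a detection task."""
    detected = hits + false_alarms  # everything the detector reported
    actual = hits + misses          # all laughs present in the set
    precision = hits / detected if detected else 0.0
    recall = hits / actual if actual else 0.0
    if precision + recall == 0.0:
        return precision, recall, 0.0
    f1 = 2.0 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical counts: 8 hits, 2 false alarms, 8 missed laughs.
p, r, f1 = precision_recall_f1(hits=8, false_alarms=2, misses=8)
```

With these counts, precision is 0.8 and recall is 0.5, so \(F_{1} = 2 \cdot 0.4 / 1.3 \approx 0.615\), which lies closer to the lower of the two values, as expected for a harmonic mean.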

Usually relevant samples are those that influence the training the most (i.e. for a support vector machine those samples closest to the separating hyperplane are most relevant for the adaptation of the hyperplane; samples that are far from the hyperplane have hardly any influence and won’t help during training).
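The selection criterion described here can be sketched for the linear case as follows. This is a simplified illustration, not the procedure used in this work: it assumes a linear decision function \(w \cdot x + b\) with known parameters, and the function name, weights and data points are hypothetical.

```python
import numpy as np


def most_relevant_samples(X: np.ndarray, w: np.ndarray, b: float, k: int) -> np.ndarray:
    """Indices of the k samples closest to the hyperplane w.x + b = 0."""
    # Geometric distance of every sample to the separating hyperplane.
    distances = np.abs(X @ w + b) / np.linalg.norm(w)
    # Smallest distances first: these samples affect the hyperplane the most.
    return np.argsort(distances)[:k]


# Hypothetical two-dimensional samples and hyperplane parameters.
X = np.array([[0.5, 0.0], [3.0, 3.0], [-0.2, 0.1], [-4.0, -1.0]])
w = np.array([1.0, 1.0])
b = 0.0
idx = most_relevant_samples(X, w, b, k=2)  # samples 2 and 0 lie closest
```

Samples 1 and 3 lie far from the hyperplane and would therefore be ranked last for annotation or retraining.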

References

Aloimonos Y, Guerra-Filho G, Ogale A (2010) The language of action: A new tool for human-centric interfaces. In: Aghajan H, Augusto J, Delgado R (eds) Human centric interfaces for ambient intelligence. Elsevier, Amsterdam, pp 95–131

Argyle M (1988) Bodily Communication, 2nd edn. Methuen, London

Bachorowski J-A, Smoski MJ, Owren MJ (2001) The acoustic features of human laughter. J Acoust Soc Am 110(3):1581–1597

Bänziger T, Scherer KR (2007) Using actor portrayals to systematically study multimodal emotion expression: the GEMEP corpus. In: Proceedings of the 2nd international conference on affective computing and intelligent interaction (ACII’07). Springer, Berlin, pp 476–487

Batliner A, Steidl S, Eyben F, Schuller B (2010) On laughter and speech laugh, based on observations of child-robot interaction. In: The phonetics of laughing, trends in linguistics. de Gruyter, Berlin, to appear

Batliner A, Steidl S, Schuller B, Seppi D, Vogt T, Wagner J, Devillers L, Vidrascu L, Aharonson V, Kessous L, Amir N (2011) Whodunnit—searching for the most important feature types signalling emotion-related user states in speech. Comput Speech Lang 25(1):4–28

Beck C, Ognibeni T, Neumann H (2008) Object segmentation from motion discontinuities and temporal occlusions—a biologically inspired model. PLoS ONE 3(11):e3807

Bousmalis K, Mehu M, Pantic M (2009) Spotting agreement and disagreement: A survey of nonverbal audiovisual cues and tools. In: Proceedings of the 3rd international conference on affective computing and intelligent interaction (ACII’09), vol 2. IEEE Press, New York, pp 1–9

Breiman L (1996) Bagging predictors. Mach Learn 24(2):123–140

Brennan SE (1996) Lexical entrainment in spontaneous dialog. In: Proceedings of ISSD, pp 41–44

Burkhardt F, Paeschke A, Rolfes M, Sendlmeier W, Weiss B (2005) A database of German emotional speech. In: Proceedings of interspeech 2005, pp 1517–1520. ISCA

Campbell N, Kashioka H, Ohara R (2005) No laughing matter. In: Proceedings of interspeech 2005, pp 465–468. ISCA

Campbell WN (2004) Listening between the lines: a study of paralinguistic information carried by tone-of-voice. In: Proceedings of international symposium on tonal aspects of languages (TAL’04), Beijing, China, pp 13–16. ISCA

Campbell WN (2007) On the use of non verbal speech sounds in human communication. In: Lecture notes in computer science, vol 4775. Springer, Berlin, pp 117–128

Campbell WN (2008) Automatic detection of participant status and topic changes in natural spoken dialogues. In: Autumn meeting of the acoustical society of Japan 2008 (ASJ’08)

Campbell WN (2008) Tools and resources for visualising conversational-speech interaction. In: Proceedings of the sixth international language resources and evaluation (LREC’08), Marrakech, Morocco, pp 231–234. ELRA

Campbell WN, Scherer S (2010) Comparing measures of synchrony and alignment in dialogue speech timing with respect to turn-taking activity. In: Proceedings of interspeech 2010, pp 2546–2549. ISCA

Caridakis G, Karpouzis K, Wallace M, Kessous L, Amir N (2010) Multimodal user’s affective state analysis in naturalistic interaction. J Multimodal User Interfaces 3:49–66

Cohen J (1960) A coefficient of agreement for nominal scales. Educ Psychol Meas 20(1):37–46

Cowie R, Cornelius RR (2003) Describing the emotional states that are expressed in speech. Speech Commun 40(1–2):5–32

Cowie R, Douglas-Cowie E, Tsapatsoulis N, Votsis G, Kollias S, Fellenz W, Taylor JG (2001) Emotion recognition in human-computer interaction. IEEE Signal Process Mag 18(1):32–80

Cuperman R, Ickes W (2009) Big five predictors of behavior and perceptions in initial dyadic interactions: personality similarity helps extraverts and introverts but hurts disagreeables. J Pers Soc Psychol 97(4):667–684

Darwin C (1978) The expression of emotion in man and animals, 3rd edn. Harper Collins, London

Devillers L, Vidrascu L, Lamel L (2005) Challenges in real-life emotion annotation and machine learning based detection. Neural Netw 18:407–422

Dietterich TG (2000) Ensemble methods in machine learning. In: Kittler J, Roli F (eds) Multiple classifier systems, proceedings of first international workshop, MCS 2000, Cagliari, Italy, June 21–23, 2000. Lecture notes in computer science, vol 1857. Springer Berlin, pp 1–15

D’Mello SK, Graesser AC, Schuller B, Martin J-C (eds) (2011) Proceedings of affective computing and intelligent interaction—fourth international conference, ACII 2011, Memphis, TN, USA, October 9–12, 2011, Part II. Lecture notes in computer science, vol 6975. Springer, Berlin

Douglas-Cowie E, Cowie R, Sneddon I, Cox C, Lowry O, McRorie M, Martin J-C, Devillers L, Abrilian S, Batliner A, Amir N, Karpouzis K (2007) The HUMAINE database: addressing the collection and annotation of naturalistic and induced emotional data. In: Proceedings of the 2nd international conference on affective computing and intelligent interaction (ACII’07). Springer, Berlin, pp 488–500

Douxchamps D, Campbell WN (2007) Robust real time face tracking for the analysis of human behaviour. In: Lecture notes in computer science, vol 4892. Springer, Berlin, pp 1–10

Edlund J, Heldner M, Hirschberg J (2009) Pause and gap length in face-to-face interaction. In: Proceedings of interspeech 2009, pp 2779–2782

Egger S, Schatz R, Scherer S (2010) It takes two to tango—assessing the impact of delay on conversational interactivity on perceived speech quality. In: Proceedings of interspeech 2010, pp 1321–1324. ISCA

Ekman P (1993) Facial expression and emotion. Am Psychol 48:384–392

Ekman P, Friesen W (1978) Facial action coding system: a technique for the measurement of facial movement. Consulting Psychologists Press, Palo Alto

Engel D, Spinello L, Triebel R, Siegwart R, Bülthoff H, Curio C (2009) Medial features for superpixel segmentation. In: Proceedings of the eleventh IAPR conference on machine vision applications (MVA 2009), pp 248–252

Garrod S, Pickering MJ (2004) Why is conversation so easy? Trends Cogn Sci 8(1):8–11

Glodek M, Bigalke L, Schels M, Schwenker F (2011) Incorporating uncertainty in a layered hmm architecture for human activity recognition. In: Proceedings of the 2011 joint ACM workshop on Human gesture and behavior understanding, J-HGBU’11. ACM, New York, pp 33–34

Glodek M, Schwenker F, Palm G (2012) Detecting actions by integrating sequential symbolic and sub-symbolic information in human activity recognition. In: International conference in stochastic modeling techniques and data analysis, to appear

Glodek M, Tschechne S, Layher G, Schels M, Brosch T, Scherer S, Kächele M, Schmidt M, Neumann H, Palm G, Schwenker F (2011) Multiple classifier systems for the classification of audio-visual emotional states. In: D’Mello S, Graesser A, Schuller B, Martin J-C (eds) Affective computing and intelligent interaction. Lecture notes in computer science, vol 6975. Springer, Berlin, pp 359–368

Gnjatovic M, Rösner D (2008) On the role of the Nimitek corpus in developing an emotion adaptive spoken dialogue system. In: Calzolari N, Choukri K, Maegaard B, Mariani J, Odjik J, Piperidis S, Tapias D (eds) Proceedings of the sixth international language resources and evaluation (LREC’08), Marrakech, Morocco. ELRA

Gobl C, Bennett E, Ni Chasaide A (2002) Expressive synthesis: how crucial is voice quality? In: IEEE workshop on speech synthesis, Sept 2002. IEEE Press, New York, pp 91–94

Grichkovtsova I, Lacheret A, Morel M (2007) The role of intonation and voice quality in the affective speech perception. In: Proceedings of interspeech 2007, pp 2245–2248. ISCA

Haykin SS (1999) Neural networks: a comprehensive foundation. Prentice Hall, New York

Hermansky H (1990) Perceptual linear predictive (PLP) analysis of speech. J Acoust Soc Am 87(4):1738–1752

Hermansky H (1997) The modulation spectrum in automatic recognition of speech. In: Proceedings of IEEE workshop on automatic speech recognition and understanding (ASRU’97). IEEE Press, New York, pp 140–147

Jaakkola TS, Haussler D (1999) Exploiting generative models in discriminative classifiers. In: Advances in neural information processing systems, pp 487–493

Jaeger H (2002) Tutorial on training recurrent neural networks, covering BPPT, RTRL, EKF and the echo state network approach. Technical report 159, Fraunhofer-Gesellschaft, St. Augustin, Germany

Jokinen K, Scherer S (2012) Embodied communicative activity in cooperative conversational interactions—studies in visual interaction management. Special Issue of Acta Polytech Hung: CogInfoCom 2011 9(1):18–40

Kapadia S (1998) Discriminative training of hidden Markov models. PhD thesis, University of Cambridge

Keltner D, Ekman P, Gonzaga GC, Beer J (2003) Facial expression of emotion. In: Handbook of affective sciences. Affective science. Oxford University Press, London, pp 415–432. Chap 22

Kendon A (ed) (1981) Nonverbal communication, interaction, and gesture. Selections from semiotica series, vol 41. de Gruyter Berlin

Kennedy L, Ellis D (2004) Laughter detection in meetings. In: Proceedings of IEEE international conference on acoustics, speech and signal processing (ICASSP 2004), meeting recognition workshop. IEEE Press, New York, pp 118–121

Kim J, André E (2008) Emotion recognition based on physiological changes in listening music. IEEE Trans Pattern Anal Mach Intell 30(12):2067–2083

Kipp M (2001) Anvil—a generic annotation tool for multimodal dialogue. In: Proceedings of the European conference on speech communication and technology (Eurospeech), Aalborg, pp 1367–1370. ISCA

Knox M, Mirghafori N (2007) Automatic laughter detection using neural networks. In: Proceedings of interspeech 2007, pp 2973–2976. ISCA

Koller D, Friedman N (2009) Probabilistic graphical models: principles and techniques. MIT Press, Cambridge

Krauss RM, Chen Y, Chawla P (1996) Nonverbal behavior and nonverbal communication: what do conversational hand gestures tell us? In: Advances in experimental social psychology. Academic Press, San Diego, pp 389–450

Kuncheva LI (2001) Using measures of similarity and inclusion for multiple classifier fusion by decision templates. Fuzzy Sets Syst 122(3):401–407

Kuncheva LI (2004) Combining pattern classifiers: methods and algorithms. Wiley, New York

Kuncheva LI, Bezdek JC, Duin RPW (2001) Decision templates for multiple classifier fusion: an experimental comparison. Pattern Recognit 34(2):299–314

Kuncheva LI, Whitaker CJ (2003) Measures of diversity in classifier ensembles and their relationship with the ensemble accuracy. Mach Learn 51(2):181–207

Lakin JL, Chartrand TL (2003) Using nonconscious behavioral mimicry to create affiliation and rapport. J Psychol Sci 14(4):334–339

Laskowski K (2008) Modeling vocal interaction for text-independent detection of involvement hotspots in multi-party meetings. In: Proceedings of the 2nd IEEE/ISCA/ACL workshop on spoken language technology (SLT’08), pp 81–84

Laver J (1979) The description of voice quality in general phonetic theory. Work Prog - Univ Edinb, Dept Linguist 12:30–52

Laver J (1980) The phonetic description of voice quality. Cambridge University Press, Cambridge

Layher G, Liebau H, Niese R, Al-Hamadi A, Michaelis B, Neumann H (2011) Robust stereoscopic head pose estimation in human-computer interaction and a unified evaluation framework. In: Maino G, Foresti GL (eds) Proceedings of 16th international conference on image analysis and processing (ICIAP’11). LNCS, vol 6978. Springer, Berlin, pp 227–236

Lugger M, Yang B, Wokurek W (2006) Robust estimation of voice quality parameters under real world disturbances. In: Proceedings of IEEE international conference on acoustics, speech, and signal processing (ICASSP 2006), vol 1. IEEE Press, New York, pp 1097–1100

Mackenzie Beck J (2005) Perceptual analysis of voice quality: the place of vocal profile analysis. In: Laver J, Hardcastle W, Mackenzie Beck J (eds) A figure of speech: A Festschrift for John Laver, pp 285–322. Chap 12

Maganti HK, Scherer S, Palm G (2007) A novel feature for emotion recognition in voice based applications. In: Proceedings of the 2nd international conference on affective computing and intelligent interaction (ACII’07). Springer, Berlin, pp 710–711

Mishra AK, Aloimonos Y (2009) Active segmentation. Int J Humanoid Robot 6(3):361–386

Monzo C, Alias F, Iriondo I, Gonzalvo X, Planet S (2007) Discriminating expressive speech styles by voice quality parameterization. In: Proceedings of 16th international congress of phonetic sciences (ICPhS’07), pp 2081–2084

Murphy-Chutorian E, Trivedi MM (2009) Head pose estimation in computer vision: a survey. IEEE Trans Pattern Anal Mach Intell 31(4):607–626

Mutch J, Lowe DG (2008) Object class recognition and localization using sparse features with limited receptive fields. Int J Comput Vis 80(1):45–57

Oertel C, De Looze C, Scherer S, Windmann A, Wagner P, Campbell N (2011) Towards the automatic detection of involvement in conversation. In: Esposito A, Vinciarelli A, Vicsi K, Pelachaud C, Nijholt A (eds) Analysis of verbal and nonverbal communication and enactment. The processing issues. Lecture notes in computer science, vol 6800. Springer, Berlin, pp 163–170. doi:10.1007/978-3-642-25775-9-16

Oertel C, Scherer S, Wagner P, Campbell N (2011) On the use of multimodal cues for the prediction of involvement in spontaneous conversation. In: Proceedings of interspeech 2011, pp 1541–1544. ISCA

Ogale A, Karapurkar A, Aloimonos Y (2007) View-invariant modeling and recognition of human actions using grammars. In: Dynamical vision, pp 115–126

Oliver N, Garg A, Horvitz E (2004) Layered representations for learning and inferring office activity from multiple sensory channels. Comput Vis Image Underst 96(2):163–180

Ortony A, Clore GL, Collins A (1988) The cognitive structure of emotion. Cambridge University Press, Cambridge

Oudeyer P-Y (2003) The production and recognition of emotions in speech: features and algorithms. Int J Hum-Comput Interact 59(1–2):157–183

Pantic M, Caridakis G, Andre E, Kim J, Karpouzis K, Kollias S (2011) Multimodal emotion recognition from low-level cues. In: Emotion-oriented systems, cognitive technologies. Springer, Berlin, pp 115–132

Pentland A (2007) Social signal processing [exploratory DSP]. IEEE Signal Process Mag 24(4):108–111

Pentland A (2008) Honest signals—how they shape our world. MIT Press, Cambridge

Petridis S, Pantic M (2009) Is this joke really funny? Judging the mirth by audiovisual laughter analysis. In: Proceedings of IEEE international conference on multimedia and expo (ICME’09). IEEE Press, New York, pp 1444–1447

Pickering MJ, Garrod S (2006) Alignment as the basis for successful communication. Res Lang Comput 4(2–3):203–228

Platt JC (1999) Probabilistic outputs for support vector machines and comparisons to regularized likelihood methods. In: Advances in large margin classifiers, pp 61–74

Provine R, Yong L (1991) Laughter: A stereotyped human vocalization. Ethology 89(2):115–124

Rabiner LR, Schafer RW (1978) Digital processing of speech signals. Prentice Hall signal processing series. Prentice Hall, New York

Rabiner LR (1989) A tutorial on hidden Markov models and selected applications in speech recognition. Proc IEEE 77(2):257–286

Reuderink B, Poel M, Truong K, Poppe R, Pantic M (2008) Decision-level fusion for audio-visual laughter detection. In: Popescu-Belis A, Stiefelhagen R (eds) Machine learning for multimodal interaction. Lecture notes in computer science, vol 5237. Springer, Berlin, pp 137–148

Russell JA (2003) Core affect and the psychological construction of emotion. Psychol Rev 110(1):145–172

Russell JA, Barrett LF (1999) Core affect, prototypical emotional episodes, and other things called emotion: dissecting the elephant. J Pers Soc Psychol 76(5):805–819

Schels M, Scherer S, Glodek M, Kestler HA, Palm G, Schwenker F (2011) On the discovery of events in EEG data utilizing information fusion. In: Computational statistics; special issue: Proceedings of Reisensburg 2010, pp 1–14

Schels M, Schwenker F (2010) A multiple classifier system approach for facial expressions in image sequences utilizing GMM supervectors. In: International conference on pattern recognition (ICPR), pp 4251–4254

Scherer KR, Johnstone T, Klasmeyer G (2003) Handbook of affective sciences—vocal expression of emotion. In: Affective science. Oxford University Press, London, pp 433–456. Chap 23

Scherer S, Glodek M, Schwenker F, Campbell N, Palm G (2012) Spotting laughter in naturalistic multiparty conversations: a comparison of automatic online and offline approaches using audiovisual data. ACM Trans Interact Intell Syst: Special Issue on Affective Interact Nat Environ 2(1):4:1–4:31

Scherer S, Kane J, Gobl C, Schwenker F (2012) Investigating fuzzy-input fuzzy-output support vector machines for robust voice quality classification. Comput Speech Lang. Under review

Scherer S, Schels M, Palm G (2011) How low level observations can help to reveal the user’s state in HCI. In: D’Mello S, Graesser A, Schuller B, Martin J-C (eds) Proceedings of the 4th international conference on affective computing and intelligent interaction (ACII’11), vol 2. Springer, Berlin, pp 81–90

Scherer S, Schwenker F, Campbell WN, Palm G (2009) Multimodal laughter detection in natural discourses. In: Ritter H, Sagerer G, Dillmann R, Buss M (eds) Proceedings of 3rd international workshop on human-centered robotic systems (HCRS’09). Cognitive systems monographs. Springer, Berlin, pp 111–121

Scherer S, Schwenker F, Palm G (2007) Classifier fusion for emotion recognition from speech. In: 3rd IET international conference on intelligent environments 2007 (IE’07), Sept 2007. IEEE Press, New York, pp 152–155

Scherer S, Strauss P-M (2008) A flexible wizard of oz environment for rapid prototyping. In: Proceedings of the sixth international language resources and evaluation (LREC’08), Marrakech, Morocco, pp 958–961. ELRA

Scherer S, Trentin E, Schwenker F, Palm G (2009) Approaching emotion in human computer interaction. In: International workshop on spoken dialogue systems (IWSDS’09), pp 156–168

Schmidt M, Schels M, Schwenker F (2010) A hidden Markov model based approach for facial expression recognition in image sequences. In: Artificial neural networks in pattern recognition (ANNPR). LNAI, vol 5998. Springer, Berlin, pp 149–160

Schölkopf B, Smola AJ, Williamson RC, Bartlett PL (2000) New support vector algorithms. Neural Comput 12(5):1207–1245

Schuller B, Valstar M, Eyben F, McKeown G, Cowie R, Pantic M (2011) The first international audio/visual emotion challenge and workshop (AVEC 2011). LNCS

Schwenker F, Dietrich C, Thiel C, Palm G (2006) Learning decision fusion mappings for pattern recognition. ICGST Int J Artif Intell Mach Learn (AIML) 6:17–21

Schwenker F, Sachs A, Palm G, Kestler H (2006) Orientation histograms for face recognition. In: Artificial neural networks in pattern recognition, pp 253–259

Schwenker F, Scherer S, Magdi Y, Palm G (2009) The GMM-SVM supervector approach for the recognition of the emotional status from speech. In: Alippi C (ed) 19th international conference on artificial neural networks 2009, Part I. LNCS, vol 5768. Springer, Berlin, pp 894–903

Schwenker F, Scherer S, Schmidt M, Schels M, Glodek M (2010) Multiple classifier systems for the recognition of human emotions. In: El Gayar N, Kittler J, Roli F (eds) 9th international workshop on multiple classifier systems (MCS 2010). Springer, Berlin, pp 315–324

Serre T, Poggio T (2010) A neuromorphic approach to computer vision. Commun ACM 53:54–61

Serre T, Wolf L, Poggio T (2005) Object recognition with features inspired by visual cortex. In: CVPR, pp 994–1000

Settles B (2009) Active learning literature survey. Computer sciences technical report 1648, University of Wisconsin-Madison

Shepard CA, Giles H, Le Poire BA (2001) Communication accommodation theory. Wiley, New York

Strauss P-M, Hoffmann H, Minker W, Neumann H, Palm G, Scherer S, Traue HC, Weidenbacher U (2008) The PIT corpus of German multi-party dialogues. In: Proceedings of the sixth international language resources and evaluation (LREC’08), Marrakech, Morocco, pp 2442–2445. ELRA

Strauss P-M, Hoffmann H, Neumann H, Minker W, Palm G, Scherer S, Schwenker F, Traue HC, Weidenbacher U (2006) Wizard-of-oz data collection for perception and interaction in multi-user environments. In: Proceedings of the fifth international language resources and evaluation (LREC’06), pp 2014–2017. ELRA

Strauss P-M, Hoffmann H, Scherer S (2007) Evaluation and user acceptance of a dialogue system using wizard-of-oz recordings. In: 3rd IET international conference on intelligent environments 2007 (IE’07). IEEE Press, New York, pp 521–524

Strauss P-M, Minker W (2010) Proactive spoken dialogue interaction in multi-party environments. Springer, Berlin

Strauss P-M, Scherer S, Layher G, Hoffmann H (2010) Evaluation of the PIT corpus or what a difference a face makes? In: Calzolari N, Choukri K, Maegaard B, Mariani J, Odjik J, Piperidis S, Rosner M, Tapias D (eds) Proceedings of the seventh international conference on language resources and evaluation (LREC’10), Valletta, Malta, pp 3470–3474. ELRA

Sutton C, McCallum A (2007) An introduction to conditional random fields for relational learning. In: Introduction to statistical relational learning, p 93

Suzuki N, Katagiri Y (2007) Prosodic alignment in human–computer interaction. Connect Sci 19(2):131–141

Tax DMJ, van Breukelen M, Robert W, Duin P, Kittler J (2000) Combining multiple classifiers by averaging or by multiplying. Pattern Recognit 33(9):1475–1485

Thiel C, Scherer S, Schwenker F (2007) Fuzzy-input fuzzy-output one-against-all support vector machines. In: 11th international conference on knowledge-based and intelligent information and engineering systems (KES’07). Lecture notes in artificial intelligence, vol 3. Springer, Berlin, pp 156–165

Truong KP, Van Leeuwen DA (2005) Automatic detection of laughter. In: Proceedings of interspeech 2005, pp 485–488. ISCA

Ullman S (1984) Visual routines. Cognition 18(1–3):97–159

Vaughan B (2011) Prosodic synchrony in co-operative task-based dialogues: A measure of agreement and disagreement. In: Proceedings of interspeech 2011, pp 1865–1868. ISCA

Vinciarelli A, Pantic M, Bourlard H (2009) Social signal processing: survey of an emerging domain. Image Vis Comput J 27(12):1743–1759

Vinciarelli A, Pantic M, Bourlard H, Pentland A (2008) Social signals, their function, and automatic analysis: a survey. In: Proceedings of the 10th international conference on multimodal interfaces, ICMI’08. ACM, New York, pp 61–68

Walter S, Scherer S, Schels M, Glodek M, Hrabal D, Schmidt M, Böck R, Limbrecht K, Traue HC, Schwenker F (2011) Multimodal emotion classification in naturalistic user behavior. In: Jacko JA (ed) Proceedings of 14th international conference on human-computer interaction (HCII’11), vol 3. Springer, Berlin, pp 603–611

Watzlawick P, Beavin JH, Jackson DD (2011) Menschliche Kommunikation: Formen, Störungen, Paradoxien, 12th edn. Verlag Hans Huber, Berlin

Weidenbacher U, Neumann H (2009) Extraction of surface-related features in a recurrent model of V1-V2 interactions. PLoS ONE 4(6):e5909

Wendt B, Scheich H (2002) The “Magdeburger Prosodie Korpus”—a spoken language corpus for fMRI-studies. In: Proceedings of speech prosody 2002, pp 699–701. ISCA

Wolpert DH (1992) Stacked generalization. Neural Netw 5:241–259

Wrede B, Shriberg E (2003) Spotting “hot spots” in meetings: human judgments and prosodic cues. In: Eurospeech 2003, pp 2805–2808

Yanushevskaya I, Gobl C, Ní Chasaide A (2005) Voice quality and \(f_{0}\) cues for affect expression. In: Proceedings of interspeech, pp 1849–1852. ISCA

Yanushevskaya I, Gobl C, Ní Chasaide A (2008) Voice quality and loudness in affect perception. In: Proceedings of speech prosody 2008, Campinas, Brazil, pp 29–32. ISCA

Acknowledgements

The presented work was developed within the Transregional Collaborative Research Centre SFB/TRR 62 “Companion Technology for Cognitive Technical Systems” funded by the German Research Foundation (DFG). The work of Martin Schels is supported by a scholarship of the Carl-Zeiss Foundation.

Appendix 1: Additional results

Table 3 lists a summary of the layered annotations.

Cite this article

Scherer, S., Glodek, M., Layher, G. et al. A generic framework for the inference of user states in human computer interaction. J Multimodal User Interfaces 6, 117–141 (2012). https://doi.org/10.1007/s12193-012-0093-9

DOI: https://doi.org/10.1007/s12193-012-0093-9