Abstract

In models of evolution and learning in games, a variety of convergence proofs rely on the assumption that the players’ choice functions are integrable. This assumption does not have an obvious game-theoretic interpretation. We address this gap by introducing probability models defined in terms of piecewise-smooth closed curves through \(\mathbb{R}^{n}\); these curves describe cycles in the performances of the available actions. We establish that a choice function is integrable if and only if, in the probability model induced by each such curve, the rate at which players switch to a randomly drawn action is uncorrelated with a certain binary signal. The binary signal specifies whether the performance of the randomly drawn action is improving or worsening, and can also be interpreted as a signal about the performances of actions other than the one randomly drawn.

Notes

This requirement on switching rates is not unreasonable in the models described above, in which agents are assumed to act in a completely myopic way. It would be much less reasonable in alternative models in which agents make simple forecasts about the likely directions of change in the performances of their actions before deciding which action to play. The use of such forecasts can generate dynamics with excellent convergence properties; see Shamma and Arslan [22] and Arslan and Shamma [1].

For background on population games and evolutionary dynamics, see Sandholm [21].

The restriction of the excess payoff vector π to the complement of \(\mathbb{R}^{n}_{-}\) in condition (1) reflects two facts. First, the excess payoff vector cannot lie in \(\mathrm{int}(\mathbb{R}^{n}_{-})\), since this would mean that every action generates a lower-than-average payoff, which is impossible because the average is a weighted average of the actions' payoffs. Second, the excess payoff vector \(\hat{F}(x)\) lies on \(\mathrm{bd}(\mathbb{R}^{n}_{-})\) if and only if x is a Nash equilibrium of F; see Proposition 3.4 of Sandholm [19].

Here and in what follows, we call π a “performance vector” to emphasize that it is an element of \(\mathbb{R}^{n}\), but despite this terminology, π should be viewed as a point in space rather than a velocity through space. Also, the interpretation of \(\rho(\pi) \in\mathbb{R}^{n}\) suggests that its components should be nonnegative, but this property is not needed in our analysis.

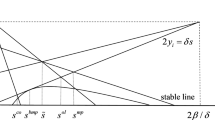

Our results depend on defining our probability model using the parameterization of C that moves at a constant \(\ell_{1}\) rate. In particular, if C is a closed curve of performance vectors induced by a closed trajectory through the set of population states, it can be endowed with this trajectory’s parameterization, but we do not use this parameterization to define our probability model.

Without the independence of Z and I, this implication would not hold. The situation is analogous to a basic one in Bayesian statistics. There, two observations that are independent conditional on an unknown (i.e., random) parameter are not unconditionally independent, because the value of the first observation provides information about the parameter, which in turn provides information about the second observation.
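The Bayesian analogy can be made concrete with a small worked example. The symbols below are illustrative assumptions and do not appear in the paper: θ is an unknown parameter that equals 1/4 or 3/4 with equal probability, and \(X_{1}\) and \(X_{2}\) are observations that, conditional on θ, are independent and each equal 1 with probability θ.

\[
\begin{aligned}
P(X_{1}=1) = P(X_{2}=1) &= \mathbb{E}[\theta] = \tfrac{1}{2}\cdot\tfrac{1}{4} + \tfrac{1}{2}\cdot\tfrac{3}{4} = \tfrac{1}{2},\\
P(X_{1}=1,\,X_{2}=1) &= \mathbb{E}[\theta^{2}] = \tfrac{1}{2}\cdot\tfrac{1}{16} + \tfrac{1}{2}\cdot\tfrac{9}{16} = \tfrac{5}{16} \;>\; \tfrac{1}{4} = P(X_{1}=1)\,P(X_{2}=1).
\end{aligned}
\]

In this sketch the two observations are positively correlated unconditionally even though they are independent given θ, because observing \(X_{1}=1\) makes the larger value of θ more likely.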

References

[1] Arslan G, Shamma JS (2006) Anticipatory learning in general evolutionary games. In: Proceedings of the 45th IEEE conference on decision and control, pp 6289–6294

[2] Benaïm M, Weibull JW (2003) Deterministic approximation of stochastic evolution in games. Econometrica 71:873–903

[3] Blackwell D (1956) Controlled random walks. In: Gerretsen JCH, Groot JD (eds) Proceedings of the international conference of mathematicians 1954, vol 3. North Holland, Amsterdam, pp 336–338

[4] Brown GW (1949) Some notes on computation of games solutions. Report P-78, The Rand Corporation

[5] Brown GW (1951) Iterative solutions of games by fictitious play. In: Koopmans TC et al. (eds) Activity analysis of production and allocation. Wiley, New York, pp 374–376

[6] Brown GW, von Neumann J (1950) Solutions of games by differential equations. In: Kuhn HW, Tucker AW (eds) Contributions to the theory of games I. Annals of mathematics studies, vol 24. Princeton University Press, Princeton, pp 73–79

[7] Fudenberg D, Kreps DM (1993) Learning mixed equilibria. Games Econ Behav 5:320–367

[8] Fudenberg D, Levine DK (1998) The theory of learning in games. MIT Press, Cambridge

[9] Hannan J (1957) Approximation to Bayes risk in repeated play. In: Dresher M, Tucker AW, Wolfe P (eds) Contributions to the theory of games III. Annals of mathematics studies, vol 39. Princeton University Press, Princeton, pp 97–139

[10] Hart S (2005) Adaptive heuristics. Econometrica 73:1401–1430

[11] Hart S, Mas-Colell A (2001) A general class of adaptive strategies. J Econ Theory 98:26–54

[12] Hofbauer J (2000) From Nash and Brown to Maynard Smith: equilibria, dynamics, and ESS. Selection 1:81–88

[13] Hofbauer J, Sandholm WH (2002) On the global convergence of stochastic fictitious play. Econometrica 70:2265–2294

[14] Hofbauer J, Sandholm WH (2009) Stable games and their dynamics. J Econ Theory 144:1665–1693

[15] Maynard Smith J, Price GR (1973) The logic of animal conflict. Nature 246:15–18

[16] Monderer D, Shapley LS (1996) Potential games. Games Econ Behav 14:124–143

[17] Roth G, Sandholm WH (2013) Stochastic approximations with constant step size and differential inclusions. SIAM J Control Optim 51:525–555

[18] Sandholm WH (2001) Potential games with continuous player sets. J Econ Theory 97:81–108

[19] Sandholm WH (2005) Excess payoff dynamics and other well-behaved evolutionary dynamics. J Econ Theory 124:149–170

[20] Sandholm WH (2009) Large population potential games. J Econ Theory 144:1710–1725

[21] Sandholm WH (2010) Population games and evolutionary dynamics. MIT Press, Cambridge

[22] Shamma JS, Arslan G (2005) Dynamic fictitious play, dynamic gradient play, and distributed convergence to Nash equilibria. IEEE Trans Autom Control 50:312–327

[23] Weibull JW (1996) The mass action interpretation. Excerpt from ‘The work of John Nash in game theory: Nobel seminar, December 8, 1994’. J Econ Theory 69:165–171

[24] Young HP (2004) Strategic learning and its limits. Oxford University Press, Oxford

Acknowledgements

I thank George Mailath for asking the question that motivated this paper, and Larry Samuelson and two referees for helpful comments. Financial support from the National Science Foundation under Grants SES-0092145 and SES-1155135 is gratefully acknowledged.

Cite this article

Sandholm, W.H. Probabilistic Interpretations of Integrability for Game Dynamics. Dyn Games Appl 4, 95–106 (2014). https://doi.org/10.1007/s13235-013-0082-y
