Abstract

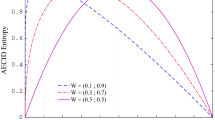

Several splitting criteria for binary classification trees are shown to be expressible as weighted sums of two values of divergence measures. This weighted-sum approach is then used to form two families of splitting criteria. One family contains the chi-squared and entropy criteria; the other contains the mean posterior improvement criterion. Members of both families are shown to have the property of exclusive preference. Furthermore, the optimal splits based on the proposed families are studied, and the best splits are found to depend on the family parameters. The results reveal interesting differences among the various criteria. Examples are given to demonstrate the usefulness of both families.
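
The weighted-sum idea can be illustrated with a minimal sketch. The abstract does not give the formulas, so everything below is an assumption made for illustration: the divergence is taken to be the Cressie-Read power divergence, the two values are the divergences of the left and right child class distributions from the parent distribution, and the weights are the child proportions. The names `power_divergence`, `split_criterion`, and `lam` are hypothetical and are not taken from the article.

```python
import math


def power_divergence(p, q, lam):
    """Cressie-Read power divergence of distribution p from q (assumes lam >= 0).

    lam = 1 gives half the Pearson chi-squared divergence;
    lam = 0 is handled as the limiting Kullback-Leibler divergence.
    """
    if abs(lam) < 1e-12:
        return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    total = sum(pi * ((pi / qi) ** lam - 1.0) for pi, qi in zip(p, q) if pi > 0)
    return total / (lam * (lam + 1.0))


def split_criterion(left_counts, right_counts, lam):
    """Weighted sum of two divergence values for a binary split.

    left_counts / right_counts hold per-class case counts in each child node.
    Both children are assumed to be non-empty.
    """
    n_left, n_right = sum(left_counts), sum(right_counts)
    n = n_left + n_right
    parent = [(l + r) / n for l, r in zip(left_counts, right_counts)]
    p_left = [c / n_left for c in left_counts]
    p_right = [c / n_right for c in right_counts]
    return (n_left / n) * power_divergence(p_left, parent, lam) + (
        n_right / n
    ) * power_divergence(p_right, parent, lam)


if __name__ == "__main__":
    left, right = [40, 10], [10, 40]
    # lam = 1: proportional to the Pearson chi-squared statistic of the 2x2 table.
    print(split_criterion(left, right, 1.0))
    # lam = 0: equals the entropy reduction (information gain) in nats.
    print(split_criterion(left, right, 0.0))
```

Under these assumptions, `lam = 1` multiplied by twice the sample size reproduces the Pearson chi-squared statistic of the class-by-child table, and `lam = 0` reproduces the entropy reduction, which is one way a single parameter can span both criteria.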

Cite this article

Shih, Y.-S. Families of splitting criteria for classification trees. Statistics and Computing 9, 309–315 (1999). https://doi.org/10.1023/A:1008920224518

DOI: https://doi.org/10.1023/A:1008920224518