Abstract

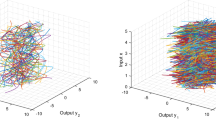

When an increasing number of principal components is extracted using the sequential method proposed in [1] by Bannour and Azimi-Sadjadi, the accumulated extraction error becomes dominant and affects the extraction of the remaining principal components. To improve this, we suggest that the initial weight vector for the extraction of the next component should be orthogonal to the eigensubspace spanned by the already extracted weight vectors. Simulation results show that both the convergence and the accuracy of the extraction are improved. Our improved method is also capable of extracting the full eigenspace accurately.
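The initialization idea can be illustrated with a minimal sketch, assuming the already extracted weight vectors are available as the rows of a NumPy array and are linearly independent. The function name `orthogonal_initial_weight` and its parameters are hypothetical and do not come from the paper. The sketch covers only the choice of starting vector (a random vector projected onto the orthogonal complement of the extracted subspace), not the recursive least squares update of [1].

```python
import numpy as np


def orthogonal_initial_weight(extracted, dim, rng=None):
    """Return a unit vector orthogonal to the span of the rows of `extracted`.

    `extracted` holds the weight vectors found so far (one per row) and is
    assumed to contain linearly independent vectors. Illustrative helper only.
    """
    rng = np.random.default_rng() if rng is None else rng
    w = rng.standard_normal(dim)  # random starting candidate

    if len(extracted) > 0:
        # Orthonormal basis Q (dim x k) for the span of the extracted vectors.
        Q, _ = np.linalg.qr(np.asarray(extracted, dtype=float).T)
        # Subtract the part of w that lies in that span.
        w = w - Q @ (Q.T @ w)

    norm = np.linalg.norm(w)
    if norm < 1e-12:
        # Happens when the extracted vectors already span the whole space.
        raise ValueError("no direction left outside the extracted subspace")
    return w / norm


# Example: two components already extracted in a 4-dimensional space.
W = np.array([[1.0, 0.0, 0.0, 0.0],
              [0.0, 1.0, 0.0, 0.0]])
w_init = orthogonal_initial_weight(W, dim=4, rng=np.random.default_rng(0))
print(W @ w_init)  # inner products with the extracted vectors, close to zero
```

Projecting through a QR basis rather than the raw weight vectors keeps the result orthogonal even when the extracted vectors are not exactly orthonormal, which is the situation the accumulated extraction error would produce.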

References

Bannour, S. and Azimi-Sadjadi, M. R.: Principal component extraction using recursive least squares learning, IEEE Trans. on Neural Networks 6 (1995), 457-469.

Iiguni, Y. and Sakai, H.: A real-time learning algorithm for a multilayered neural network based on the extended Kalman filter, IEEE Trans. on Signal Processing 40 (1992), 959-966.

Parlett, B. N.: The Symmetric Eigenvalue Problem, Prentice-Hall, Englewood Cliffs, NJ, 1980.

Leung, C. S., Wong, K. W., Sum, J. and Chan, L. W.: On-line training and pruning for the recursive least square algorithms, Electronics Letters 32 (1996), 2152-2153.

Chen, T.: Modified Oja's algorithms for principal subspace and minor subspace extraction, Neural Processing Letters 5 (1997), 105-110.

Cite this article

Wong, A.SY., Wong, KW. & Wong, CS. A Practical Sequential Method for Principal Component Analysis. Neural Processing Letters 11, 107–112 (2000). https://doi.org/10.1023/A:1009646500088

DOI: https://doi.org/10.1023/A:1009646500088