Abstract

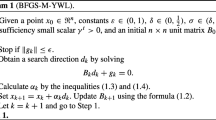

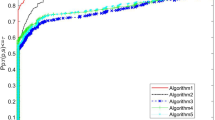

The BFGS method is the most effective of the quasi-Newton methods for solving unconstrained optimization problems. Wei, Li, and Qi [16] have proposed some modified BFGS methods based on the new quasi-Newton equation $B_{k+1} s_k = y_k^*$, where $y_k^* = y_k + A_k s_k$ and $A_k$ is some matrix. The average performance of Algorithm 4.3 in [16] is better than that of the BFGS method, but whether it converges superlinearly has remained an open question. This article proves the superlinear convergence of Algorithm 4.3 under suitable conditions.
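To make the modified quasi-Newton equation concrete, the following is a minimal sketch of a BFGS-type update in which the usual gradient difference is replaced by the modified vector (the sum of the gradient difference and the correction matrix applied to the step). It is not Algorithm 4.3 of [16]: the abstract does not specify the correction matrix, so the caller supplies it, and the function name, the helper names, and the rule of skipping the update when a curvature quantity is not positive are all assumptions made for illustration. The current approximation `B` is assumed to be symmetric.

```python
def matvec(M, v):
    """Multiply an n x n matrix (nested lists) by a length-n vector."""
    return [sum(m_ij * v_j for m_ij, v_j in zip(row, v)) for row in M]


def dot(u, v):
    """Inner product of two vectors given as lists."""
    return sum(a * b for a, b in zip(u, v))


def modified_bfgs_update(B, s, y, A):
    """Return B_next satisfying B_next s = y_star, where y_star = y + A s.

    B : current symmetric Hessian approximation (assumed symmetric)
    s : step, x_{k+1} - x_k
    y : gradient difference, g_{k+1} - g_k
    A : correction matrix, supplied by the caller (hypothetical choice)
    """
    n = len(s)
    As = matvec(A, s)
    y_star = [y[i] + As[i] for i in range(n)]  # modified difference vector
    Bs = matvec(B, s)
    sBs = dot(s, Bs)
    ys = dot(y_star, s)

    # Assumed safeguard: keep B unchanged unless both curvature terms
    # are positive, so the formula below is well defined.
    if ys <= 0.0 or sBs <= 0.0:
        return [row[:] for row in B]

    # Standard BFGS formula with y replaced by y_star.
    return [
        [B[i][j] - Bs[i] * Bs[j] / sBs + y_star[i] * y_star[j] / ys
         for j in range(n)]
        for i in range(n)
    ]


# Usage with made-up numbers: A is a small multiple of the identity.
B0 = [[1.0, 0.0], [0.0, 1.0]]
step = [1.0, 0.5]
grad_diff = [2.0, 1.5]
A0 = [[0.1, 0.0], [0.0, 0.1]]
B1 = modified_bfgs_update(B0, step, grad_diff, A0)
```

With the zero matrix as the correction, the sketch reduces to the ordinary BFGS update. In both cases the updated matrix maps the step to the (modified) difference vector, which is exactly what the quasi-Newton equation requires.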

References

C.G. Broyden, J.E. Dennis, and J.J. Moré, “On the local and superlinear convergence of quasi-Newton methods,” J. Inst. Math. Appl., vol. 12, pp. 223–246, 1973.

R. Byrd and J. Nocedal, “A tool for the analysis of quasi-Newton methods with application to unconstrained minimization,” SIAM J. Numer. Anal., vol. 26, pp. 727–739, 1989.

R. Byrd, J. Nocedal, and Y. Yuan, “Global convergence of a class of quasi-Newton methods on convex problems,” SIAM J. Numer. Anal., vol. 24, pp. 1171–1189, 1987.

Y. Dai, “Convergence properties of the BFGS algorithm,” SIAM J. Optim., vol. 13, pp. 693–701, 2003.

W.C. Davidon, “Variable metric methods for minimization,” Argonne National Laboratories Report, ANL-5990.

J.E. Dennis and J.J. Moré, “A characterization of superlinear convergence and its application to quasi-Newton methods,” Math. Comp., vol. 28, pp. 549–560, 1974.

J.E. Dennis and J.J. Moré, “Quasi-Newton methods, motivation and theory,” SIAM Rev., vol. 19, pp. 46–89, 1977.

A. Griewank and P.L. Toint, “Local convergence analysis for partitioned quasi-Newton updates,” Numer. Math., vol. 39, pp. 429–448, 1982.

D. Li and M. Fukushima, “A modified BFGS method and its global convergence in nonconvex minimization,” J. Comput. Appl. Math., vol. 129, pp. 15–35, 2001.

D. Li and M. Fukushima, “A globally and superlinearly convergent Gauss-Newton-based BFGS method for symmetric nonlinear equations,” SIAM J. Numer. Anal., vol. 37, pp. 152–172, 1999.

D. Li and M. Fukushima, “On the global convergence of the BFGS method for nonconvex unconstrained optimization problems,” SIAM J. Optim., vol. 11, pp. 1054–1064, 2001.

J.M. Perry, “A class of conjugate algorithms with a two step variable metric memory,” Discussion paper 269, Center for Mathematical Studies in Economics and Management Science, Northwestern University, 1977.

M.J.D. Powell, “A new algorithm for unconstrained optimization,” in Nonlinear Programming, J.B. Rosen, O.L. Mangasarian, and K. Ritter (Eds.), Academic Press, New York, 1970.

D.F. Shanno, “On the convergence of a new conjugate gradient algorithm,” SIAM J. Numer. Anal., vol. 15, pp. 1247–1257, 1978.

Z. Wei, L. Qi, and X. Chen, “An SQP-type method and its application in stochastic programming,” J. Optim. Theory Appl., vol. 116, pp. 205–228, 2003.

Z. Wei, G. Li, and L. Qi, “New quasi-Newton methods for unconstrained optimization problems,” to appear in Mathematical Programming.

Z. Wei, L. Qi, and S. Ito, “New step-size rules for optimization problems,” Department of Mathematics and Information Science, Guangxi University, Nanning, Guangxi, People's Republic of China, October 2000.

Y. Yuan and W. Sun, Theory and Methods of Optimization, Science Press of China, 1999.

Cite this article

Wei, Z., Yu, G., Yuan, G. et al. The Superlinear Convergence of a Modified BFGS-Type Method for Unconstrained Optimization. Computational Optimization and Applications 29, 315–332 (2004). https://doi.org/10.1023/B:COAP.0000044184.25410.39

DOI: https://doi.org/10.1023/B:COAP.0000044184.25410.39