Abstract

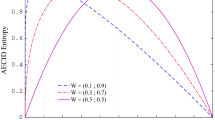

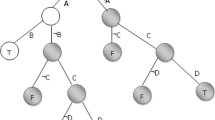

Decision trees are among the most popular machine learning algorithms due to their simplicity, versatility, and interpretability. They recursively partition the feature space into disjoint subsets, each of which should ideally contain instances of only a single class. At each node, the feature and condition that yield the most effective split are selected. Traditionally, the effectiveness of a split is measured with impurity metrics, which reward subsets dominated by a single class. In this paper, we discuss the underlying shortcoming of this assumption and introduce the notion of local class imbalance. We show that traditional splitting criteria cause class imbalance to increase as the tree grows. Therefore, even when the initial dataset is balanced, class imbalance becomes a problem during decision tree induction. At the same time, we show that existing skew-insensitive splitting criteria perform worse when the data are roughly balanced. To address this, we propose a simple yet effective hybrid decision tree architecture that dynamically switches between standard and skew-insensitive splitting criteria during decision tree induction. Our experimental study shows that local class imbalance is present in most standard classification problems and that the proposed hybrid approach can alleviate its influence.
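The abstract describes the mechanism without naming the exact criteria or the switching rule. The following is a minimal sketch for the binary case under stated assumptions: Gini gain stands in for the standard impurity-based criterion, the Hellinger distance used in Hellinger distance decision trees stands in for the skew-insensitive one, and the switch is triggered by a hypothetical imbalance-ratio threshold (`IR_THRESHOLD = 1.5`) at the node. None of these function names or values come from the paper.

```python
import math
from typing import Callable, Sequence, Tuple

Counts = Tuple[int, int]  # (negatives, positives) reaching a node or branch


def gini(counts: Sequence[int]) -> float:
    """Gini impurity of a class-count vector."""
    n = sum(counts)
    if n == 0:
        return 0.0
    return 1.0 - sum((c / n) ** 2 for c in counts)


def gini_gain(left: Counts, right: Counts) -> float:
    """Impurity decrease of a binary split (standard, skew-sensitive criterion)."""
    parent = (left[0] + right[0], left[1] + right[1])
    n = sum(parent)
    weighted = sum(sum(side) / n * gini(side) for side in (left, right))
    return gini(parent) - weighted


def hellinger_split(left: Counts, right: Counts) -> float:
    """Hellinger distance between the per-class branch distributions (HDDT-style).

    Uses only the fraction of each class sent to each branch, so rescaling
    one class leaves the value unchanged (skew-insensitive).
    """
    neg_total = left[0] + right[0]
    pos_total = left[1] + right[1]
    if neg_total == 0 or pos_total == 0:
        return 0.0  # a pure node has nothing left to separate
    return math.sqrt(sum(
        (math.sqrt(side[1] / pos_total) - math.sqrt(side[0] / neg_total)) ** 2
        for side in (left, right)
    ))


def imbalance_ratio(counts: Counts) -> float:
    """Majority-to-minority ratio of the instances at a node (local imbalance)."""
    minority, majority = min(counts), max(counts)
    return math.inf if minority == 0 else majority / minority


# HYPOTHETICAL switching threshold: an illustrative value, not taken from the paper.
IR_THRESHOLD = 1.5


def hybrid_criterion(node: Counts,
                     threshold: float = IR_THRESHOLD) -> Callable[[Counts, Counts], float]:
    """Pick the split criterion for a node from its local imbalance ratio."""
    if imbalance_ratio(node) > threshold:
        return hellinger_split
    return gini_gain


if __name__ == "__main__":
    # The same split behaviour (80% of positives and 20% of negatives go left),
    # evaluated at a balanced node and at a node with 10x more negatives.
    balanced = ((20, 80), (80, 20))
    skewed = ((200, 80), (800, 20))
    for name, (left, right) in (("balanced", balanced), ("skewed", skewed)):
        node = (left[0] + right[0], left[1] + right[1])
        chosen = hybrid_criterion(node)
        print(f"{name}: gini={gini_gain(left, right):.4f} "
              f"hellinger={hellinger_split(left, right):.4f} "
              f"uses={chosen.__name__}")
```

Running the script evaluates one split at a balanced node and at a node with ten times more negatives. The Gini gain drops from about 0.18 to about 0.026, while the Hellinger value stays at about 0.63. This illustrates how an impurity criterion's assessment of a split shifts with the node's class distribution, whereas the Hellinger value depends only on the per-class proportions.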

Acknowledgements

This work is supported by the VCU College of Engineering Dean's Undergraduate Research Initiative (DURI) program.

Copyright information

© 2018 Springer Nature Switzerland AG

About this paper

Cite this paper

Mulyar, A., Krawczyk, B. (2018). Addressing Local Class Imbalance in Balanced Datasets with Dynamic Impurity Decision Trees. In: Soldatova, L., Vanschoren, J., Papadopoulos, G., Ceci, M. (eds) Discovery Science. DS 2018. Lecture Notes in Computer Science, vol 11198. Springer, Cham. https://doi.org/10.1007/978-3-030-01771-2_1

DOI: https://doi.org/10.1007/978-3-030-01771-2_1

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-01770-5

Online ISBN: 978-3-030-01771-2

eBook Packages: Computer Science, Computer Science (R0)