Abstract

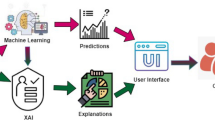

Interpretability is a fundamental property for the acceptance of machine learning models in highly regulated areas. Recently, deep neural networks have gained the attention of the scientific community due to their high accuracy across a wide range of classification problems. However, they are still seen as black-box models, making it hard to understand the reasons behind the labels they generate. This paper proposes a deep model with monotonic constraints that generates complementary explanations for its decisions, both in terms of style and depth. Furthermore, an objective framework for evaluating the explanations is presented. Our method is tested on two biomedical datasets and shows an improvement over traditional models in the quality of the explanations generated.
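To make the idea of a monotonic constraint more concrete, here is a minimal sketch of one common, generic way to enforce it in a small neural network: raw weights are passed through softplus so the effective weights stay non-negative, and only non-decreasing activations (tanh, sigmoid) are used, so the output can never decrease when an input increases. This is an illustrative assumption, not the architecture proposed in the paper; the names `MonotonicMLP` and `is_non_decreasing_in` are hypothetical.

```python
import math
import random


def softplus(x):
    # Numerically stable log(1 + exp(x)); always positive, so it maps
    # unconstrained parameters to non-negative effective weights.
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


class MonotonicMLP:
    """Hypothetical two-layer network, non-decreasing in every input.

    Non-negative weights composed with non-decreasing activations give a
    function that is non-decreasing in each input feature.
    """

    def __init__(self, n_inputs, n_hidden, seed=0):
        rng = random.Random(seed)
        # Raw (unconstrained) parameters; constraints are applied in __call__.
        self.w1 = [[rng.gauss(0, 1) for _ in range(n_inputs)] for _ in range(n_hidden)]
        self.b1 = [rng.gauss(0, 1) for _ in range(n_hidden)]
        self.w2 = [rng.gauss(0, 1) for _ in range(n_hidden)]
        self.b2 = rng.gauss(0, 1)

    def __call__(self, x):
        # Hidden layer: softplus(w) >= 0 and tanh is increasing.
        hidden = [
            math.tanh(sum(softplus(w) * xi for w, xi in zip(row, x)) + b)
            for row, b in zip(self.w1, self.b1)
        ]
        # Output layer: non-negative weights, then an increasing sigmoid.
        logit = sum(softplus(w) * h for w, h in zip(self.w2, hidden)) + self.b2
        return sigmoid(logit)


def is_non_decreasing_in(model, x, feature, steps=20, delta=0.5):
    # Numerically probe monotonicity by repeatedly increasing one feature.
    prev = model(x)
    probe = list(x)
    for _ in range(steps):
        probe[feature] += delta
        cur = model(probe)
        if cur < prev - 1e-12:  # small tolerance for floating-point noise
            return False
        prev = cur
    return True


if __name__ == "__main__":
    model = MonotonicMLP(n_inputs=3, n_hidden=8)
    x = [0.1, -0.4, 1.2]
    print(all(is_non_decreasing_in(model, x, f) for f in range(3)))  # True
```

The reparameterization keeps training unconstrained (any gradient step on the raw parameters still yields valid non-negative weights), which is one reason this pattern is popular; projecting weights onto non-negative values after each update is a common alternative.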



Acknowledgements

This work was partially funded by the Project “NanoSTIMA: Macro-to-Nano Human Sensing: Towards Integrated Multimodal Health Monitoring and Analytics/NORTE-01-0145-FEDER-00001” financed by the North Portugal Regional Operational Programme (NORTE 2020), under the PORTUGAL 2020 Partnership Agreement, and through the European Regional Development Fund (ERDF).


Copyright information

© 2018 Springer Nature Switzerland AG

About this paper

Cite this paper

Silva, W., Fernandes, K., Cardoso, M.J., Cardoso, J.S. (2018). Towards Complementary Explanations Using Deep Neural Networks. In: Stoyanov, D., et al. (eds) Understanding and Interpreting Machine Learning in Medical Image Computing Applications. MLCN DLF IMIMIC 2018. Lecture Notes in Computer Science, vol. 11038. Springer, Cham. https://doi.org/10.1007/978-3-030-02628-8_15


DOI: https://doi.org/10.1007/978-3-030-02628-8_15


Publisher Name: Springer, Cham

Print ISBN: 978-3-030-02627-1

Online ISBN: 978-3-030-02628-8

eBook Packages: Computer Science, Computer Science (R0)