Abstract

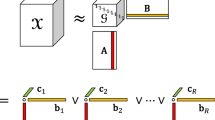

We propose IM-PARAFAC, a PARAFAC tensor decomposition method that enables rapid processing of large-scale tensors on Apache Spark, a distributed in-memory big data management system. Because Spark keeps data in memory, processing large amounts of data can cause memory overflow. To address this, IM-PARAFAC divides large input tensors and decomposes the resulting parts, so it can handle large tensors even in small distributed environments. Experimental results indicate that IM-PARAFAC can handle large tensors and reduces execution time.
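As a minimal sketch of why a PARAFAC decomposition can be divided at all (not the IM-PARAFAC partitioning scheme itself), assume a sparse three-way tensor stored as coordinate entries and split into contiguous ranges of its mode-1 index. Each row of the product \(X_{(1)}(C \odot B)\), usually the most expensive step of a PARAFAC-ALS iteration, depends only on the nonzeros that share that mode-1 index, so each block can be computed separately with less data held in memory at once. The helper names and the data layout are hypothetical, and everything runs sequentially on one machine rather than on Spark.

```python
from typing import Dict, List, Tuple

# Hypothetical single-machine sketch; not the IM-PARAFAC implementation.
# Each nonzero of a 3-way tensor is stored as ((i, j, k), value).
Entry = Tuple[Tuple[int, int, int], float]
Matrix = List[List[float]]


def partition_by_mode1(entries: List[Entry], dim_i: int, num_blocks: int) -> List[List[Entry]]:
    """Split nonzeros into num_blocks contiguous ranges of the mode-1 index i."""
    if num_blocks <= 0:
        raise ValueError("num_blocks must be positive")
    block_size = -(-dim_i // num_blocks)  # ceiling division
    blocks: List[List[Entry]] = [[] for _ in range(num_blocks)]
    for (i, j, k), v in entries:
        blocks[i // block_size].append(((i, j, k), v))
    return blocks


def mttkrp_block(block: List[Entry], B: Matrix, C: Matrix, rank: int) -> Dict[int, List[float]]:
    """Rows of X_(1) (C ⊙ B) for the mode-1 indices that occur in one block.

    Row i needs only the nonzeros whose first index is i, so blocks are independent.
    """
    rows: Dict[int, List[float]] = {}
    for (i, j, k), v in block:
        row = rows.setdefault(i, [0.0] * rank)
        for r in range(rank):
            row[r] += v * B[j][r] * C[k][r]
    return rows


def mttkrp_blockwise(entries: List[Entry], dim_i: int, B: Matrix, C: Matrix,
                     rank: int, num_blocks: int) -> Matrix:
    """Assemble the full I x R result one block at a time."""
    M: Matrix = [[0.0] * rank for _ in range(dim_i)]
    for block in partition_by_mode1(entries, dim_i, num_blocks):
        # On a cluster each block could be a separate task; here they run in sequence.
        for i, row in mttkrp_block(block, B, C, rank).items():
            M[i] = row
    return M
```

Calling `mttkrp_blockwise` with `num_blocks=1` and with a larger `num_blocks` returns the same matrix; the split changes only how much data each piece touches, which is the property a memory-bounded, block-by-block decomposition relies on.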


Notes

- 1.

\(X_{(1)}\) is the mode-1 unfolding of the tensor. The symbol \(*\) denotes the Hadamard product, \(\dagger\) denotes the matrix pseudo-inverse, and \(\odot\) denotes the Khatri-Rao product.

- 2.

The real-world datasets are provided by BigTensor: https://datalab.snu.ac.kr/bigtensor/dataset.

- 3.

BigTensor is a Hadoop-based tensor decomposition tool.

- 4.

S-PARAFAC is a Spark-based PARAFAC decomposition tool.
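The symbols in Note 1 are those of the PARAFAC alternating least squares (ALS) update. In the standard CP-ALS formulation (Kolda and Bader, 2009), for a third-order tensor with factor matrices \(A\), \(B\), \(C\), the mode-1 factor is updated as \(A \leftarrow X_{(1)}(C \odot B)(C^{\top}C * B^{\top}B)^{\dagger}\). The pure-Python sketch below implements that single update for a small dense tensor on one machine, to illustrate the notation rather than the authors' Spark implementation. The helper names are hypothetical, and it solves a linear system in place of the pseudo-inverse, which gives the same result only when the \(R \times R\) matrix \(C^{\top}C * B^{\top}B\) is nonsingular.

```python
from typing import List

# Hypothetical single-machine sketch of one CP-ALS step; not the IM-PARAFAC code.
Matrix = List[List[float]]


def matmul(X: Matrix, Y: Matrix) -> Matrix:
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)] for row in X]


def transpose(X: Matrix) -> Matrix:
    return [list(col) for col in zip(*X)]


def hadamard(X: Matrix, Y: Matrix) -> Matrix:
    """Elementwise (Hadamard) product, written * in Note 1."""
    return [[x * y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]


def khatri_rao(C: Matrix, B: Matrix) -> Matrix:
    """Column-wise Kronecker product C ⊙ B: row k*J + j holds C[k][r] * B[j][r]."""
    return [[ck[r] * bj[r] for r in range(len(ck))] for ck in C for bj in B]


def unfold_mode1(X: List[Matrix]) -> Matrix:
    """Mode-1 unfolding X_(1): entry X[i][j][k] lands in row i, column k*J + j."""
    I, J, K = len(X), len(X[0]), len(X[0][0])
    return [[X[i][j][k] for k in range(K) for j in range(J)] for i in range(I)]


def solve(V: Matrix, b: List[float]) -> List[float]:
    """Solve V x = b by Gaussian elimination with partial pivoting."""
    n = len(V)
    aug = [row[:] + [rhs] for row, rhs in zip(V, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("Gram matrix is singular; a true pseudo-inverse is needed")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (aug[r][n] - sum(aug[r][c] * x[c] for c in range(r + 1, n))) / aug[r][r]
    return x


def update_A(X: List[Matrix], B: Matrix, C: Matrix) -> Matrix:
    """A <- X_(1) (C ⊙ B) (C^T C * B^T B)^{-1}, one ALS step for the mode-1 factor."""
    M = matmul(unfold_mode1(X), khatri_rao(C, B))                   # I x R
    V = hadamard(matmul(transpose(C), C), matmul(transpose(B), B))  # R x R, symmetric
    # Because V is symmetric, row a of the new A satisfies V a = m for row m of M.
    return [solve(V, m) for m in M]
```

Building \(X\) from known factors and calling `update_A(X, B, C)` returns \(A\) up to rounding error, because \((C \odot B)^{\top}(C \odot B) = C^{\top}C * B^{\top}B\). The updates for \(B\) and \(C\) follow the same pattern with the mode-2 and mode-3 unfoldings.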

References

Kolda, T.G., Bader, B.W.: Tensor decompositions and applications. SIAM Rev. 51(3), 455–500 (2009)

Kang, U., Papalexakis, E.E., Harpale, A., Faloutsos, C.: GigaTensor: scaling tensor analysis up by 100 times - algorithms and discoveries. In: Proceedings of the 18th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, KDD 2012, Beijing, China, 12–16 August 2012, pp. 316–324. ACM (2012)

Park, N., Jeon, B., Lee, J., Kang, U.: BIGtensor: mining billion-scale tensor made easy. In: Proceedings of the 25th ACM International on Conference on Information and Knowledge Management (CIKM 2016), pp. 2457–2460. ACM (2016)

Yang, H.K., Yong, H.S.: S-PARAFAC: distributed tensor decomposition using Apache Spark. J. Korean Inst. Inf. Sci. Eng. (KIISE) 45(3), 280–287 (2018)

Acknowledgement

This research was supported by Basic Science Research Program through the National Research Foundation of Korea (NRF) funded by the Ministry of Education (NRF-2016R1D1A1B03931529).


Copyright information

© 2019 Springer Nature Switzerland AG

About this paper

Cite this paper

Yang, HK., Yong, HS. (2019). Distributed PARAFAC Decomposition Method Based on In-memory Big Data System. In: Li, G., Yang, J., Gama, J., Natwichai, J., Tong, Y. (eds) Database Systems for Advanced Applications. DASFAA 2019. Lecture Notes in Computer Science, vol 11448. Springer, Cham. https://doi.org/10.1007/978-3-030-18590-9_31


DOI: https://doi.org/10.1007/978-3-030-18590-9_31


Publisher Name: Springer, Cham

Print ISBN: 978-3-030-18589-3

Online ISBN: 978-3-030-18590-9

eBook Packages: Computer Science, Computer Science (R0)
