Abstract

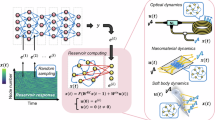

The implementation of artificial neural networks in hardware substrates is a major interdisciplinary enterprise. Well-suited candidates for physical implementations must combine nonlinear neurons with dedicated and efficient hardware solutions for both connectivity and training. Reservoir computing addresses the problems related to network connectivity and training in an elegant and efficient way. However, important questions regarding the impact of reservoir size and learning routines on the convergence speed during learning remain unaddressed. Here, we study in detail the learning process of a recently demonstrated photonic neural network based on a reservoir. We use a greedy algorithm to train our neural network for the task of chaotic signal prediction and analyze the learning-error landscape. Our results unveil fundamental properties of the system’s optimization hyperspace. In particular, we determine the convergence speed of learning as a function of reservoir size and find exceptional, close-to-linear scaling. This linear dependence, together with our parallel diffractive coupling, represents optimal scaling conditions for our photonic neural network scheme.
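The abstract names a greedy training algorithm and a convergence-speed measurement but does not spell either out. The following is a minimal, hypothetical sketch of what greedy learning of a reservoir readout can look like, not the authors' method. Every ingredient is an assumption made for illustration: Boolean (0/1) readout weights, a rule that flips one randomly chosen weight per epoch and keeps the flip only if the error drops, a random `tanh` network standing in for the photonic reservoir, the logistic map standing in for the chaotic signal, an error defined as the NMSE after a best gain/offset fit, and all function names (`run_reservoir`, `greedy_boolean_training`, `epochs_to_threshold`, and so on).

```python
import math
import random


def logistic_series(length, x0=0.3):
    """Chaotic logistic-map series x[k+1] = 4 x[k] (1 - x[k]); an assumed stand-in chaotic signal."""
    xs = [x0]
    for _ in range(length - 1):
        xs.append(4.0 * xs[-1] * (1.0 - xs[-1]))
    return xs


def run_reservoir(inputs, n_nodes, seed=0):
    """Drive a random tanh network (hypothetical stand-in for the photonic reservoir); return node states."""
    rng = random.Random(seed)
    # Entries scaled by 1/sqrt(N) so the recurrent spectral radius is typically well below 1.
    scale = 0.9 / math.sqrt(n_nodes)
    w_res = [[rng.uniform(-1.0, 1.0) * scale for _ in range(n_nodes)] for _ in range(n_nodes)]
    w_in = [rng.uniform(-1.0, 1.0) for _ in range(n_nodes)]
    x = [0.0] * n_nodes
    states = []
    for u in inputs:
        x = [math.tanh(sum(w_res[i][j] * x[j] for j in range(n_nodes)) + w_in[i] * u)
             for i in range(n_nodes)]
        states.append(x)
    return states


def affine_nmse(y, target):
    """NMSE after the best gain/offset fit of y to target, which equals 1 - r^2 (Pearson r)."""
    n = len(y)
    my, mt = sum(y) / n, sum(target) / n
    cov = sum((a - my) * (b - mt) for a, b in zip(y, target))
    vy = sum((a - my) ** 2 for a in y)
    vt = sum((b - mt) ** 2 for b in target)
    if vy == 0.0 or vt == 0.0:
        return 1.0  # a constant output carries no information about the target
    return max(0.0, 1.0 - cov * cov / (vy * vt))


def greedy_boolean_training(states, target, epochs, seed=1):
    """Assumed greedy rule: flip one Boolean readout weight per epoch, keep it only if the error drops."""
    rng = random.Random(seed)
    n_nodes = len(states[0])
    w = [rng.randint(0, 1) for _ in range(n_nodes)]
    y = [sum(wi * xi for wi, xi in zip(w, s)) for s in states]
    err = affine_nmse(y, target)
    history = [err]
    for _ in range(epochs):
        k = rng.randrange(n_nodes)
        sign = 1 - 2 * w[k]  # +1 switches node k on, -1 switches it off
        # Incremental update: only node k's contribution to the output changes.
        y_trial = [yt + sign * s[k] for yt, s in zip(y, states)]
        err_trial = affine_nmse(y_trial, target)
        if err_trial < err:
            w[k] = 1 - w[k]
            y, err = y_trial, err_trial
        history.append(err)
    return w, history


def epochs_to_threshold(history, threshold):
    """First epoch at which the error falls below threshold, or None if it never does."""
    for epoch, e in enumerate(history):
        if e < threshold:
            return epoch
    return None


if __name__ == "__main__":
    signal = logistic_series(400)
    washout = 50
    for n_nodes in (10, 20, 40):
        states = run_reservoir(signal[:-1], n_nodes)
        # One-step-ahead prediction: the state at time t should predict signal[t + 1].
        w, history = greedy_boolean_training(states[washout:], signal[washout + 1:], epochs=300)
        print(n_nodes, round(history[-1], 4), epochs_to_threshold(history, 0.5))
```

Because only one weight changes per epoch, each trial costs one pass over the time series rather than a full recomputation of the readout. Under these assumptions, convergence speed versus reservoir size can be probed by recording `epochs_to_threshold` for several `n_nodes`, loosely analogous to the scaling study the abstract describes; the threshold and sizes above are arbitrary choices, not values from the paper.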

Acknowledgements

This work has been supported by the EUR EIPHI program (Contract No. ANR-17-EURE-0002), by the BiPhoProc ANR project (No. ANR-14-OHRI-0002-02), by the Volkswagen Foundation NeuroQNet project and the ENERGETIC project of Bourgogne Franche-Comté. X.P. has received funding from the European Union’s Horizon 2020 research and innovation programme under the Marie Sklodowska-Curie grant agreement No. 713694 (MULTIPLY).

Copyright information

© 2019 Springer Nature Switzerland AG

About this paper

Cite this paper

Porte, X., Andreoli, L., Jacquot, M., Larger, L., Brunner, D. (2019). Reservoir-Size Dependent Learning in Analogue Neural Networks. In: Tetko, I., Kůrková, V., Karpov, P., Theis, F. (eds) Artificial Neural Networks and Machine Learning – ICANN 2019: Workshop and Special Sessions. ICANN 2019. Lecture Notes in Computer Science, vol 11731. Springer, Cham. https://doi.org/10.1007/978-3-030-30493-5_21

DOI: https://doi.org/10.1007/978-3-030-30493-5_21

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-30492-8

Online ISBN: 978-3-030-30493-5

eBook Packages: Computer Science; Computer Science (R0)