Abstract

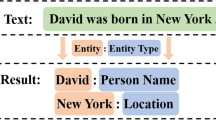

Named Entity Recognition (NER) is a basic task in Natural Language Processing (NLP), and it remains challenging in a variety of specialized applications. This paper aims to address global consistency in NER and thereby improve performance. Inspired by the human reading process, we propose NE-Reasoner, a model that combines deep neural networks with a memory-based artificial neural network to identify named entities with global consistency. The advantages of the model are: (1) a multi-layer deep architecture, which allows it to bootstrap the recognized entity set from coarse to fine; (2) a candidate pool memory mechanism, which allows it to exchange identified entity information between layers; and (3) a reasoner, which combines an encoder-decoder with cached information to infer entities globally. The experimental results show that NE-Reasoner can identify ambiguous words and named entities that were rarely or never encountered before.
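To make the idea of exchanging recognized entities between layers concrete, the following is a minimal toy sketch in Python. It is not the NE-Reasoner model: there are no neural networks here, and the names `toy_local_tagger`, `multi_pass_tag`, the `ENT`/`O` tag set, and the title-cue rule are all hypothetical stand-ins chosen for illustration. It only shows how a shared candidate pool, filled by one pass and read by the next, can make tagging consistent across a document.

```python
from typing import Callable, List, Set

Sentence = List[str]
Tagger = Callable[[Sentence, Set[str]], List[str]]


def toy_local_tagger(tokens: Sentence, pool: Set[str]) -> List[str]:
    """Hypothetical stand-in for one layer (assumption, not the paper's model).

    A token is tagged "ENT" if it follows a title cue (local evidence)
    or if it is already stored in the candidate pool (global evidence).
    """
    cues = {"Mr.", "Ms.", "Dr."}
    tags = []
    for i, tok in enumerate(tokens):
        after_cue = i > 0 and tokens[i - 1] in cues
        tags.append("ENT" if after_cue or tok in pool else "O")
    return tags


def multi_pass_tag(doc: List[Sentence], tagger: Tagger, num_layers: int = 2) -> List[List[str]]:
    """Tag a document in several passes, sharing entities via a candidate pool."""
    pool: Set[str] = set()  # entities recognized by the previous pass
    tagged: List[List[str]] = []
    for _ in range(num_layers):
        # Each pass reads the pool written by the pass before it.
        tagged = [tagger(sent, pool) for sent in doc]
        # Refresh the pool with every token tagged as an entity in this pass.
        pool = {
            tok
            for sent, tags in zip(doc, tagged)
            for tok, tag in zip(sent, tags)
            if tag == "ENT"
        }
    return tagged


doc = [["Dr.", "Smith", "arrived", "."], ["Smith", "spoke", "first", "."]]
one_pass = multi_pass_tag(doc, toy_local_tagger, num_layers=1)
two_pass = multi_pass_tag(doc, toy_local_tagger, num_layers=2)
print(one_pass[1])
print(two_pass[1])
```

With a single pass, the second mention of "Smith" has no local cue and is missed; with two passes, the pool carries the first mention forward so both mentions receive the same tag. In the paper's setting the per-layer tagger and the pool lookup would be learned components rather than hand-written rules.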

Copyright information

© 2020 Springer Nature Switzerland AG

About this paper

Cite this paper

Yin, X., Liu, R., Zheng, D., Lu, Z. (2020). A Deep Learning Based Reasoner for Global Consistency in Named Entity Recognition. In: Liu, Y., Wang, L., Zhao, L., Yu, Z. (eds) Advances in Natural Computation, Fuzzy Systems and Knowledge Discovery. ICNC-FSKD 2019. Advances in Intelligent Systems and Computing, vol 1074. Springer, Cham. https://doi.org/10.1007/978-3-030-32456-8_7

DOI: https://doi.org/10.1007/978-3-030-32456-8_7

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-32455-1

Online ISBN: 978-3-030-32456-8

eBook Packages: Intelligent Technologies and Robotics (R0)