Abstract

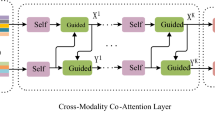

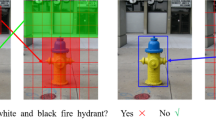

Better capturing the interactions between different modalities has recently become an active research topic in visual question answering (VQA). Inspired by how humans process visual information, we propose a VQA method based on intra-modality feature interaction with a self-attention mechanism (IMFI-SA). We adopt object-level features extracted with bottom-up attention, instead of grid feature maps, to capture fine-grained information in images. Moreover, the proposed IMFI-SA model extracts the intra-modality interactions within the question modality and within the image modality separately. Finally, we combine the enhanced object-level feature interactions, weighted by top-down cross-attention, with the question feature interactions to predict the answer for a given question and image. Experimental results on the VQA 2.0 dataset show that the proposed method outperforms existing methods in reasoning-based answer generation, especially on counting questions.
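The abstract gives no equations, so the following is only a minimal sketch of the general pattern it describes: scaled dot-product self-attention applied separately within each modality (intra-modality interaction), followed by question-guided top-down attention over the object features. The dimensions, the random weights, the mean-pooled question vector, the dot-product relevance score and the element-wise fusion are all assumptions for illustration, not details taken from the paper.

```python
import math
import random


def matmul(a, b):
    """Multiply an (n x k) matrix by a (k x m) matrix, both stored as lists of rows."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(m):
    return [list(col) for col in zip(*m)]


def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    top = max(xs)
    exps = [math.exp(x - top) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x, wq, wk, wv):
    """Single-head scaled dot-product self-attention within one modality.

    Each row of x is one feature vector (an object region or a question word),
    and every row attends to every row of the same modality.
    Returns (outputs, attention_weights).
    """
    q, k, v = matmul(x, wq), matmul(x, wk), matmul(x, wv)
    d = len(k[0])
    scores = matmul(q, transpose(k))
    weights = [softmax([s / math.sqrt(d) for s in row]) for row in scores]
    return matmul(weights, v), weights


def top_down_attention(objects, question):
    """Weight object features by their relevance to a question vector.

    Assumption: relevance is a plain dot product; Anderson et al. (2018)
    use a learned nonlinear layer on the concatenated features instead.
    """
    alpha = softmax([sum(o * q for o, q in zip(obj, question)) for obj in objects])
    dim = len(objects[0])
    attended = [sum(a * obj[j] for a, obj in zip(alpha, objects)) for j in range(dim)]
    return attended, alpha


def random_matrix(rows, cols, rng):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


rng = random.Random(0)
d = 4
objects = random_matrix(3, d, rng)  # stand-ins for 3 bottom-up region features
words = random_matrix(5, d, rng)    # stand-ins for 5 question-word features

# Intra-modality interaction: each modality attends only to itself.
wq_o, wk_o, wv_o = (random_matrix(d, d, rng) for _ in range(3))
wq_w, wk_w, wv_w = (random_matrix(d, d, rng) for _ in range(3))
obj_feats, obj_weights = self_attention(objects, wq_o, wk_o, wv_o)
word_feats, _ = self_attention(words, wq_w, wk_w, wv_w)

# Question summary: mean of the enhanced word features (an assumption).
question = [sum(col) / len(word_feats) for col in zip(*word_feats)]

# Cross-modal step: question-guided (top-down) attention over objects.
image_vec, alpha = top_down_attention(obj_feats, question)

# Fusion by element-wise product (an assumed choice); an answer classifier
# over candidate answers would take `joint` as input.
joint = [i * q for i, q in zip(image_vec, question)]
print(len(joint), [round(a, 3) for a in alpha])
```

Keeping the two self-attention passes separate is what makes the interaction "intra-modality": region features never see word features until the top-down step, where the question only decides how much each region contributes.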



Acknowledgments

This work was supported by the National Natural Science Foundation of China (61773272, 61272258, 61301299), the Natural Science Foundation of the Jiangsu Higher Education Institutions of China (19KJA230001), and the Priority Academic Program Development of Jiangsu Higher Education Institutions.


Copyright information

© 2019 Springer Nature Switzerland AG

About this paper

Cite this paper

Shao, H., Xu, Y., Ji, Y., Yang, J., Liu, C. (2019). Intra-Modality Feature Interaction Using Self-attention for Visual Question Answering. In: Gedeon, T., Wong, K., Lee, M. (eds) Neural Information Processing. ICONIP 2019. Communications in Computer and Information Science, vol 1143. Springer, Cham. https://doi.org/10.1007/978-3-030-36802-9_24


DOI: https://doi.org/10.1007/978-3-030-36802-9_24


Publisher Name: Springer, Cham

Print ISBN: 978-3-030-36801-2

Online ISBN: 978-3-030-36802-9

eBook Packages: Computer Science, Computer Science (R0)