Abstract

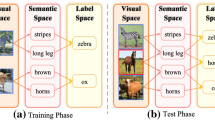

Zero-shot learning (ZSL), i.e., classifying patterns for which labeled training data are lacking, is a challenging yet important research topic. One of the most common approaches to ZSL is to map the data (e.g., images) and the semantic attributes into the same embedding space. However, for coarse-grained classification tasks, the samples of each class tend to be unevenly distributed. As a result, the learned embedding function may map the attributes to an inappropriate location, which limits classification performance. In this paper, we propose a novel regularized deep embedding model for ZSL in which a self-focus mechanism is constructed to constrain the learning of the embedding function. During training, the distances along different dimensions of the embedding space are focused conditionally on the class. The location of each prototype mapped from the attributes can thereby be adjusted according to the distribution of the samples of its class. Moreover, over-fitting of the embedding function to the known classes is mitigated. A series of experiments on four commonly used zero-shot databases shows that the proposed method attains significant improvement on coarse-grained data sets.
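The idea of class-conditioned focus on embedding dimensions can be illustrated with a minimal sketch. The abstract does not specify the form of the self-focus mechanism, so everything below is an assumption made for illustration: the focus is modeled as a softmax-normalized weight per dimension and per class, the prototypes are fixed toy vectors standing in for the output of a learned attribute embedding, and the names `focused_distance`, `classify`, and `focus_logits` are hypothetical.

```python
import math


def softmax(scores):
    """Normalize raw scores into non-negative weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def focused_distance(feature, prototype, focus):
    """Squared Euclidean distance with one weight per embedding dimension."""
    return sum(w * (f - p) ** 2 for f, p, w in zip(feature, prototype, focus))


def classify(feature, prototypes, focus_logits):
    """Return the class whose prototype is nearest under that class's own focus.

    Assumption: each class carries its own focus logits, so the dimensions
    that matter for the distance differ from class to class.
    """
    best_label, best_dist = None, float("inf")
    for label, prototype in prototypes.items():
        focus = softmax(focus_logits[label])
        dist = focused_distance(feature, prototype, focus)
        if dist < best_dist:
            best_label, best_dist = label, dist
    return best_label


# Toy 2-D embedding space. In a real model the prototypes would be produced
# by an embedding network applied to class attributes; here they are fixed.
prototypes = {"zebra": [1.0, 0.0], "whale": [0.0, 1.0]}
# Hypothetical focus logits: "zebra" emphasizes dimension 0, "whale" dimension 1.
focus_logits = {"zebra": [2.0, 0.0], "whale": [0.0, 2.0]}

sample = [0.9, 0.6]  # image feature already mapped into the embedding space
print(classify(sample, prototypes, focus_logits))
```

In this sketch a class with widely spread samples along one dimension could be given a low weight on that dimension, so that spread contributes less to the distance from its prototype.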

Notes

1. Available at https://github.com/lzrobots/DeepEmbeddingModel_ZSL.

Acknowledgements

The work was partially supported by the National Natural Science Foundation of China under nos. 61876155 and 61876154; the Natural Science Foundation of the Jiangsu Higher Education Institutions of China under no. 17KJD520010; the Suzhou Science and Technology Program under nos. SYG201712 and SZS201613; the Natural Science Foundation of Jiangsu Province under nos. BK20181189 and BK20181190; the Key Program Special Fund in XJTLU under nos. KSF-A-01, KSF-P-02, KSF-E-26, and KSF-A-10; and the XJTLU Research Development Fund under no. RDF-16-02-49.

Copyright information

© 2020 Springer Nature Switzerland AG

About this paper

Cite this paper

Yang, G., Huang, K., Zhang, R., Goulermas, J.Y., Hussain, A. (2020). Self-focus Deep Embedding Model for Coarse-Grained Zero-Shot Classification. In: Ren, J., et al. Advances in Brain Inspired Cognitive Systems. BICS 2019. Lecture Notes in Computer Science, vol 11691. Springer, Cham. https://doi.org/10.1007/978-3-030-39431-8_2

DOI: https://doi.org/10.1007/978-3-030-39431-8_2

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-39430-1

Online ISBN: 978-3-030-39431-8

eBook Packages: Computer Science (R0)