Abstract

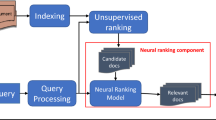

Neural information retrieval (NeuIR) has recently attracted considerable interest, and a variety of neural models have been proposed for the core ranking problem. Beyond continually advancing state-of-the-art ranking performance, the community has called for more analysis and understanding of these emerging neural ranking models. In this paper, we analyze them from a traditional perspective, namely term dependence. Most existing neural ranking models can be grouped into three categories according to their underlying assumption about query term dependence: independent models, dependent models, and hybrid models. We conduct rigorous empirical experiments with several representative models from these three categories on a benchmark dataset and a large click-through dataset. Interestingly, we find that no single type of model consistently outperforms the others across different search queries, whereas an oracle that selects the right model for each query obtains a significant performance improvement. Based on this analysis, we introduce an adaptive strategy for neural ranking models. We hypothesize that the term dependence in a query can be measured through the divergence between its independent and dependent representations. We therefore propose a dependence gate, built on this divergence representation, that softly selects among neural ranking models for each query. Experimental results verify the effectiveness of the adaptive strategy.
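The idea of a gate driven by the divergence between two query representations can be illustrated with a minimal sketch. This is not the authors' implementation: the abstract does not specify how the representations, the divergence, or the gate are computed. The sketch assumes, purely for illustration, that the independent representation is the mean of the term vectors, that the dependent representation averages element-wise products of adjacent term vectors, that the divergence is their difference, and that the gate is a sigmoid over a linear projection. All function names and parameters (`mean_pool`, `adjacent_pair_pool`, `dependence_gate`, `gated_score`, `weights`, `bias`) are hypothetical.

```python
import math
from typing import List, Sequence

Vector = List[float]


def mean_pool(term_vectors: Sequence[Vector]) -> Vector:
    """Order-insensitive stand-in for an 'independent' query representation."""
    n, dim = len(term_vectors), len(term_vectors[0])
    return [sum(v[i] for v in term_vectors) / n for i in range(dim)]


def adjacent_pair_pool(term_vectors: Sequence[Vector]) -> Vector:
    """Order-sensitive stand-in for a 'dependent' query representation.

    Assumption: adjacent terms are composed by element-wise product and
    the results are averaged. A one-term query has no pairs, so it falls
    back to the independent representation.
    """
    if len(term_vectors) < 2:
        return mean_pool(term_vectors)
    pairs = [
        [a * b for a, b in zip(u, v)]
        for u, v in zip(term_vectors, term_vectors[1:])
    ]
    return mean_pool(pairs)


def dependence_gate(
    term_vectors: Sequence[Vector], weights: Vector, bias: float = 0.0
) -> float:
    """Map the divergence between the two representations to a value in (0, 1)."""
    indep = mean_pool(term_vectors)
    dep = adjacent_pair_pool(term_vectors)
    # Assumed divergence: plain difference vector between the representations.
    divergence = [d - i for d, i in zip(dep, indep)]
    logit = sum(w * x for w, x in zip(weights, divergence)) + bias
    return 1.0 / (1.0 + math.exp(-logit))


def gated_score(gate: float, score_independent: float, score_dependent: float) -> float:
    """Soft selection: convex combination of the two models' relevance scores."""
    return gate * score_dependent + (1.0 - gate) * score_independent


# Toy usage with made-up 2-dimensional term vectors and made-up model scores.
query = [[1.0, 0.0], [0.0, 1.0]]
g = dependence_gate(query, weights=[1.0, 1.0])
final = gated_score(g, score_independent=1.0, score_dependent=3.0)
```

In a trained system the gate parameters would be learned jointly with the ranking models; here they are fixed by hand only to show the data flow. Because the combination is convex, the final score always lies between the scores of the two underlying models.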

Acknowledgements

This work was funded by the National Natural Science Foundation of China (NSFC) under Grants No. 61902381, 61425016, 61722211, 61773362, and 61872338; the Youth Innovation Promotion Association CAS under Grants No. 20144310 and 2016102; the National Key R&D Program of China under Grant No. 2016QY02D0405; and the Foundation and Frontier Research Key Program of Chongqing Science and Technology Commission (No. cstc2017jcyjBX0059).

Copyright information

© 2020 Springer Nature Switzerland AG

About this paper

Cite this paper

Fan, Y., Guo, J., Lan, Y., Cheng, X. (2020). Understanding and Improving Neural Ranking Models from a Term Dependence View. In: Wang, F., et al. Information Retrieval Technology. AIRS 2019. Lecture Notes in Computer Science, vol 12004. Springer, Cham. https://doi.org/10.1007/978-3-030-42835-8_11

DOI: https://doi.org/10.1007/978-3-030-42835-8_11

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-42834-1

Online ISBN: 978-3-030-42835-8

eBook Packages: Computer Science, Computer Science (R0)