Abstract

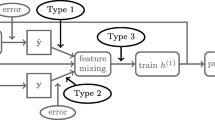

A multi-label classifier assigns a set of labels to each data object. A natural requirement in many end-use applications is that the classifier also provide a well-calibrated confidence (probability) indicating how likely the predicted set is to be correct; for example, an application may automate high-confidence predictions while manually verifying low-confidence ones. The simplest multi-label classifier, Binary Relevance (BR), applies one binary classifier to each label independently and takes the product of the individual label probabilities as the overall label-set probability (confidence). Despite its known drawbacks, such as suboptimal predictions and poorly calibrated confidence scores, BR is widely used in practice because of its speed and simplicity. In this work we seek to improve both BR’s confidence estimation and its predictions through a post-calibration and reranking procedure. We take the BR-predicted label set and its product score as features, extract additional features from the prediction itself to capture label constraints, and apply Gradient Boosted Trees (GB) as a calibrator that maps these features to a calibrated confidence score. GB not only produces well-calibrated scores (aligned with accuracy and sharp) but also models label interactions, correcting a critical flaw in BR. We further show that reranking label sets by the new calibrated confidence yields set predictions as accurate as those of state-of-the-art multi-label classifiers, while remaining calibrated, simpler, and faster.
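As a rough illustration of the BR product score described above, the following minimal sketch computes a predicted label set and its uncalibrated confidence from per-label probabilities. The function names, the 0.5 threshold, the example labels, and the `set_cardinality` feature are assumptions made for illustration; the abstract does not specify the exact feature set fed to the calibrator.

```python
from math import prod


def br_predict(label_probs, threshold=0.5):
    """Binary Relevance prediction for one data object.

    label_probs maps each label to the probability, from its own
    independent binary classifier, that the label is relevant.
    Returns the predicted label set and the product score, i.e. the
    probability BR assigns to that exact set.
    """
    # Threshold of 0.5 is an assumption; it picks the most probable set under BR.
    predicted = frozenset(l for l, p in label_probs.items() if p >= threshold)
    # Multiply p for included labels and (1 - p) for excluded labels.
    score = prod(p if l in predicted else 1.0 - p for l, p in label_probs.items())
    return predicted, score


def calibrator_features(label_probs, threshold=0.5):
    """Hypothetical feature vector for a downstream calibrator."""
    predicted, score = br_predict(label_probs, threshold)
    return {
        "br_product_score": score,          # feature named in the abstract
        "set_cardinality": len(predicted),  # illustrative extra feature (assumption)
    }


# Example with made-up probabilities for three labels.
probs = {"sports": 0.9, "politics": 0.2, "finance": 0.6}
pred_set, confidence = br_predict(probs)
features = calibrator_features(probs)
```

In this example the product score is 0.9 × (1 − 0.2) × 0.6 = 0.432. Because the product assumes the labels are independent, it tends to be a poor estimate of the true set-level accuracy, which is the motivation for learning a separate calibrator on top of it.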

Notes

- 1.

- 2. This particular way of bucketing is only for visualization purposes; when we evaluate calibration quantitatively, we follow the standard practice of using 10 equal-width buckets.
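For readers unfamiliar with bucketed calibration evaluation, here is a minimal sketch using 10 equal-width confidence buckets. It assumes the quantity reported is the size-weighted average gap between mean confidence and accuracy per bucket (often called expected calibration error); the note above states only the bucketing scheme, so the metric and the function name are assumptions.

```python
def bucketed_calibration_error(confidences, correct, n_buckets=10):
    """Weighted average |mean confidence - accuracy| over equal-width buckets.

    confidences: predicted set-level confidences in [0, 1].
    correct: booleans, True when the predicted label set is exactly right.
    """
    buckets = [[] for _ in range(n_buckets)]
    for conf, ok in zip(confidences, correct):
        # A confidence of exactly 1.0 falls into the last bucket.
        idx = min(int(conf * n_buckets), n_buckets - 1)
        buckets[idx].append((conf, ok))

    total = len(confidences)
    error = 0.0
    for bucket in buckets:
        if not bucket:
            continue  # empty buckets contribute nothing
        mean_conf = sum(conf for conf, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        error += (len(bucket) / total) * abs(mean_conf - accuracy)
    return error


# Made-up example: two confident correct predictions, two unconfident wrong ones.
example_error = bucketed_calibration_error(
    [0.95, 0.95, 0.15, 0.15], [True, True, False, False]
)
```

A perfectly calibrated scorer would have zero gap in every bucket; larger values indicate that the reported confidences drift away from the observed accuracy.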

Acknowledgments

We thank Jeff Woodward for sharing his observation regarding prediction set cardinality, Pavel Metrikov for helpful discussions on the model design, and the reviewers for suggesting related work. This work was generously supported by a grant from the Massachusetts General Physicians Organization.

Copyright information

© 2020 Springer Nature Switzerland AG

About this paper

Cite this paper

Li, C., Pavlu, V., Aslam, J., Wang, B., Qin, K. (2020). Learning to Calibrate and Rerank Multi-label Predictions. In: Brefeld, U., Fromont, E., Hotho, A., Knobbe, A., Maathuis, M., Robardet, C. (eds) Machine Learning and Knowledge Discovery in Databases. ECML PKDD 2019. Lecture Notes in Computer Science(), vol 11908. Springer, Cham. https://doi.org/10.1007/978-3-030-46133-1_14

DOI: https://doi.org/10.1007/978-3-030-46133-1_14

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-46132-4

Online ISBN: 978-3-030-46133-1

eBook Packages: Computer Science, Computer Science (R0)